Arm announces the 'Arm AGI CPU,' the first in-house silicon product in its 35-year history

Arm, a UK-based semiconductor company, announced the 'Arm AGI CPU' on March 24, 2026, as a new class of product to support next-generation AI infrastructure. This is the first time in Arm's more than 35-year history that the company has directly offered a finished silicon product of its own design. Arm positions it as the first in a new line of data center silicon products based on the Arm Neoverse platform.

Announcing Arm AGI CPU: The silicon foundation for the agentic AI cloud era - Arm Newsroom

Arm expands compute platform to silicon products in historic company first - Arm Newsroom

https://newsroom.arm.com/news/arm-agi-cpu-launch

A look inside the Arm AGI CPU: Core features and benefits - YouTube

Arm AGI CPU: Reactions from global technology leaders - YouTube

Arm moves beyond IP with AGI CPU silicon — 136-core data center chip targets AI infrastructure with Meta as lead partner | Tom's Hardware

https://www.tomshardware.com/tech-industry/semiconductors/arm-launches-its-first-data-center-cpu

According to Arm, the Arm AGI CPU was designed to meet the infrastructure needs of the AI agent era. As AI systems operate globally and continuously, and software agents make real-time decisions in collaboration with multiple models, CPUs have become a core component of modern infrastructure. They handle everything from controlling accelerators, managing memory and storage, and scheduling workloads to moving data between systems and coordinating fan-out across numerous agents. To address these changes, Arm has introduced the Arm AGI CPU as a highly efficient CPU built for high-density, rack-scale deployment.

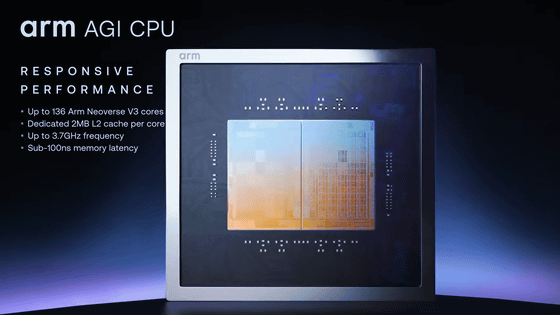

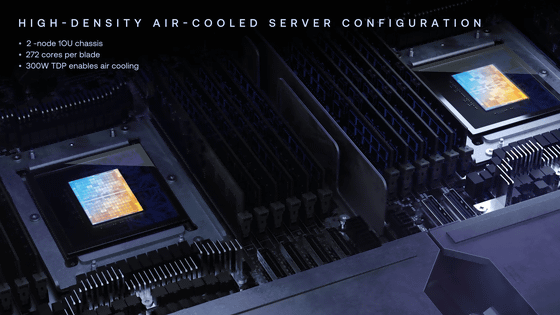

The Arm AGI CPU is manufactured using TSMC's 3nm process technology and features up to 136 Arm Neoverse V3 cores. Each core has its own dedicated 2MB L2 cache and operates at a maximum frequency of 3.7GHz. It employs a dual-chiplet design, prioritizing low latency by placing memory and I/O on the same die, targeting memory access times of less than 100ns.

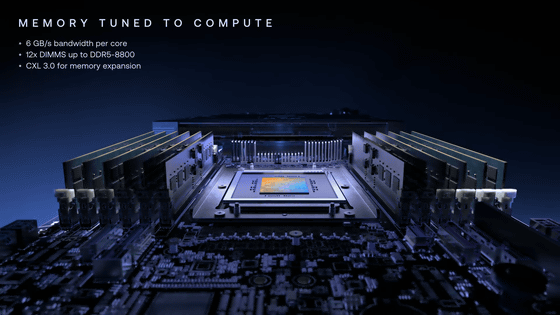

The memory subsystem supports up to 12 channels of DDR5-8800, for a total bandwidth of over 800GB/s, or about 6GB/s per core.
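The bandwidth figures quoted above can be sanity-checked with simple arithmetic. The following is a rough sketch, not Arm's own calculation: it assumes each DDR5 channel moves 8 bytes (64 data bits) per transfer, which the article does not state, and it computes a theoretical peak rather than sustained bandwidth. The function name `peak_bandwidth_gb_s` is made up for illustration.

```python
# Back-of-the-envelope check of the quoted memory bandwidth figures.
# Channel count, transfer rate and core count come from the article;
# the 8-byte channel width is an ASSUMPTION (64 data bits per channel).

CHANNELS = 12                # DDR5 channels per chip (from the article)
TRANSFER_RATE_MT_S = 8800    # DDR5-8800: mega-transfers per second
BYTES_PER_TRANSFER = 8       # assumption: 64-bit data path per channel
CORES = 136                  # maximum core count (from the article)


def peak_bandwidth_gb_s(channels: int, mt_s: int, bytes_per_transfer: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    megabytes_per_second = channels * mt_s * bytes_per_transfer
    return megabytes_per_second / 1000


total_gb_s = peak_bandwidth_gb_s(CHANNELS, TRANSFER_RATE_MT_S, BYTES_PER_TRANSFER)
per_core_gb_s = total_gb_s / CORES

print(f"Peak bandwidth: {total_gb_s:.1f} GB/s")
print(f"Per core:       {per_core_gb_s:.2f} GB/s")
```

Under that assumption the theoretical peak lands above 800GB/s and the per-core share slightly above 6GB/s, which is consistent with the figures Arm quotes.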

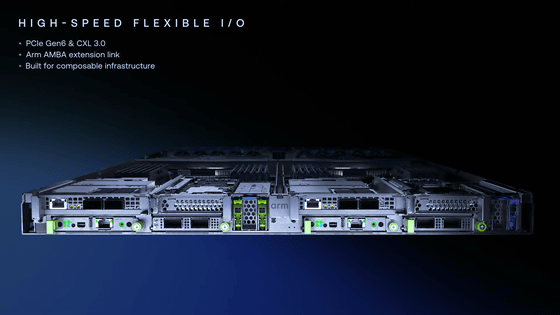

The chip supports memory capacities of up to 6TB and provides 96 lanes of PCIe Gen6, CXL 3.0, and AMBA CHI extension links for I/O. Notably, all of this is delivered within a 300-watt thermal design power (TDP).

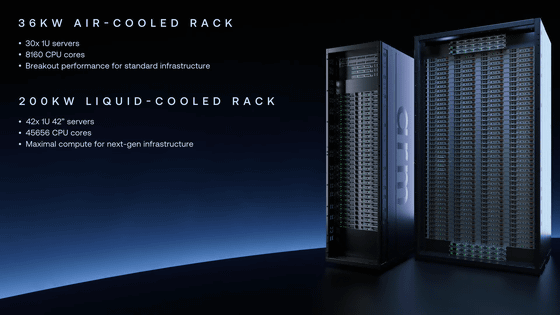

In terms of performance, Arm says its reference server configuration can deliver more than twice the performance per rack of the latest x86 systems. Specifically, the reference design is a 1OU (one Open Rack unit), dual-node blade equipped with two chips, for 272 cores per blade.

A 36kW air-cooled rack holding 30 of these blades provides a total of 8,160 cores. A 200kW liquid-cooled configuration, developed in partnership with Supermicro, can accommodate 336 Arm AGI CPUs, for more than 45,000 cores. Arm explains that the high memory bandwidth allows for more effective threads per rack, and that the high single-thread performance and efficiency of the Arm Neoverse V3 cores contribute to these rack-level performance improvements.
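The rack-level core counts follow directly from the per-chip figures, as the short sketch below shows. It also sums the CPUs' 300-watt TDP per rack for comparison with the stated rack power. That comparison is our own illustration: TDP is a design limit, not measured draw, and the article does not break down the rest of the rack's power budget. All variable names are chosen here for illustration.

```python
# Reproduce the rack-level figures from the per-chip numbers in the article.

CORES_PER_CHIP = 136
CHIPS_PER_BLADE = 2
CHIP_TDP_W = 300

# Air-cooled rack: 30 blades, 36 kW
AIR_BLADES = 30
AIR_RACK_KW = 36

# Liquid-cooled rack (Supermicro): 336 chips, 200 kW
LIQUID_CHIPS = 336
LIQUID_RACK_KW = 200

cores_per_blade = CORES_PER_CHIP * CHIPS_PER_BLADE
air_chips = AIR_BLADES * CHIPS_PER_BLADE
air_cores = AIR_BLADES * cores_per_blade
liquid_cores = LIQUID_CHIPS * CORES_PER_CHIP

# Sum of CPU TDP per rack in kW (illustrative only, not measured power).
air_cpu_tdp_kw = air_chips * CHIP_TDP_W / 1000
liquid_cpu_tdp_kw = LIQUID_CHIPS * CHIP_TDP_W / 1000

print(f"Cores per blade:        {cores_per_blade}")
print(f"Air-cooled rack cores:  {air_cores}")
print(f"Liquid-cooled cores:    {liquid_cores}")
print(f"Air rack CPU TDP:       {air_cpu_tdp_kw:.1f} kW of {AIR_RACK_KW} kW")
print(f"Liquid rack CPU TDP:    {liquid_cpu_tdp_kw:.1f} kW of {LIQUID_RACK_KW} kW")
```

The results match the article: 272 cores per blade, 8,160 cores in the air-cooled rack, and 45,696 cores (reported as "over 45,000") in the liquid-cooled one. In both cases the summed CPU TDP comes to roughly half of the stated rack power.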

Meta is collaborating on development of the Arm AGI CPU as both lead partner and customer. The chip will be used in combination with Meta's proprietary custom accelerator, MTIA, to optimize the gigawatt-scale infrastructure supporting its suite of applications. Alexis Bjornin, Vice President of Infrastructure at Meta, said, 'We are honored that Meta is the lead partner for the Arm AGI CPU,' and expressed the expectation that combining it with Meta's next-generation MTIA accelerator will maximize performance and efficiency across the company's entire gigawatt-scale data center footprint.

OpenAI stated, 'OpenAI operates AI systems at scale. ChatGPT is used by hundreds of millions of people every day, companies build services on our APIs, and developers use tools like Codex. Arm AGI CPUs are expected to play a crucial role as our infrastructure expands, strengthening the orchestration layer to manage large-scale AI workloads and improving overall system efficiency, performance, and bandwidth.'

Cloudflare stated, 'To continue our mission of helping build a better internet, Cloudflare needs infrastructure that can scale efficiently across our global network. Arm AGI CPUs provide high-performance, power-efficient compute designed for next-generation workloads.'

AI company Cerebras says that Arm AGI CPUs have the potential to fundamentally change how AI workloads are run in data centers, and emphasizes that integrating its AI supercluster with Arm's new CPUs will enable AI training and inference at unprecedented scale and speed.

NVIDIA CEO Jensen Huang commented, 'Accelerated computing hasn't made CPUs obsolete; rather, it's made them an essential partner. The Arm architecture is the foundation of all our platforms. From Jetson for robotics, Drive for autonomous driving systems, the BlueField DPU, to the Vera CPU, Arm is used extensively. These systems we're building wouldn't be possible without the flexibility to shape, adjust, and modify the Arm ecosystem and Arm platform. This adaptability and modifiability of Arm has enabled us to integrate it into all our platforms.'
