"Retrieval-Augmented Generation (RAG)" brings accuracy, faster knowledge updates, and answer transparency to large language models (LLMs)

2023 was the year in which chat AI such as ChatGPT, Google's Bard, and Microsoft's Bing AI rapidly became popular. The large language models (LLMs) underlying such chat AI suffer from problems such as hallucinations, slow knowledge updates, and a lack of transparency in their answers. RAG is a technology intended to solve these problems.

[2312.10997] Retrieval-Augmented Generation for Large Language Models: A Survey

https://arxiv.org/abs/2312.10997

GitHub - Tongji-KGLLM/RAG-Survey

https://github.com/Tongji-KGLLM/RAG-Survey

LLMs, which underlie rapidly spreading chat AI such as ChatGPT, are extremely powerful, but in practical applications they are often criticized for "hallucination," in which they output content that differs from reality. AI hallucination has become such a hot topic that "hallucinate" was selected as the Word of the Year 2023 by the Cambridge Dictionary, published by the University of Cambridge.

There has also been a case in which ChatGPT hallucinated a non-existent legal precedent, and a lawyer who cited it without realizing this was ordered to pay a fine.

Lawyer who adopted non-existent past precedent fabricated by ChatGPT is ordered to pay $5,000 - GIGAZINE

It has also been pointed out that Amazon's AI chat service "Amazon Q" is leaking sensitive data, such as the location of AWS data centers, due to hallucinations.

It has been pointed out that Amazon's AI 'Amazon Q' is leaking sensitive data such as the location of AWS data centers due to severe hallucinations - GIGAZINE

For this reason, AI researchers are working on countermeasures, such as developing evaluation models that can objectively assess the risk of an LLM hallucinating, and creating the LLM "Gorilla," which can significantly reduce the incidence of hallucinations.

In addition, LLMs have problems such as slow knowledge updates and a lack of transparency in their answers. In an LLM, the number of parameters generally reflects the complexity of the model. Increasing the number of parameters can yield high performance on complex tasks, but it also increases the time and computational resources required for training, and the model may overfit its data or become a black box.

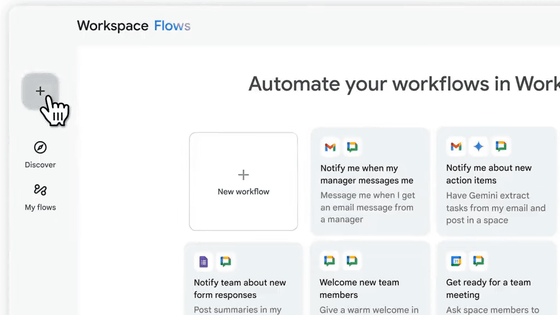

"Retrieval-Augmented Generation (RAG)" was developed to solve these problems faced by LLMs. RAG is based on the idea of retrieving relevant information from an external knowledge base before the LLM answers a prompt (question). In other words, it adds a retrieval-based function to a generation-based LLM to compensate for its shortcomings.
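The retrieve-then-generate idea described above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions, not the method of any particular RAG system: the knowledge base contents, the bag-of-words cosine similarity used for retrieval, and the names `retrieve`, `build_prompt`, and `answer` are all hypothetical, and `llm` stands for any callable that takes a prompt string and returns text.

```python
import math
import re
from collections import Counter

# Hypothetical toy knowledge base; a real system would query an external document store.
KNOWLEDGE_BASE = [
    {"id": "doc1", "text": "RAG retrieves relevant passages from an external knowledge base before generation."},
    {"id": "doc2", "text": "The Cambridge Dictionary chose hallucinate as its word of the year for 2023."},
    {"id": "doc3", "text": "Increasing the number of parameters raises training time and compute cost."},
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question, docs, k=2):
    """Return the k documents most similar to the question (assumed scoring scheme)."""
    q = Counter(tokenize(question))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d["text"]))), reverse=True)
    return ranked[:k]

def build_prompt(question, passages):
    """Place the retrieved passages in front of the question, tagged with their ids."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the context below and cite the ids you used.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

def answer(question, docs, llm):
    """Retrieve first, then generate; also return the ids of the passages that were supplied."""
    passages = retrieve(question, docs)
    return llm(build_prompt(question, passages)), [p["id"] for p in passages]
```

Calling `answer("What does RAG retrieve before generation?", KNOWLEDGE_BASE, llm)` with a real model client in place of `llm` would send the model a prompt that already contains the most relevant passages, which is the step that distinguishes RAG from plain generation.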

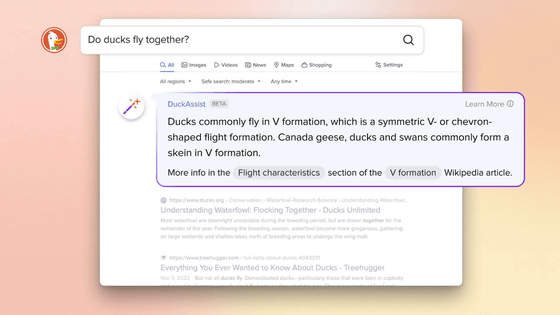

Regarding RAG, semiconductor giant NVIDIA explains that it "gives the model sources that can be cited like footnotes in a research paper." By using RAG, sources (citations) drawn from external databases can be attached to LLM output, increasing the accuracy and transparency of answers. A research team led by Yunfan Gao, which studies RAG, states that RAG "has been proven to significantly improve answer accuracy in knowledge-intensive tasks using LLMs, while also reducing the incidence of hallucinations."

Additionally, because users can see the sources behind the answers output by the LLM, they can verify the validity of those answers, which in turn increases the reliability of the LLM's output. And since external databases can be used, it is easy to update the LLM's knowledge base and to introduce domain-specific knowledge to the LLM.
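As a rough illustration of these two points, the sketch below shows a knowledge base that is updated simply by appending a passage (no model retraining) and an answer that is returned together with the sources it was based on. The class and function names, the file name `memo-2024.txt`, and the word-overlap ranking are hypothetical choices made for this example, not part of any specific RAG implementation.

```python
from dataclasses import dataclass, field

@dataclass
class Passage:
    source: str  # where the text came from (hypothetical file name or URL)
    text: str

@dataclass
class KnowledgeBase:
    passages: list = field(default_factory=list)

    def add(self, source, text):
        """Updating knowledge means appending a passage; the model itself is untouched."""
        self.passages.append(Passage(source, text))

    def search(self, query, k=1):
        """Return up to k passages that share at least one word with the query."""
        words = set(query.lower().split())

        def overlap(p):
            return len(words & set(p.text.lower().split()))

        ranked = sorted(self.passages, key=overlap, reverse=True)
        return [p for p in ranked[:k] if overlap(p) > 0]

def with_citations(answer_text, passages):
    """Append a numbered source list so the reader can check the answer."""
    notes = "\n".join(f"[{i}] {p.source}" for i, p in enumerate(passages, 1))
    return f"{answer_text}\n\nSources:\n{notes}"

kb = KnowledgeBase()
before = kb.search("warehouse hours")  # nothing is known yet
kb.add("memo-2024.txt", "warehouse hours are ten to six")
after = kb.search("warehouse hours")   # the new passage is retrievable immediately
print(with_citations("Ten to six.", after))
```

In a full system the answer text would come from the LLM rather than being hard-coded; the point here is only that the retrievable knowledge and the cited sources live outside the model.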

The research team asserts that "RAG effectively combines the parameterized knowledge of LLMs with non-parameterized external knowledge bases, making it one of the most important methods for implementing LLMs."

In addition, on the social news site Hacker News, RAG was mentioned in answers to the question "How can I train a custom LLM or ChatGPT with my own documentation?"

in Software, Posted by logu_ii