Leaked Google internal AI document says 'open source is a threat', 'the winner is Meta', and 'OpenAI doesn't matter'

From 2022 to 2023, competition over large language model (LLM) development intensified within a single year, with OpenAI announcing ``

Google 'We Have No Moat, And Neither Does OpenAI'

https://www.semianalysis.com/p/google-we-have-no-moat-and-neither

OpenAI, the developer of 'ChatGPT', which sent the popularity of conversational AI soaring, could be seen as the leader in that field, but the Google document states, 'Neither Google nor OpenAI is positioned to win the next arms race.' Its analysis is that while companies such as Google, OpenAI, and Meta compete for market share, the only real winner is open source.

In March 2023, the data for 'LLaMA', the large language model Meta had announced just the previous month, suddenly leaked onto the Internet and became available for anyone to download. Regarding this event, the document says, 'The community quickly understood the significance of what they had been given,' and points out that the pace of development exploded once an important model was in the public's hands.

Data of Meta's large-scale language model 'LLaMA-65B' leaked at 4chan - GIGAZINE

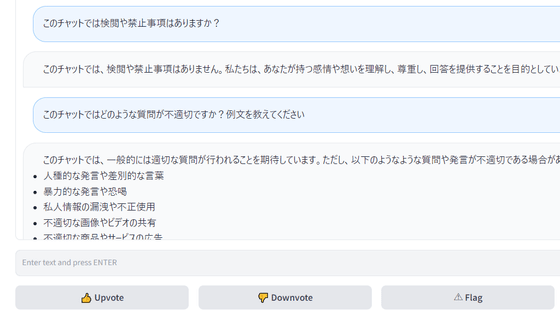

In addition, shortly after LLaMA-65B appeared, 'Vicuna-13B', an open source large language model said to outperform LLaMA, was released. The model achieves its quality by fine-tuning the LLaMA base model on data from 'ShareGPT', a browser extension for sharing ChatGPT conversations and prompts. In a response quality evaluation of several conversational AIs, with ChatGPT set at 100%, LLaMA scored 68% and Alpaca 7B scored 76%, while Vicuna-13B reached about 92%.

Japanese chat AI 'Vicuna-13B' with accuracy comparable to ChatGPT and Google's Bard has been released, so I tried using it - GIGAZINE

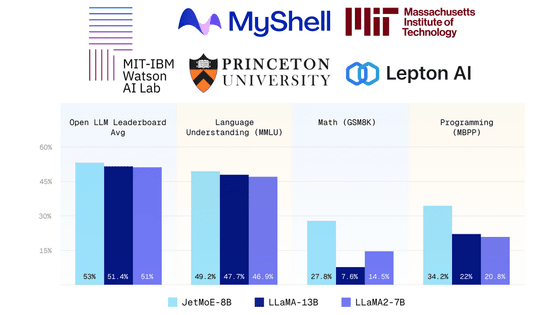

Regarding the emergence of such open source large language models, the document says, 'Our models still hold a slight edge in terms of quality, but the gap is closing surprisingly quickly. Open source models are faster, more customizable, more private, and more capable pound for pound. What we manage with $10 million and 540 billion parameters, they are doing with $100 and 13 billion parameters, as with Vicuna-13B, and they are doing it in weeks, not months. That means a lot to us.'

The document continues, 'By enabling public participation at low cost, open source has triggered a surge of ideas and iteration from individuals and organizations around the world, with a momentum that major corporations cannot match. The innovations that have fueled open source's recent success directly solve problems we are still struggling with. Paying more attention to their work could help us avoid reinventing the wheel.'

The effect of releasing a model as open source is particularly visible in the field of image generation, where innovations such as new user interfaces were born.

The document says, 'It remains to be seen whether the same thing will happen with LLMs, but the broad structural elements are the same. It's not going to pay. We should think about where our added value is. Our best hope is to learn from and collaborate with what others are doing outside of Google. We should prioritize enabling third-party integration,' arguing that the closed approach the company has taken so far should be reconsidered.

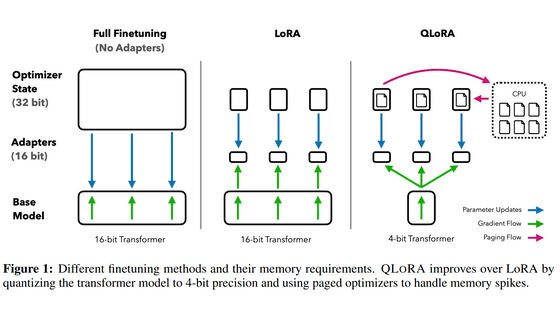

In addition to the threat of open source, the document argues that 'LoRA', a fine-tuning technique that adapts language models efficiently and at low cost, should not be ignored. It says, 'Despite having a direct impact on Google's most ambitious projects, this technique is underused within Google. Anyone with an idea can create and distribute an update, and the pace of improvement with these models far exceeds what we can do with our largest variants. The best are already almost indistinguishable from ChatGPT, and focusing on maintaining the largest models on the planet actually puts us at a disadvantage. Competing directly with open source is a losing proposition.'
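To illustrate why LoRA (Low-Rank Adaptation) is considered cheap, the following is a minimal sketch, not taken from the leaked document. It assumes the standard formulation in which the original weight matrix W is frozen and only two small matrices B and A are trained, with the update added as W + B·A. The function names, the 4096×4096 layer size, and the rank of 8 are illustrative assumptions.

```python
# Minimal pure-Python sketch of the LoRA idea (illustrative only).
# All names and sizes here are hypothetical, chosen for the example.

def full_finetune_params(d_out, d_in):
    """Trainable values when the whole d_out x d_in matrix is updated."""
    return d_out * d_in

def lora_params(d_out, d_in, rank):
    """Trainable values when only B (d_out x rank) and A (rank x d_in) are updated."""
    return rank * (d_out + d_in)

def matmul(a, b):
    """Plain nested-list matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def lora_effective_weight(w, b, a, scale=1.0):
    """Return W + scale * (B @ A); W itself is never modified."""
    delta = matmul(b, a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]

if __name__ == "__main__":
    # Assumed layer size and rank, for illustration.
    full = full_finetune_params(4096, 4096)
    low = lora_params(4096, 4096, rank=8)
    print(full, low, full // low)

    # Tiny worked example: 2x2 frozen weight, rank-1 update.
    w = [[1, 0], [0, 1]]
    b = [[1], [2]]      # 2 x 1
    a = [[3, 4]]        # 1 x 2
    print(lora_effective_weight(w, b, a))
```

Under these assumed sizes, the low-rank update has far fewer trainable values than the full matrix, and because only the small B and A matrices need to be shared, an update is easy to distribute, which is the property the document highlights.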

Whether to keep its technology secret or to open it up has always been a question for Google, but now that cutting-edge LLM research has become affordable, maintaining a competitive advantage in technology is getting harder. While it could choose to guard its secrets closely, Google appears to be weighing whether to take the open source route, in which parties can learn from one another.

Regarding Meta, whose model leaked, the document observes, 'It's paradoxical, but the clear winner is Meta.' This is because, even though the model was leaked, most open source innovation is happening on top of Meta's architecture, so Meta can incorporate that work directly into its products.

As for its competitor OpenAI, the document argues, 'OpenAI is making the same mistake as Google in its stance on open source.' Based on these observations, the document signals an intention to 'establish Google's position as a leader in the open source community and take the initiative by cooperating with, rather than ignoring, the discussion.'

Posted by log1p_kr