A memory-efficient AI model called '1-bit Bonsai' has appeared, packing 8 billion parameters into just 1.15GB of memory while matching or beating models that consume 14 times more memory.

AI models tend to perform better as the number of parameters grows, but there is a trade-off: more parameters mean more memory usage. '1-bit Bonsai,' announced by AI development company PrismML on March 31, 2026, runs a model with 8 billion parameters in a remarkably small 1.15GB of memory. It has also recorded benchmark scores that beat other models consuming approximately 14 times more memory, and it is attracting attention as a high-performance, memory-efficient AI model.

PrismML — Announcing 1-bit Bonsai: The First Commercially Viable 1-bit LLMs

1-bit Bonsai significantly reduces memory usage compared to models with the same number of parameters by applying a 1-bit design to every part of the network that makes up the model, including the 'embedding,' 'attention layer,' 'MLP layer,' and 'LM head.' Three versions, '1-bit Bonsai 8B,' '1-bit Bonsai 4B,' and '1-bit Bonsai 1.7B,' have already been released as open models that anyone can download and run.
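To give a rough sense of what storing a weight in 1 bit means, here is a minimal sketch of generic sign-based binarization: each weight keeps only its sign, eight signs are packed into one byte, and a single shared scale restores the magnitude. This is not PrismML's actual format, which the article does not describe; the function names `pack_signs` and `unpack_signs`, the mean-absolute-value scale, and the sample weights are all assumptions made for illustration.

```python
def pack_signs(weights):
    """Pack one sign bit per weight (1 = non-negative, 0 = negative), 8 per byte."""
    packed = bytearray((len(weights) + 7) // 8)
    for i, w in enumerate(weights):
        if w >= 0:
            packed[i // 8] |= 1 << (i % 8)
    return bytes(packed)


def unpack_signs(packed, n, scale):
    """Rebuild n approximate weights as +scale or -scale from the packed bits."""
    return [scale if (packed[i // 8] >> (i % 8)) & 1 else -scale for i in range(n)]


# Hypothetical sample weights, chosen only to exercise the functions.
weights = [0.4, -0.2, 0.1, -0.7, 0.3, 0.05, -0.6, 0.9, -0.1, 0.2]

# One shared scale for the whole group (mean absolute value is a common choice
# in binarized networks; it is an assumption here, not Bonsai's documented method).
scale = sum(abs(w) for w in weights) / len(weights)

packed = pack_signs(weights)
restored = unpack_signs(packed, len(weights), scale)

print(f"{len(weights)} weights stored in {len(packed)} bytes, scale = {scale:.3f}")
print(restored)
```

In this sketch the ten weights occupy two bytes plus one scale value, whereas a 16-bit representation would need twenty bytes. The small overhead of such scale values is one plausible reason a real 1-bit model ends up slightly above exactly 1 bit per parameter.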

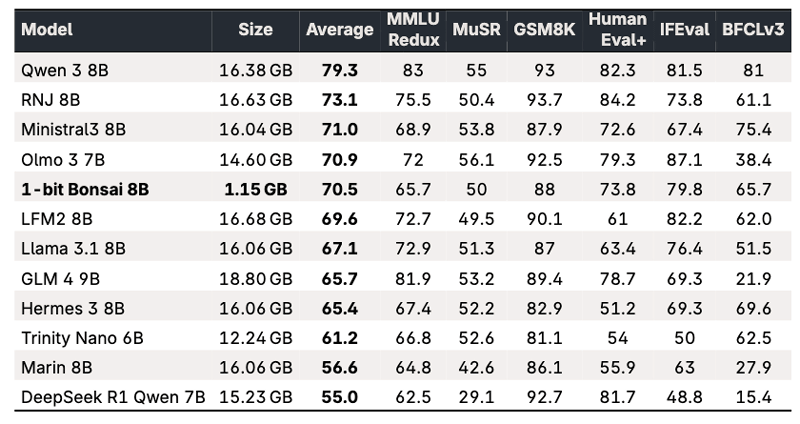

The table below summarizes the memory usage and various benchmark results of 1-bit Bonsai 8B and its competitors. While models with 8 billion parameters typically require around 16GB of memory at 16-bit precision, 1-bit Bonsai 8B keeps it down to just 1.15GB. Despite its low memory usage, it maintains performance, achieving higher scores than Llama 3.1 8B and GLM 4 9B.

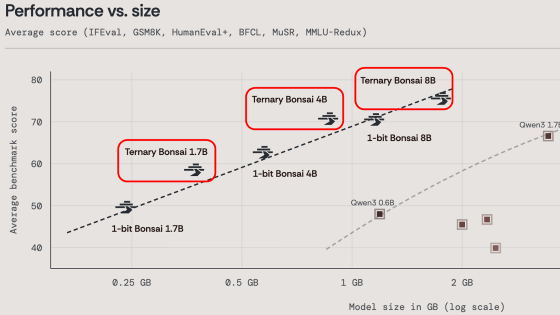

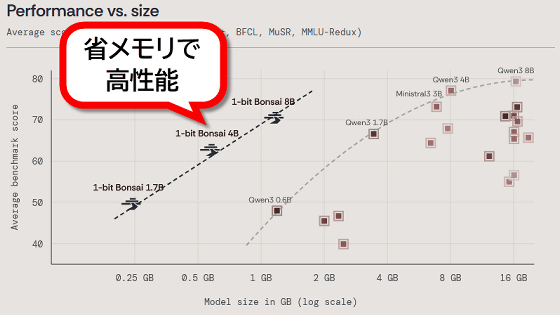

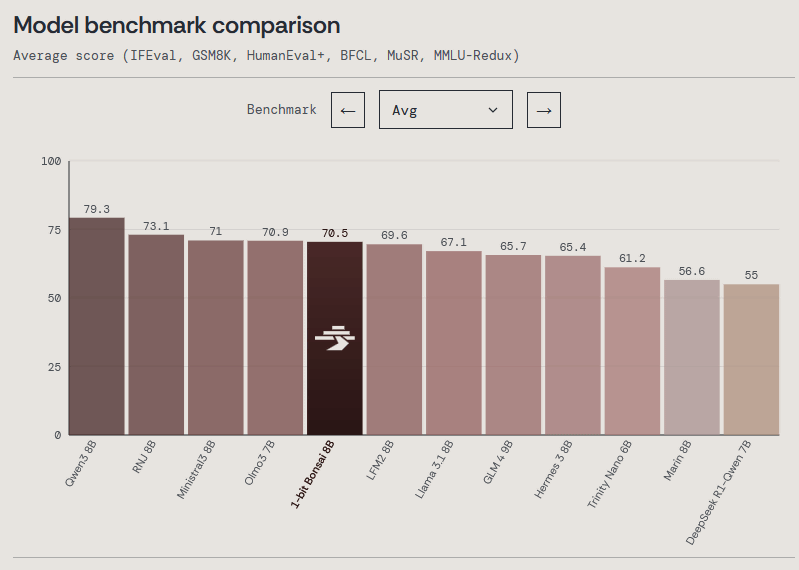

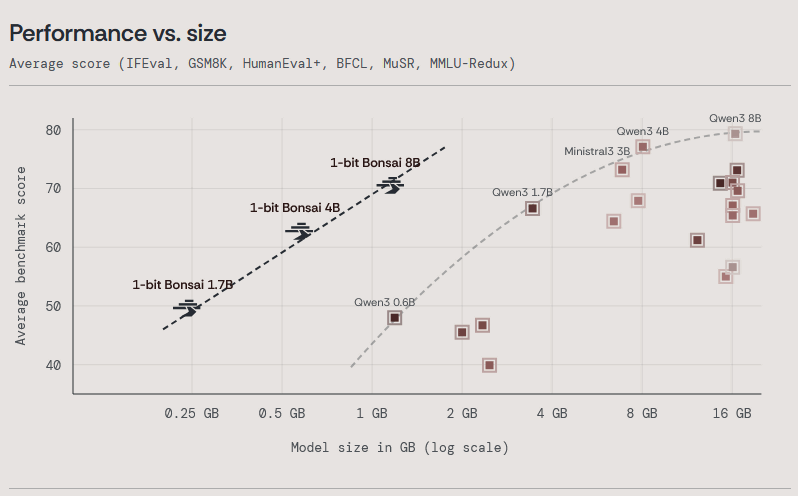

The graph below shows the average benchmark test results. It is clear that the 1-bit Bonsai 8B has comparable performance to competing models.

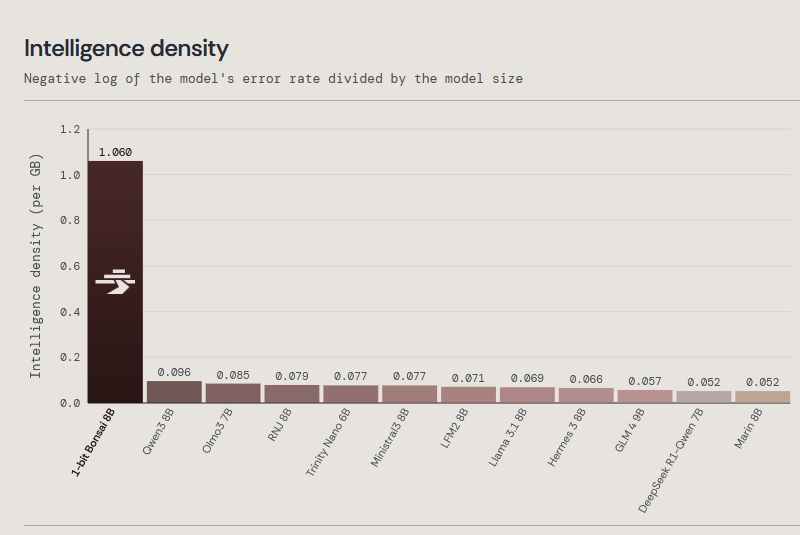

The graph below compares the performance per 1GB of memory. It's immediately clear that the 1-bit Bonsai 8B is a model with superior memory efficiency.

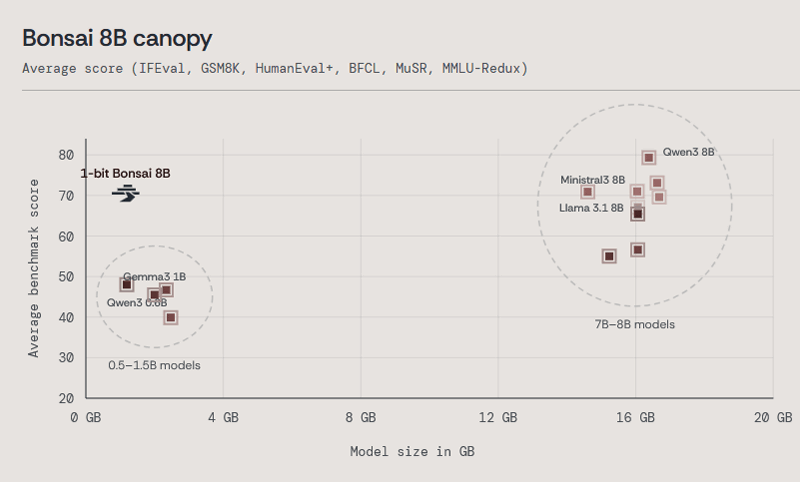

The graph below plots the memory usage (horizontal axis) and benchmark score (vertical axis) of various models. The 1-bit Bonsai 8B achieves performance equivalent to models with around 8 billion parameters while using the same amount of memory as models with around 1 billion parameters.

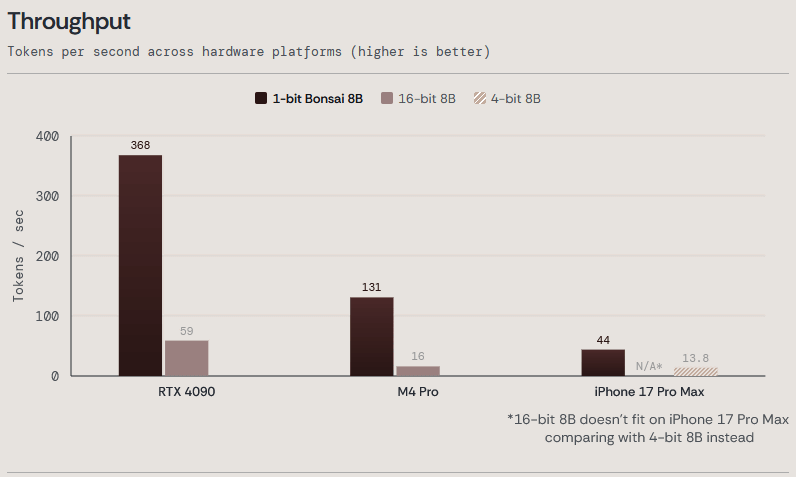

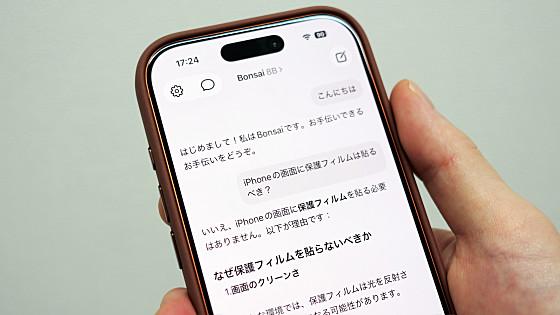

1-bit Bonsai 8B can run not only on PCs with RTX 4090 and Macs with M4 Pro, but also on the iPhone 17 Pro Max.

The following is a demo video showing an iPhone 17 Pro running 1-bit Bonsai 8B and a competing model with 1 billion parameters as they solve the same computational problem. 1-bit Bonsai 8B runs faster and produces more accurate output.

The 1-bit Bonsai 4B and 1-bit Bonsai 1.7B also use significantly less memory than comparable models, with the 1-bit Bonsai 4B using 0.57GB of memory and the 1-bit Bonsai 1.7B using 0.24GB.
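The memory figures quoted in this article can be sanity-checked with simple arithmetic. The sketch below assumes the nominal parameter counts (8 billion, 4 billion, and 1.7 billion), treats 1GB as 10^9 bytes, and counts weights only; the helper names `weights_gb` and `effective_bits` are made up for this example, and the real models' exact parameter counts may differ slightly.

```python
def weights_gb(n_params, bits_per_param):
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


def effective_bits(n_params, reported_gb):
    """Average bits per parameter implied by a reported memory figure."""
    return reported_gb * 1e9 * 8 / n_params


# (nominal parameter count, memory in GB as reported in the article)
MODELS = {
    "1-bit Bonsai 8B": (8e9, 1.15),
    "1-bit Bonsai 4B": (4e9, 0.57),
    "1-bit Bonsai 1.7B": (1.7e9, 0.24),
}

for name, (n_params, reported_gb) in MODELS.items():
    fp16_gb = weights_gb(n_params, 16)  # same parameter count at 16 bits per weight
    bits = effective_bits(n_params, reported_gb)
    ratio = fp16_gb / reported_gb
    print(f"{name}: 16-bit = {fp16_gb:.2f}GB, reported = {reported_gb}GB, "
          f"about {bits:.2f} bits/param, {ratio:.1f}x smaller")
```

Under these assumptions, an 8-billion-parameter model at 16 bits per weight comes to 16GB, which is roughly 14 times the 1.15GB reported for 1-bit Bonsai 8B, and all three models work out to a little over 1 bit per parameter.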

The 1-bit Bonsai series can be downloaded from the following link. The license is Apache License 2.0.

Bonsai - a prism-ml Collection

https://huggingface.co/collections/prism-ml/bonsai

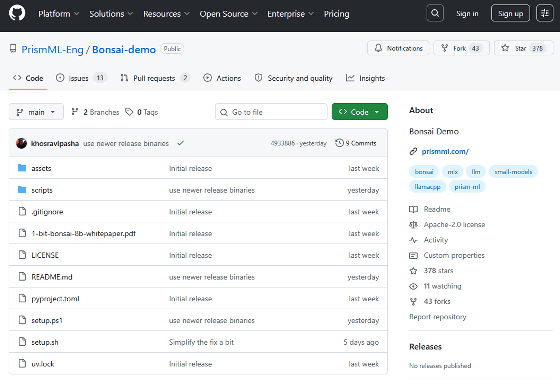

Furthermore, various information is available on GitHub.

GitHub - PrismML-Eng/Bonsai-demo: Bonsai Demo · GitHub

https://github.com/PrismML-Eng/Bonsai-demo/

1-bit Bonsai can also be run with the iPhone app Locally AI. We will soon publish a review article showing how to run 1-bit Bonsai with Locally AI.

- Continued

I tried running the AI model '1-bit Bonsai 8B' with 8 billion parameters locally on my iPhone 17 Pro. It's easy to run using the free app Locally AI. - GIGAZINE

in AI, Posted by log1o_hf