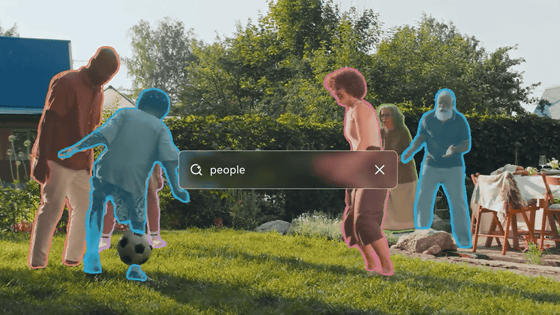

Meta has released 'SAM 3.1,' an update to 'SAM 3,' its AI model for cutting out objects in images and videos, that tracks multiple objects more efficiently.

In November 2025, Meta announced 'Meta Segment Anything Model 3 (SAM 3),' an AI model for detecting, segmenting, and identifying objects in images and videos. On March 27, 2026 (local time), Meta released 'SAM 3.1,' a version of SAM 3 that is more efficient at tracking multiple objects.

We're releasing SAM 3.1: a drop-in update to SAM 3 that introduces object multiplexing to significantly improve video processing efficiency without sacrificing accuracy.

pic.twitter.com/xfMaBdSkqV — AI at Meta (@AIatMeta) March 27, 2026

We're sharing this update with the community to help make high-performance applications feasible on smaller,…

SAM 3.1: Faster and More Accessible Real-Time Video Detection and Tracking With Multiplexing and Global Reasoning

https://ai.meta.com/blog/segment-anything-model-3/

SAM 3 is a unified model that can detect, segment, and track objects in images and videos based on prompts. For example, you can extract objects from images and videos by entering a short text phrase such as 'dog' or 'yellow school bus,' or by pointing to an object in a video, either by drawing a box around it or by clicking on it.

Meta announces 'SAM 3,' an AI model that identifies and cuts out objects in videos - GIGAZINE

On March 27, Meta released SAM 3.1, an improved version of SAM 3. SAM 3.1 introduces 'object multiplexing,' which Meta says significantly improves video processing efficiency without sacrificing accuracy.

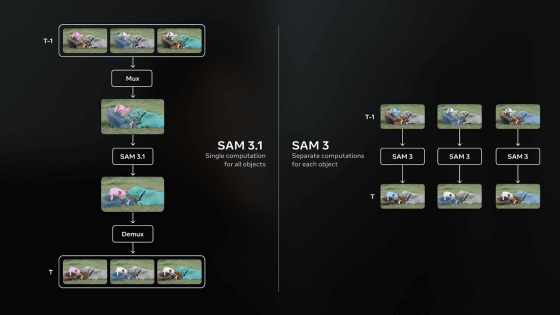

Object multiplexing allows SAM 3.1 to track up to 16 objects in a single forward pass. Previously, each tracked object required its own separate pass through the model; SAM 3.1 instead processes the tracked objects together, eliminating redundant computation and the bottlenecks it created.
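To make the difference concrete, here is a back-of-envelope Python sketch that counts forward passes per frame under two simplified models: one pass per tracked object (the earlier SAM 3 behavior described above) and up to 16 objects per pass (SAM 3.1), assuming objects beyond 16 are split into further groups. The names `group_objects`, `passes_per_frame`, and `MAX_OBJECTS_PER_PASS` are hypothetical and not part of Meta's code. The sketch ignores work shared by all objects, such as encoding each frame, so it counts passes rather than predicting actual speedups.

```python
import math
from typing import List, Sequence, TypeVar

T = TypeVar("T")

# Assumption: multiplexing capacity as reported by Meta (16 objects per pass).
MAX_OBJECTS_PER_PASS = 16


def group_objects(object_ids: Sequence[T], capacity: int = MAX_OBJECTS_PER_PASS) -> List[List[T]]:
    """Split tracked objects into groups that each fit in one forward pass (hypothetical helper)."""
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    return [list(object_ids[i:i + capacity]) for i in range(0, len(object_ids), capacity)]


def passes_per_frame(num_objects: int, capacity: int) -> int:
    """Forward passes needed per frame if each pass handles up to `capacity` objects."""
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    return math.ceil(num_objects / capacity)


if __name__ == "__main__":
    for n in (1, 16, 17, 128):
        per_object = passes_per_frame(n, capacity=1)  # simplified SAM 3 model: one pass per object
        multiplexed = passes_per_frame(n, capacity=MAX_OBJECTS_PER_PASS)  # simplified SAM 3.1 model
        print(f"{n:>3} objects: {per_object:>3} passes vs {multiplexed} passes")
```

At 128 objects this simplified model gives 128 passes versus 8, which illustrates why the savings grow with the number of objects. The measured speedups Meta reports are smaller than these raw ratios, likely in part because per-frame work shared by all objects is not reduced by multiplexing.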

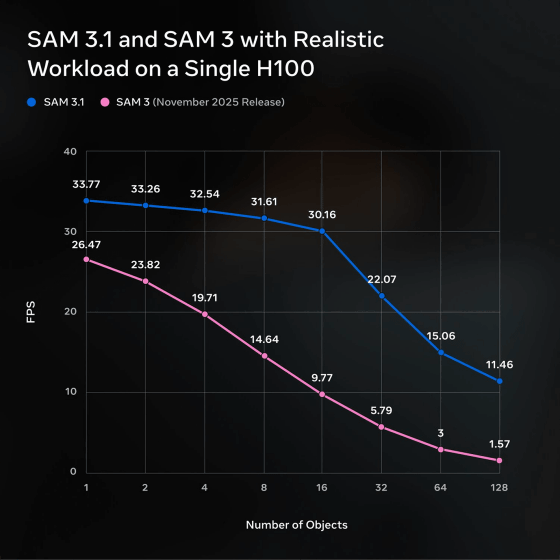

Meta explains, 'This approach doubles the processing speed for videos with a moderate number of objects, increasing throughput (frames processed per unit of time) on a single H100 GPU from 16 frames per second to 32 frames per second.'
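Throughput figures are easier to compare as time per frame. The short Python sketch below converts the two numbers Meta quotes into average milliseconds per frame (62.5 ms versus 31.25 ms) and into the time needed to process a clip. The function names and the 60-second, 30 fps example clip are hypothetical, chosen only for illustration.

```python
def ms_per_frame(fps: float) -> float:
    """Average wall-clock time per frame implied by a throughput figure in frames per second."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def seconds_to_process(num_frames: int, fps: float) -> float:
    """Time needed to process `num_frames` frames at a steady throughput of `fps`."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return num_frames / fps


if __name__ == "__main__":
    clip_frames = 30 * 60  # hypothetical clip: 60 seconds recorded at 30 fps
    for label, fps in (("SAM 3", 16.0), ("SAM 3.1", 32.0)):
        print(f"{label}: {ms_per_frame(fps):.2f} ms/frame, "
              f"{seconds_to_process(clip_frames, fps):.2f} s for the example clip")
```

Doubling throughput halves both numbers; at 32 frames per second, processing keeps pace with a 30 fps video stream, whereas at 16 frames per second it does not.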

The graph below plots throughput on a single H100 GPU (frames per second, vertical axis) against the number of tracked objects (horizontal axis). Comparing SAM 3 (pink line) with SAM 3.1 (blue line), the gap widens as the number of objects grows, and at 128 objects SAM 3.1 is roughly 7 to 8 times faster.

Checkpoints, including the SAM 3.1 model, are available for download on Hugging Face.

facebook/sam3.1 · Hugging Face

https://huggingface.co/facebook/sam3.1


in AI, Posted by log1h_ik