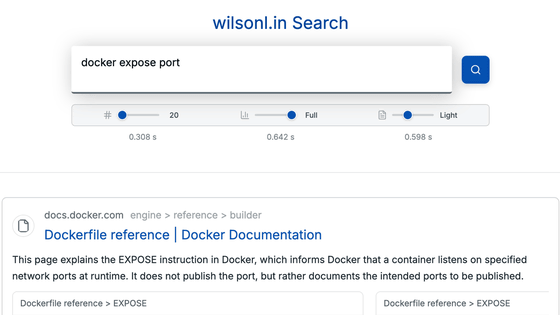

SentrySearch is a semantic search tool that extracts relevant sections from videos simply by entering keywords.

Finding videos that match a set of keywords is easy, but pulling out the single scene within a video that matches them is extremely difficult. A tool that uses AI to do exactly this has now been made public.

GitHub - ssrajadh/sentrysearch: Semantic search over videos using Gemini Embedding 2

https://github.com/ssrajadh/sentrysearch

SentrySearch performs semantic search, which takes the meaning and context of the query into account rather than matching keywords literally. It divides the video into segments of a specified number of seconds and indexes each segment using either Google's Gemini Embedding API or a local Qwen3-VL-Embedding model. At search time it returns the scenes that best match the query.
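The search step can be pictured as a nearest-neighbor lookup over the indexed segments. The sketch below is only an illustration of that idea, not SentrySearch's actual code: it assumes each segment and the query have already been turned into embedding vectors, and it ranks segments by cosine similarity, a common choice for embedding search. The names `cosine` and `rank_segments` and the toy two-dimensional vectors are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)


def rank_segments(query_vec, segment_vecs, top_k=3):
    """Return the top_k (segment_id, score) pairs, best match first.

    segment_vecs maps a segment id to its embedding (hypothetical layout).
    """
    scored = [(cosine(query_vec, vec), seg_id) for seg_id, vec in segment_vecs.items()]
    scored.sort(reverse=True)
    return [(seg_id, score) for score, seg_id in scored[:top_k]]


# Toy example: real embeddings have hundreds or thousands of dimensions.
segments = {"00:00-00:30": [1.0, 0.0], "00:25-00:55": [0.0, 1.0], "00:50-01:20": [1.0, 1.0]}
best = rank_segments([1.0, 0.0], segments, top_k=2)
```

In this toy example the first segment points in the same direction as the query and ranks first, followed by the segment that only partly overlaps it.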

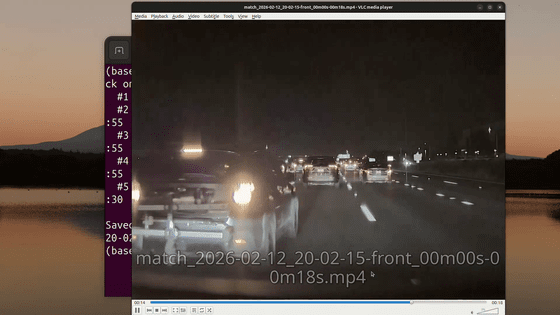

The demo video is below. It shows a scene that matches the search term 'a car with a bicycle carrier cut in front of me.'

'SentrySearch' searches for and extracts scenes from videos using natural language - YouTube

The two models mentioned above can process video directly, without intermediate steps such as captioning or transcription. Once the footage has been indexed this way, searching hours of video takes less than a second. Gemini extracts and tokenizes exactly one frame per second for processing.

Indexing a one-hour video using the Gemini Embedding API costs $2.84 (approximately 450 yen). Qwen3-VL-Embedding is free.
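For a rough sense of what longer footage might cost, the figure above can be scaled by duration. The sketch below assumes the cost grows linearly with video length, which the source does not state; `COST_PER_HOUR_USD` and `estimate_gemini_cost` are illustrative names, and actual billing may differ.

```python
# Figure quoted in the article for one hour of video with the Gemini Embedding API.
COST_PER_HOUR_USD = 2.84


def estimate_gemini_cost(hours):
    """Estimate indexing cost in USD, assuming cost scales linearly with duration."""
    if hours < 0:
        raise ValueError("hours must be non-negative")
    return COST_PER_HOUR_USD * hours


# Example: a 2.5-hour drive recording under the linear assumption.
estimate = estimate_gemini_cost(2.5)
```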

By default, the video is divided into 30-second segments, each overlapping the preceding and following segment by 5 seconds. If the moment you are searching for straddles two segments, the search may not work properly, and the developers suggest that 'this could be improved with more advanced scene detection.'
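The default segmentation can be sketched as a sliding window. This is a minimal illustration under the article's stated defaults (30-second segments, 5-second overlap), not the project's own implementation; the function name `segment_bounds` and the choice to shorten the final segment at the end of the video are assumptions.

```python
def segment_bounds(duration, chunk=30.0, overlap=5.0):
    """Return (start, end) times in seconds for overlapping segments.

    Each segment starts (chunk - overlap) seconds after the previous one,
    so consecutive segments share `overlap` seconds of footage.
    """
    if chunk <= 0 or not 0 <= overlap < chunk:
        raise ValueError("need chunk > 0 and 0 <= overlap < chunk")
    step = chunk - overlap
    bounds = []
    start = 0.0
    while start < duration:
        end = min(start + chunk, duration)  # clip the last segment to the video length
        bounds.append((start, end))
        if end >= duration:
            break
        start += step
    return bounds


# A 70-second clip yields three segments: 0-30, 25-55, and 50-70.
clip_segments = segment_bounds(70.0)
```

An event lasting from 28 to 33 seconds falls partly in the first segment and partly in the second, which is the boundary case the developers describe.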
