Meta explains what they paid attention to and how they approached training large-scale language models

Meta has published a blog post explaining how it trains large language models at scale.

How Meta trains large language models at scale - Engineering at Meta

https://engineering.fb.com/2024/06/12/data-infrastructure/training-large-language-models-at-scale-meta/

Meta has previously trained various AI models for recommendation systems on Facebook and Instagram, but these were small-scale models requiring relatively few GPUs, unlike the large-scale language models like the Llama series, which require massive amounts of data and GPUs.

◆Challenges in training large-scale language models

Training a large-scale language model differs from training traditional small-scale AI models, and Meta says it had to overcome the following four challenges:

- Hardware reliability: As the number of GPUs in a job increases, so does the chance that a hardware failure interrupts training, so hardware must be as reliable as possible. This requires rigorous testing and quality control, as well as automated processes for detecting and fixing issues.

- Rapid recovery in the event of a failure: Despite best efforts, hardware failures do occur, so it is important to be able to recover quickly, for example by reducing rescheduling overhead and reinitializing training fast.

- Efficiently saving training state: Training state should be checkpointed regularly and stored efficiently so that, after a failure, training can resume from the point where it was interrupted.

- Optimal connectivity between GPUs: Training large language models requires synchronizing and transferring large amounts of data between GPUs, which calls for a robust, high-speed network infrastructure and efficient data transfer protocols and algorithms.
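
The idea of saving training state regularly and resuming after a failure can be illustrated with a minimal sketch. This is not Meta's implementation: the function names (`save_checkpoint`, `load_latest_checkpoint`, `train`), the JSON file format, and the toy "model" (a running sum) are all assumptions made for illustration.

```python
import json
import os
import tempfile


def save_checkpoint(state, directory, step):
    """Atomically write the training state for `step` into `directory`."""
    os.makedirs(directory, exist_ok=True)
    final_path = os.path.join(directory, f"step_{step:08d}.json")
    # Write to a temporary file first so that a crash in the middle of a
    # write never leaves a truncated checkpoint under the final name.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump({"step": step, "state": state}, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp_path, final_path)  # atomic rename on the same filesystem
    return final_path


def load_latest_checkpoint(directory):
    """Return (step, state) from the newest checkpoint, or (0, None)."""
    if not os.path.isdir(directory):
        return 0, None
    names = sorted(n for n in os.listdir(directory)
                   if n.startswith("step_") and n.endswith(".json"))
    if not names:
        return 0, None
    with open(os.path.join(directory, names[-1])) as f:
        payload = json.load(f)
    return payload["step"], payload["state"]


def train(directory, total_steps, checkpoint_every, fail_at=None):
    """Toy training loop (hypothetical): the 'model' is just a running sum."""
    step, state = load_latest_checkpoint(directory)
    total = state["total"] if state else 0
    while step < total_steps:
        if fail_at is not None and step == fail_at:
            raise RuntimeError("simulated hardware failure")
        step += 1
        total += step
        if step % checkpoint_every == 0:
            save_checkpoint({"total": total}, directory, step)
    return total
```

Calling `train` again on the same directory after a simulated failure picks up from the last saved step instead of starting over. Only the work done since that checkpoint is repeated, which is the trade-off behind choosing how often to checkpoint.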

◆Infrastructure Innovation

Meta says that overcoming the challenges of large-scale language models required improvements to its entire infrastructure. For example, Meta enables researchers to use the machine learning library

Of course, to provide the computing power required for training large-scale language models, it is also essential to optimize the configuration and attributes of high-performance hardware for generative AI. Even when deploying the constructed GPU cluster in a data center, it is difficult to change the power and cooling infrastructure within the data center, so trade-offs and optimal layouts for other types of workloads must be considered.

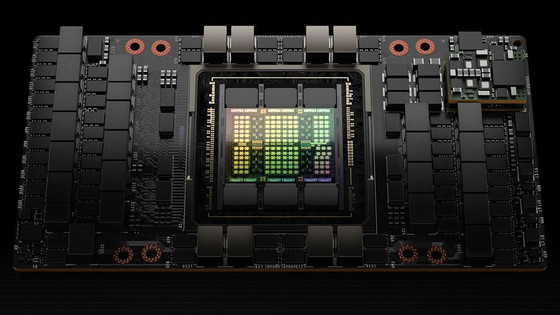

Meta has built a GPU cluster equipped with 24,576 NVIDIA H100 GPUs and revealed that it is using it to train large-scale language models, including Llama 3.

Meta releases information about a GPU cluster equipped with 24,576 NVIDIA H100 GPUs and used to train models such as Llama 3 - GIGAZINE

◆Reliability issues

To minimize downtime when hardware fails, it is necessary to plan ahead for how problems will be detected and fixed. The number of failures scales with the size of the GPU cluster, and a job that spans the whole cluster needs spare capacity held in reserve so that it can be restarted as soon as possible. The three most common failures observed by Meta are:

- GPUs falling off: The host stops detecting a GPU over PCIe. There are several possible causes, but this failure is seen most often in the early life of a GPU cluster and subsides as the servers age.

- Uncorrectable errors in DRAM and SRAM: Uncorrectable errors are common in memory, so they must be monitored constantly, and a replacement should be requested from the vendor once a threshold is exceeded.

- Network cable problems: Network cable problems, which leave servers unreachable, are also most common in the early stages of a server's lifespan.
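
To make the point about failures scaling with cluster size concrete, here is a rough back-of-the-envelope sketch. It assumes that GPUs fail independently at a constant rate, which is a simplification, and the per-GPU figure of 50,000 hours between failures is a made-up number for illustration, not one reported by Meta.

```python
import math


def expected_failures(num_gpus, gpu_mtbf_hours, job_hours):
    """Expected number of GPU failures during a job, assuming independent
    failures at a constant per-GPU rate of 1 / gpu_mtbf_hours."""
    return num_gpus * job_hours / gpu_mtbf_hours


def prob_uninterrupted(num_gpus, gpu_mtbf_hours, job_hours):
    """Probability that no GPU fails during the job (same assumptions)."""
    return math.exp(-expected_failures(num_gpus, gpu_mtbf_hours, job_hours))


def cluster_mtbf_hours(num_gpus, gpu_mtbf_hours):
    """Mean time between failures for the job as a whole."""
    return gpu_mtbf_hours / num_gpus


if __name__ == "__main__":
    GPU_MTBF_HOURS = 50_000  # hypothetical value, for illustration only
    for n in (8, 4_000, 24_576):
        print(n,
              round(cluster_mtbf_hours(n, GPU_MTBF_HOURS), 2),
              round(prob_uninterrupted(n, GPU_MTBF_HOURS, 24), 4))
```

Under these assumptions the time between failures for the whole job shrinks in proportion to the number of GPUs, which is why fast detection and reserved spare capacity matter more as clusters grow.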

◆Network

For transferring large amounts of data between GPUs at high speed, there are two main options: RDMA over Converged Ethernet (RoCE) and InfiniBand. Meta had been using RoCE in production environments for four years, but the largest GPU cluster it had built with RoCE supported only 4,000 GPUs. Meanwhile, its research GPU clusters were built with InfiniBand and reached 16,000 GPUs, but they were not integrated into the production environment, nor were they built for the latest generation of GPUs or networks.

Faced with the choice of which technology to build its network on, Meta decided to build both: two clusters of approximately 24,000 GPUs each, one using RoCE and the other using InfiniBand, in order to accumulate operational experience with both networks and apply what it learns in the future.
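
To give a sense of the GPU-to-GPU synchronization traffic such a network carries, the sketch below simulates a ring all-reduce, a widely used way to sum gradients across workers. The article does not say which collective algorithm Meta uses, so treat this choice as an assumption. The function `ring_all_reduce` is a hypothetical illustration in plain Python with no real networking.

```python
def ring_all_reduce(buffers):
    """Simulate a sum all-reduce over a ring of workers.

    `buffers` holds one equal-length list of numbers per worker.
    Returns (reduced_buffers, elements_sent_per_worker).
    """
    n = len(buffers)
    length = len(buffers[0])
    if any(len(b) != length for b in buffers) or length % n != 0:
        raise ValueError("need equal-length buffers that split into n chunks")
    size = length // n                 # elements per chunk
    data = [list(b) for b in buffers]  # work on copies
    sent_per_worker = 0

    def step(chunk_for_rank, reduce):
        # Snapshot every outgoing chunk first, since all workers send at once.
        outgoing = []
        for r in range(n):
            c = chunk_for_rank(r) % n
            outgoing.append((c, data[r][c * size:(c + 1) * size]))
        # Each worker r delivers its chunk to its right-hand neighbour.
        for r, (c, payload) in enumerate(outgoing):
            dst = data[(r + 1) % n]
            for i, value in enumerate(payload):
                j = c * size + i
                dst[j] = dst[j] + value if reduce else value

    # Phase 1 (reduce-scatter): after n - 1 steps, worker r holds the
    # fully summed chunk (r + 1) % n.
    for s in range(n - 1):
        step(lambda r: r - s, reduce=True)
        sent_per_worker += size

    # Phase 2 (all-gather): the finished chunks circulate around the ring
    # until every worker has all of them.
    for s in range(n - 1):
        step(lambda r: r + 1 - s, reduce=False)
        sent_per_worker += size

    return data, sent_per_worker
```

In this scheme each worker sends 2 × (n − 1) chunks, which comes to a little under twice the buffer size no matter how many workers take part. That makes per-link bandwidth and latency, rather than any single central node, the limiting factors.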

◆Future outlook

Meta said, 'Over the next few years, we will use hundreds of thousands of GPUs to handle even larger amounts of data and deal with longer distances and latencies. We will adopt new hardware technologies, including new GPU architectures, and evolve our infrastructure.' Meta added that it will continue working to push the boundaries of AI.
