Is NVIDIA developing 'a super hot GPU with power consumption of 1000W'?

At the financial results presentation of Dell, one of the world's leading PC manufacturers, an executive remarked that "NVIDIA is developing a GPU with a power consumption of 1000W."

Exhibit 99.1 Earnings 8K Q4 FY24 - Q4 FY24 Financial Results Press Release.pdf

(PDF file)

Dell exec reveals Nvidia has a 1,000-watt GPU in the works • The Register

https://www.theregister.com/2024/03/05/nvidias_b100_gpu_1000w/

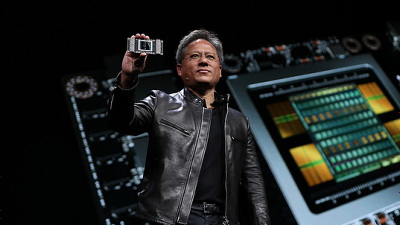

The remark that "NVIDIA is developing a GPU with a power consumption of 1000W" came from Vice Chairman Jeff Clarke, who spoke at Dell's fiscal 2024 fourth-quarter (Q4 FY24) financial results presentation on February 29, 2024.

Asked during the presentation, "What do you expect from GPUs for AI computing?", Vice Chairman Clarke replied, "We look forward to the improved performance of the H200, and we also look forward to the performance improvements of the B100 and B200," referring to a GPU named "B200" that NVIDIA has not yet announced. He continued, "Based on our evaluation of heat (generated during GPU processing), liquid cooling is not essential for GPUs operating at 1000W. That will be achieved with next year's B200." His comments suggest that the B200 will draw 1000W and that Dell is testing cooling methods other than liquid cooling for it.

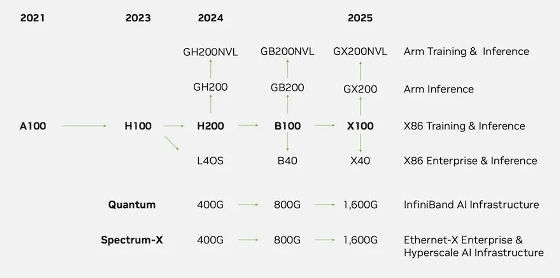

The figure below is the roadmap for AI computing GPUs that NVIDIA released to investors in October 2023. NVIDIA plans to release the "H200" and "B100" in 2024, but the "B200" mentioned by Vice Chairman Clarke does not appear on the roadmap. The Register points out that, based on NVIDIA's product lineup and roadmap, the "B200" he mentioned may refer to the "GB200." The Register also estimates, based on existing information and Clarke's remarks, that the GB200's TDP may reach around 1300W.

For comparison, the "H200," the AI computing GPU that NVIDIA plans to release in 2024, has a maximum power consumption of 700W and offers twice the inference speed of the H100.

NVIDIA announces GPU 'H200' for AI and HPC, inference speed is twice as fast as H100 and HPC performance is 110 times that of x86 CPU - GIGAZINE


in Hardware, Posted by log1o_hf