CEO of Stability AI, developer of the image generation AI "Stable Diffusion," publishes an open letter to the US Congress on the importance of open source in AI

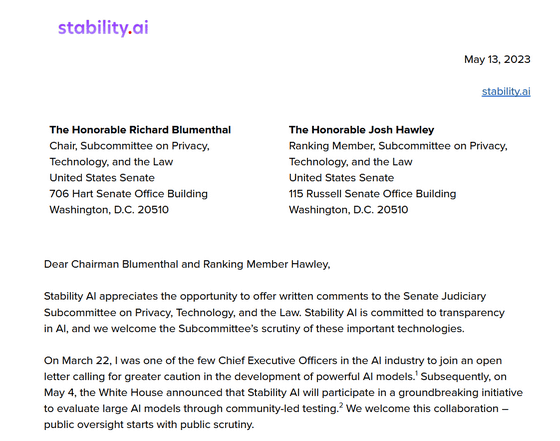

The Senate Judiciary Committee of the United States Congress held a hearing on AI oversight and regulation on May 16, 2023, calling OpenAI CEO Sam Altman and others as witnesses. To coincide with the hearing, Emad Mostaque, CEO of Stability AI, the developer of the image generation AI "Stable Diffusion," published an open letter making the case for the importance and significance of open source in AI.

Advocating for Open Models in AI Oversight: Stability AI's Letter to the United States Senate — Stability AI

https://ja.stability.ai/blog/stability-ai-letter-us-senate-ai-oversight

(PDF file) Stability AI - Dear Chairman Blumenthal and Ranking Member Hawley

Mostaque put forward "transparency," "accessibility," and "human-centeredness" as Stability AI's principles. Developing transparent, open models lets researchers validate performance and identify potential risks, and lets the public and private sectors customize models to meet their own needs, Mostaque claims.

Mostaque also said the company will develop efficient models that are accessible to everyone, including individual developers, small and medium-sized enterprises, and independent creators, rather than only to large corporations, so that critical technology depends less on a handful of companies and a more equitable digital economy can be built. Furthermore, he said, "We should build models that support their human users," arguing that the aim is not to pursue god-like intelligence in AI but to develop practical AI capabilities that can be applied to everyday tasks, improving creativity and productivity.

Mostaque also says that opening up the datasets used for AI training will help ensure the safety of AI and data, that large companies and public institutions will be able to develop their own custom models, and that open sourcing will lower barriers to entry and promote innovation and free competition in AI.

Mostaque acknowledges the risk that, because of biases and errors in training data, AI may omit important context or reflect biased data. However, he warns that excessive regulation could eliminate open innovation, which is essential for AI transparency, competitiveness, and national resilience.

Regarding the risks posed by AI, Mostaque said, "There is no silver bullet to deal with all risks," and called on the Judiciary Committee to consider the following five proposals.

1: Larger models risk being misused by malicious nations and organizations. Because AI training and inference require significant computational resources, cloud computing service providers should be encouraged to consider disclosure or audit policies for reporting when large-scale training or inference is performed.

2: Powerful models may require safeguards to prevent serious misuse. This could be achieved by reviewing operational security and information security guidelines, and through public oversight in the form of open, independent evaluations by authorities.

3: Consider disclosure requirements for application developers and privacy obligations that require user consent before data is collected for AI training. Also consider minimum performance requirements for AI used in finance, medical care, and law.

4: Facilitate adoption of content authenticity standards by providers of AI services and applications, and encourage “intermediaries” such as social media platforms to incorporate content moderation systems.

5: The US government should collaborate with researchers, developer communities, and industry to accelerate the development of AI model evaluation frameworks. Policymakers should also invest in public computing resources and experimental environments, and consider funding and procuring public foundation models. Public foundation models would be managed as public resources, subject to public scrutiny, trained on reliable data, and made available to national institutions.

Mostaque concluded, "Open models and open datasets will improve safety through transparency, foster competition in essential AI services, and help ensure that America maintains its strategic leadership in these critical technologies."

in Software, Posted by log1i_yk