Engineer points out that Microsoft's "Bing," which integrates an upgraded version of ChatGPT, gave many wrong answers in its demo

Engineer Dmitri Brereton has pointed out that Microsoft's newly announced search engine "Bing," which integrates an upgraded version of the ChatGPT AI, gave several wrong answers during its demonstration.

Bing AI Can't Be Trusted - by Dmitri Brereton - DKB Blog

Demo of Microsoft's AI-Powered Bing Included Several Small Mistakes | PCMag

https://www.pcmag.com/news/demo-of-microsofts-ai-powered-bing-included-several-small-mistakes

On February 8, 2023, Microsoft announced the new Bing and Edge, which integrate an upgraded version of the AI behind ChatGPT. With this AI, Bing can summarize the information a user needs through conversation and present it in an easy-to-read format. The following article describes in detail what the new Bing can do.

Microsoft announces new search engine Bing and browser Edge integrating ChatGPT's upgraded AI - GIGAZINE

A video of the demonstration held after the announcement of the new Bing has been published on YouTube.

Introducing your copilot for the web: AI-powered Bing and Microsoft Edge-YouTube

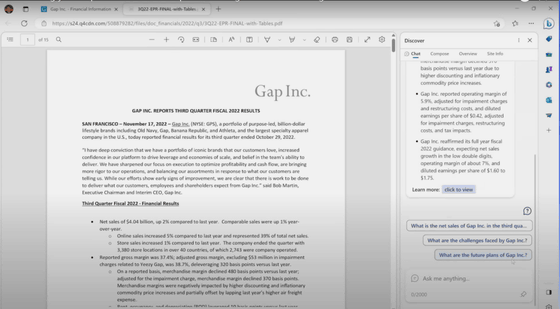

In the demonstration, Microsoft asked the new Bing to summarize apparel brand Gap's earnings report for the third quarter of 2022. Bing stated that Gap's operating margin for the period was "5.9%." However, the operating margin given in the actual earnings report is "4.6%," so the summary is clearly wrong.

In addition, Bing's summary stated that "Gap forecasts low double-digit net sales growth in the next quarter (Q4 2022)." Gap's earnings report, however, says that "net sales in the fourth quarter of 2022 may record a mid-single-digit decline compared to the same period last year," which shows that this part of the summary is completely incorrect as well.

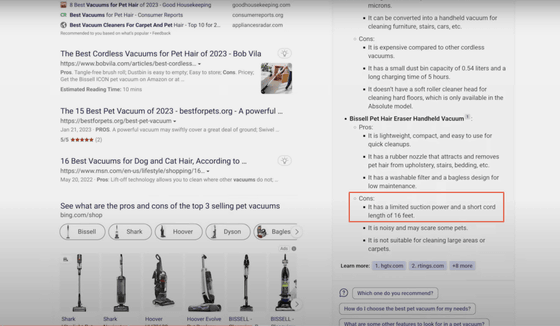

Brereton also points out that Bing's answer to the question asked at the beginning of the demonstration, "What are the advantages and disadvantages of the top three best-selling pet vacuum cleaners?", was incorrect. According to him, Bing's description of the "Bissell Pet Hair Eraser Handheld Vacuum," one of the pet vacuum cleaners it picked, contains an error.

Specifically, Bing cited "a short cord length of 16 feet (about 4.9 meters)" as a disadvantage of the Bissell Pet Hair Eraser Handheld Vacuum, but this vacuum cleaner is a cordless model designed to be easy to carry. In addition, although the query asked for the "top three best-selling" products, Bing appears to have listed the "most recommended" vacuum cleaners rather than the best-selling models.

Bing also made errors when asked to introduce nightlife in Mexico City. For example, in the demonstration Bing stated that a bar called Cecconi's Bar "has a website where you can make reservations and check the menu," but Brereton said that when he searched for "Cecconi's Bar" he could not find any such website.

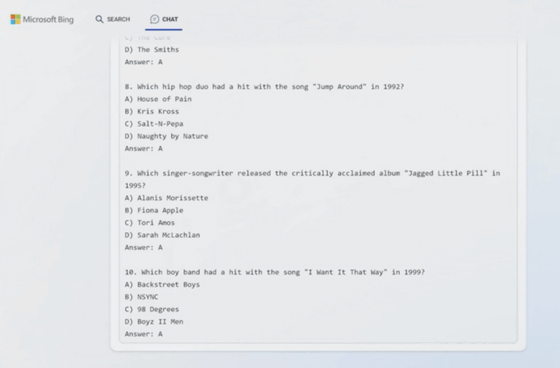

In the demonstration, Microsoft also promoted Bing's ability to create a quiz about 1990s music. Bing did generate a 10-question multiple-choice quiz, but Brereton points out that the correct answer to every question was "A," so "there is no variation."

PCMag reportedly asked Microsoft about the mistakes Bing made, but had not received a response at the time its article was written.

The Bing FAQ published by Microsoft also warns about possible inaccuracies: "Bing aims to base all answers on reliable sources, but AI can make mistakes, and third-party content may not always be accurate or reliable. Bing may misrepresent the information it finds, and may give responses that sound convincing but are incomplete, inaccurate, or inappropriate. Please use your own judgment and double-check the facts before making decisions or taking action based on Bing's answers."

Google's Bard, which was announced around the same time as Microsoft's Bing, also drew attention for giving an inaccurate answer at the time of its announcement.

Google's chat AI "Bard" gave an inaccurate answer, causing Google's market value to drop by more than 15 trillion yen - GIGAZINE

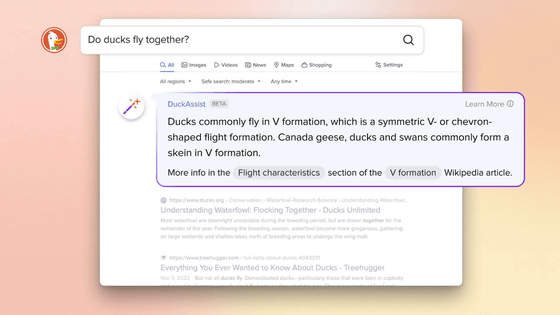

Others have also voiced skepticism about Bard and Bing as search tools that integrate chat AI, pointing out that "how they work is completely opaque, and if the language model fails, hallucinates, or spreads misinformation, the impact could be huge."


in Software, Web Service, Video, Posted by logu_ii