How to run the image generation AI 'Stable Diffusion' locally on a Mac with M1

The image generation AI 'Stable Diffusion' can be run locally on Macs with M1 and M2 chips.

Run Stable Diffusion on your M1 Mac's GPU - Replicate

https://replicate.com/blog/run-stable-diffusion-on-m1-mac

Replicate develops APIs for running open-source AI models in the cloud, including an API for running Stable Diffusion. On its official blog, Replicate also explains how to run Stable Diffusion locally on Macs with M1 and M2 chips.

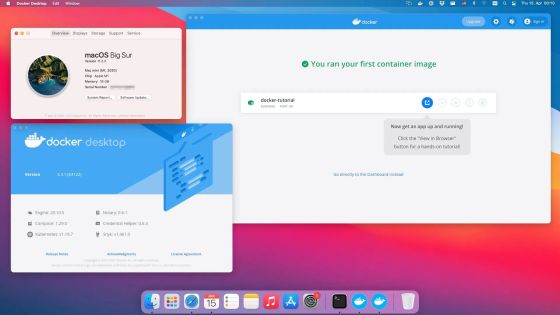

As prerequisites, you need a Mac with an M1 or M2 chip running macOS 12.3 or later. It does work with 8GB of RAM, but it is very slow, so 16GB or more is recommended if possible.
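These requirements can be checked from Terminal before going further. The following is a minimal sketch, not part of Replicate's guide: it assumes a bash shell and uses the standard macOS commands `uname -m`, `sw_vers -productVersion` and `sysctl -n hw.memsize`; the function names `version_ge` and `check_prereqs` are hypothetical. The version comparison is numeric per component, so 12.10 counts as newer than 12.3, which a plain string comparison would get wrong.

```shell
#!/usr/bin/env bash
# Sketch of a pre-flight check for the requirements above:
# Apple Silicon (arm64), macOS 12.3 or later, and ideally 16GB of RAM.

# Succeeds if dotted version $1 >= dotted version $2 (compares up to 3 parts).
version_ge() {
  local IFS=.
  local -a a=($1) b=($2)
  local i x y
  for i in 0 1 2; do
    x=${a[i]:-0}
    y=${b[i]:-0}
    if (( x > y )); then return 0; fi
    if (( x < y )); then return 1; fi
  done
  return 0
}

check_prereqs() {
  if [ "$(uname -s)" != "Darwin" ]; then
    echo "Not macOS: this guide needs an M1/M2 Mac." >&2
    return 1
  fi
  local fails=0 ver gb
  # Note: a Terminal running under Rosetta reports x86_64 even on M1/M2.
  if [ "$(uname -m)" = "arm64" ]; then
    echo "OK: Apple Silicon (arm64)"
  else
    echo "FAIL: $(uname -m) is not an M1/M2 Mac (or this shell runs under Rosetta)"
    fails=$((fails + 1))
  fi
  ver=$(sw_vers -productVersion)
  if version_ge "$ver" 12.3; then
    echo "OK: macOS $ver"
  else
    echo "FAIL: macOS $ver is older than 12.3"
    fails=$((fails + 1))
  fi
  # hw.memsize is the installed RAM in bytes.
  gb=$(( $(sysctl -n hw.memsize) / 1024 / 1024 / 1024 ))
  if [ "$gb" -ge 16 ]; then
    echo "OK: ${gb}GB RAM"
  else
    echo "Warning: ${gb}GB RAM works, but generation will be very slow"
  fi
  return "$fails"
}

check_prereqs || echo "Prerequisites not met; see the messages above." >&2
```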

First, Stable Diffusion requires Python 3.10 or later, so if you don't have it installed, install the latest version of Python with the package manager Homebrew.

brew update

brew install python
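After the Homebrew install, it is worth confirming that the `python3` found first on your PATH really is 3.10 or newer, since an older system `python3` in /usr/bin may shadow it. This is a hedged sketch; `python_ok` is a hypothetical helper name, and the check simply asks the interpreter for `sys.version_info`.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if the given interpreter (default: python3)
# runs and reports version 3.10 or newer.
python_ok() {
  local py=${1:-python3}
  "$py" -c 'import sys; sys.exit(0 if sys.version_info >= (3, 10) else 1)' 2>/dev/null
}

if python_ok python3; then
  echo "OK: $(python3 --version) at $(command -v python3)"
else
  echo "python3 is missing or older than 3.10." >&2
  # On Apple Silicon, Homebrew installs into /opt/homebrew/bin.
  echo "Check that /opt/homebrew/bin comes before /usr/bin in PATH." >&2
fi
```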

After installing the latest version of Python, clone the fork of Stable Diffusion with Apple Silicon (MPS) support and create a directory for the model.

git clone -b apple-silicon-mps-support https://github.com/bfirsh/stable-diffusion.git

cd stable-diffusion

mkdir -p models/ldm/stable-diffusion-v1/

Next, set up a virtual environment with virtualenv to install the dependencies into.

python3 -m pip install virtualenv

python3 -m virtualenv venv

Then activate it. Note that you need to run this command again in every new terminal session before running Stable Diffusion.

source venv/bin/activate
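Because activation has to be repeated in each new session, a small helper can activate the environment only when needed. This is a sketch with a hypothetical function name, `ensure_venv`; it must be defined in your current shell (pasted in, or `source`d from a file), because a separately executed script cannot change the calling shell's environment. It only checks that *some* virtualenv is active via `$VIRTUAL_ENV`, which virtualenv's activate script sets, not that it is this project's.

```shell
#!/usr/bin/env bash
# Sketch: activate ./venv (or the directory given as $1) unless a
# virtualenv is already active. Define it in the current shell.
ensure_venv() {
  local dir=${1:-venv}
  if [ -n "${VIRTUAL_ENV:-}" ]; then
    echo "Already active: $VIRTUAL_ENV"
    return 0
  fi
  if [ ! -f "$dir/bin/activate" ]; then
    echo "No $dir/bin/activate; run 'python3 -m virtualenv $dir' first" >&2
    return 1
  fi
  # shellcheck source=/dev/null
  source "$dir/bin/activate"
}
```

Usage, from the stable-diffusion directory: run `ensure_venv` before any of the commands below.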

Next, install the dependencies with pip, Python's package installer.

pip install -r requirements.txt

If you see an error like the following:

Failed building wheel for onnx

In that case, you may need to install the following packages with Homebrew.

brew install cmake protobuf rust
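The install-then-fix loop above can be scripted: keep pip's output in a log, and if the onnx wheel error appears, install the Homebrew packages and retry once. This is a sketch, not Replicate's procedure; the helper names `onnx_build_failed` and `install_deps` and the log name pip-install.log are hypothetical, and it assumes pip exits with a non-zero status when the onnx build ultimately fails.

```shell
#!/usr/bin/env bash
# Sketch: install requirements with a log, detect the onnx wheel failure,
# install the build tools via Homebrew, and retry once.
PIP_LOG=${PIP_LOG:-pip-install.log}   # hypothetical log file name

# True if the given pip log contains the onnx wheel build error.
onnx_build_failed() {
  grep -q "Failed building wheel for onnx" "$1"
}

install_deps() {
  pip install -r requirements.txt 2>&1 | tee "$PIP_LOG"
  local status=${PIPESTATUS[0]}   # pip's exit status, not tee's
  if [ "$status" -eq 0 ]; then
    return 0
  fi
  if onnx_build_failed "$PIP_LOG"; then
    echo "onnx failed to build; installing cmake, protobuf and rust" >&2
    brew install cmake protobuf rust && pip install -r requirements.txt
    return
  fi
  return "$status"
}
```

Usage: with the virtualenv active, run `install_deps` from the stable-diffusion directory.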

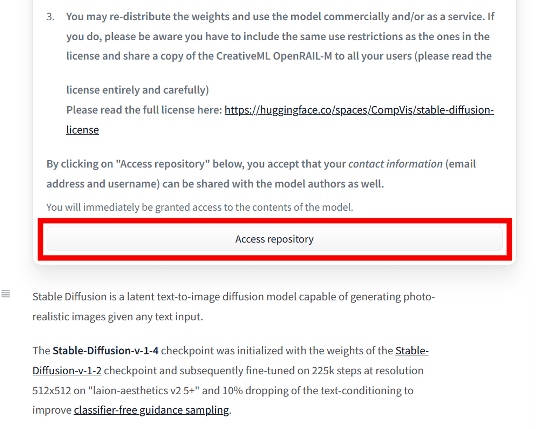

Next, go to the model's repository on Hugging Face, the open-source community for natural language processing and other AI, read the license, and click 'Access repository'.

Download 'sd-v1-4.ckpt' (about 4GB) and save it as 'model.ckpt' in the directory 'models/ldm/stable-diffusion-v1/' created earlier:

models/ldm/stable-diffusion-v1/model.ckpt
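A truncated 4GB download is an easy mistake to miss, so a quick size check before the first run can help. This sketch assumes the browser saved the file to ~/Downloads/sd-v1-4.ckpt (override with `SRC=...`) and is run from the stable-diffusion directory; `check_ckpt` is a hypothetical helper, and its 1GB floor is only a heuristic, not an official file size.

```shell
#!/usr/bin/env bash
# Sketch: move the downloaded checkpoint into place and sanity-check it.
SRC=${SRC:-$HOME/Downloads/sd-v1-4.ckpt}   # assumed download location
DEST=models/ldm/stable-diffusion-v1/model.ckpt

# Succeeds if file $1 exists and is at least $2 bytes (default 1e9).
check_ckpt() {
  local f=$1 min=${2:-1000000000} bytes
  if [ ! -f "$f" ]; then
    echo "Missing: $f" >&2
    return 1
  fi
  # macOS wc pads its output with spaces, so strip them.
  bytes=$(wc -c < "$f" | tr -d ' ')
  if [ "$bytes" -lt "$min" ]; then
    echo "Too small ($bytes bytes): $f may be an incomplete download" >&2
    return 1
  fi
  echo "OK: $f ($bytes bytes)"
}

# Move the file only if it was downloaded and nothing is in place yet.
if [ -f "$SRC" ] && [ ! -e "$DEST" ]; then
  mkdir -p "$(dirname "$DEST")"
  mv "$SRC" "$DEST"
fi
check_ckpt "$DEST" || echo "Place sd-v1-4.ckpt at $DEST before running txt2img." >&2
```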

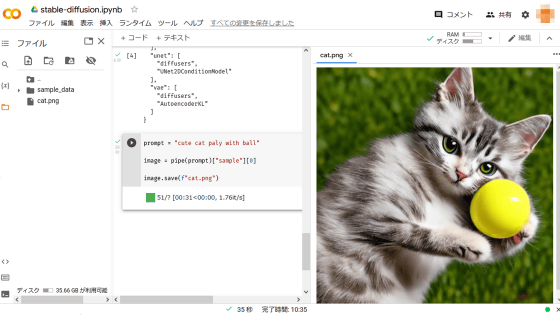

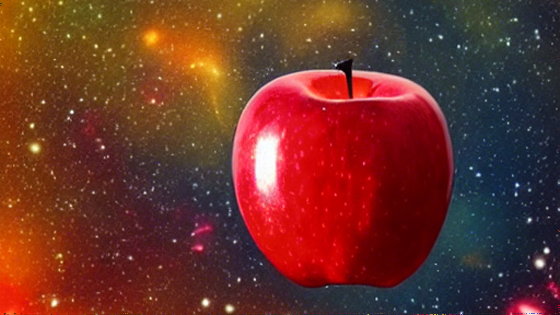

Setup is now complete. Run the script as follows, passing a prompt such as 'a red juicy apple floating in outer space, like a planet'.

python scripts/txt2img.py \
  --prompt 'a red juicy apple floating in outer space, like a planet' \
  --n_samples 1 --n_iter 1 --plms
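To try different prompts without editing the command each time, the txt2img command above can be wrapped in a shell function. This is a sketch; `sd_txt2img` is a hypothetical name, and the flag meanings are taken from the upstream CompVis txt2img.py help text (`--n_samples` is the batch size, `--n_iter` how many times to sample, `--plms` selects the PLMS sampler), assuming the fork keeps them unchanged.

```shell
#!/usr/bin/env bash
# Sketch: run txt2img with the prompt given as arguments.
# Run from the stable-diffusion directory with the virtualenv active.
sd_txt2img() {
  if [ "$#" -eq 0 ]; then
    echo "usage: sd_txt2img <prompt words...>" >&2
    return 2
  fi
  # "$*" joins all arguments into a single prompt, separated by spaces.
  python scripts/txt2img.py \
    --prompt "$*" \
    --n_samples 1 --n_iter 1 --plms
}
```

Usage: `sd_txt2img a red juicy apple floating in outer space, like a planet`. Raising `--n_samples` produces more images per run but needs more memory, which matters on 8GB machines.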

The generated image, shown below, is saved in 'outputs/txt2img-samples/'.
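To view the latest result after each run, a helper can pick the most recently modified PNG. This sketch searches the output folder and one level of subfolders, on the assumption that the script may also save individual samples in a subfolder; `latest_output` is a hypothetical name. `open` is the standard macOS command that opens a file in its default app.

```shell
#!/usr/bin/env bash
# Sketch: print the most recently modified PNG under the txt2img output folder.
latest_output() {
  local dir=${1:-outputs/txt2img-samples} newest="" f had_nullglob=0
  # Remember the caller's nullglob setting so it can be restored.
  shopt -q nullglob && had_nullglob=1
  shopt -s nullglob
  # Look in the folder itself and one level of subfolders.
  for f in "$dir"/*.png "$dir"/*/*.png; do
    if [ -z "$newest" ] || [ "$f" -nt "$newest" ]; then
      newest=$f
    fi
  done
  [ "$had_nullglob" -eq 1 ] || shopt -u nullglob
  [ -n "$newest" ] && printf '%s\n' "$newest"
}

# Usage on macOS: open the newest image in the default viewer.
# img=$(latest_output) && open "$img"
```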

Replicate notes that this method is not its own: all credit goes to the developers who contributed to the Stable Diffusion fork published on GitHub and who took part in the related GitHub thread. Its only addition to their work was using pip to install the dependencies to simplify the setup. 'We are just messengers of their great work,' Replicate says.

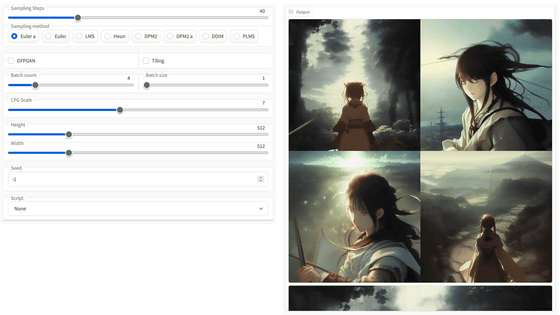

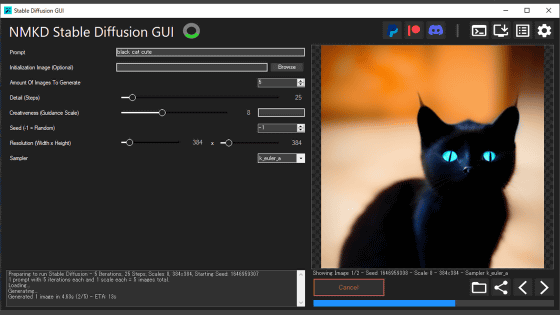

In addition, 'NMKD Stable Diffusion GUI', which installs Stable Diffusion on Windows and lets you operate it through a GUI, and 'stable_diffusion.openvino', which can run on Intel GPUs, have also been released.

'NMKD Stable Diffusion GUI', which installs the image generation AI 'Stable Diffusion' on Windows with a single button and lets you operate it through a GUI, is finally here - GIGAZINE


in Software, Web Service, Posted by log1h_ik