Meta is building the world's fastest AI supercomputer with 16,000 GPUs

Introducing the AI Research SuperCluster — Meta's cutting-edge AI supercomputer for AI research

https://ai.facebook.com/blog/ai-rsc

Meta Collaborates with NVIDIA on AI Research Supercomputer | NVIDIA Blog

Meta's Massive New AI Supercomputer Will Be 'World's Fastest'

https://www.hpcwire.com/2022/01/24/metas-massive-new-ai-supercomputer-will-be-worlds-fastest/

Meta has built an AI supercomputer it says will be world's fastest by end of 2022 - The Verge

https://www.theverge.com/2022/1/24/22898651/meta-artificial-intelligence-ai-supercomputer-rsc-2022

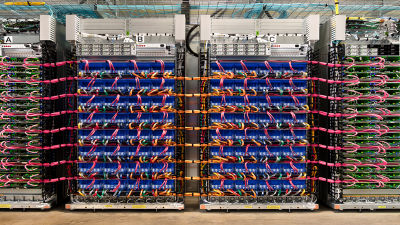

Developing AI (artificial intelligence) requires 'AI supercomputers': machines specialized for computation that can perform billions of operations per second.

RSC, announced by Meta, is still under construction and is expected to become the world's fastest AI supercomputer once it is completed in mid-2022. Meta researchers have already begun training large-scale natural language processing and computer vision models on RSC, and plan to train models with trillions of parameters in the future.

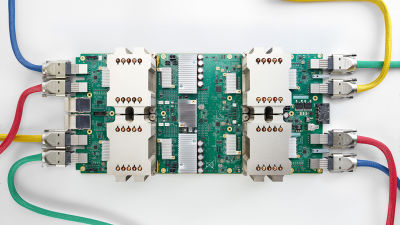

Meta (then Facebook) has invested in AI for the long term since establishing the Facebook AI Research lab in 2013. In 2017 it built its first generation of AI supercomputer, equipped with 22,000 NVIDIA V100 Tensor Core GPUs, which ran 35,000 training jobs a day.

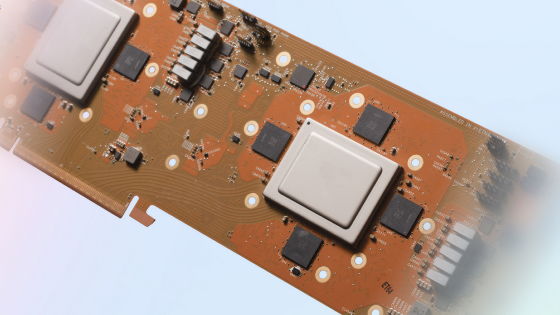

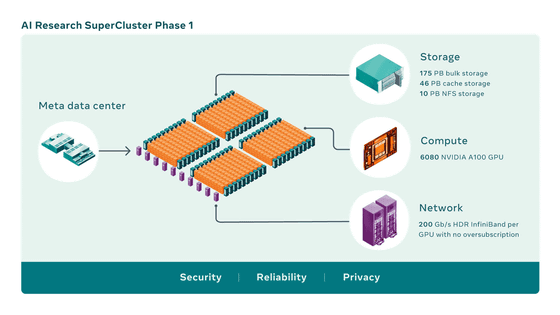

RSC is equipped with 760 NVIDIA DGX A100 systems. Each DGX A100 contains eight NVIDIA A100 Tensor Core GPUs, which are more powerful than the V100, for a total of 6,080 GPUs. On the storage side, RSC has 175 PB (petabytes) of storage, 46 PB of cache storage, and 10 PB of Pure Storage FlashBlade.
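The Phase 1 figures can be checked with simple arithmetic. The sketch below uses only the numbers reported above; the constant and function names are hypothetical, and the storage figure is just a sum of the listed tier capacities, not a statement about usable capacity.

```python
# Phase 1 figures as reported in the article (names are illustrative).
GPUS_PER_DGX_A100 = 8   # each DGX A100 system holds eight A100 GPUs
PHASE1_NODES = 760      # DGX A100 systems in Phase 1

# Listed storage tiers, in petabytes.
STORAGE_TIERS_PB = {
    "storage": 175,
    "cache": 46,
    "Pure Storage FlashBlade": 10,
}


def total_gpus(nodes: int, gpus_per_node: int = GPUS_PER_DGX_A100) -> int:
    """Total GPU count for a cluster built from identical nodes."""
    return nodes * gpus_per_node


phase1_gpus = total_gpus(PHASE1_NODES)
# Plain sum of the tier capacities listed above.
total_storage_pb = sum(STORAGE_TIERS_PB.values())

print(f"Phase 1 GPUs: {phase1_gpus}")                  # Phase 1 GPUs: 6080
print(f"Listed storage tiers: {total_storage_pb} PB")  # Listed storage tiers: 231 PB
```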

Compared with conventional V100-equipped machines, RSC runs computer vision workflows up to 20 times faster and runs the NVIDIA Collective Communication Library (NCCL) about 9 times faster. It can also complete training of a model with tens of billions of parameters in 3 weeks.
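NCCL provides collective operations such as all-reduce, which multi-GPU training uses to combine gradients across GPUs. The sketch below models only what an all-reduce (sum) produces, using a hypothetical `all_reduce_sum` function over plain Python lists; it is not NCCL's API, and NCCL itself achieves the same result with bandwidth-efficient algorithms such as ring and tree.

```python
from typing import List


def all_reduce_sum(per_rank: List[List[float]]) -> List[List[float]]:
    """Naive all-reduce: every rank ends up with the element-wise sum over all ranks.

    This illustrates only the result of the collective, not how NCCL
    moves data between GPUs.
    """
    if not per_rank:
        return []
    length = len(per_rank[0])
    if any(len(v) != length for v in per_rank):
        raise ValueError("all ranks must contribute vectors of the same length")
    # Element-wise sum across ranks.
    summed = [sum(values) for values in zip(*per_rank)]
    # Each rank receives its own copy of the reduced vector.
    return [list(summed) for _ in per_rank]


# Four hypothetical "GPUs", each holding a two-element gradient.
grads = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
print(all_reduce_sum(grads)[0])  # [16.0, 20.0]
```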

The configuration described so far is only 'Phase 1'. In the fully built 'Phase 2', the number of DGX A100 systems will increase to 2,000, bringing the total number of GPUs to 16,000.
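Assuming Phase 2 keeps eight A100 GPUs per DGX A100 system, the 16,000-GPU target fixes the node count. The quick check below uses hypothetical names and only the figures stated in the article.

```python
GPUS_PER_DGX_A100 = 8  # assumption: same node type as Phase 1
PHASE1_GPUS = 6080
PHASE2_GPUS = 16000


def nodes_needed(gpus: int, gpus_per_node: int = GPUS_PER_DGX_A100) -> int:
    """Number of whole nodes needed to host `gpus` GPUs (ceiling division)."""
    return -(-gpus // gpus_per_node)


phase2_nodes = nodes_needed(PHASE2_GPUS)
growth = PHASE2_GPUS / PHASE1_GPUS  # Phase 2 GPU count relative to Phase 1

print(phase2_nodes)      # 2000
print(f"{growth:.2f}x")  # 2.63x
```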


in Hardware, Posted by logc_nt