Here's an example showing that 'illegal image detection systems can easily be fooled'

In recent years, stronger privacy protections for devices and communications have had the side effect of making it easier to exchange illegal images such as child sexual abuse material (CSAM). To counter this, mechanisms have been developed that detect illegal images among the images stored on a device, but a new study has shown that it is easy to trick these algorithms and evade detection.

Adversarial Detection Avoidance Attacks: Evaluating the robustness of perceptual hashing-based client-side scanning | USENIX

https://www.usenix.org/conference/usenixsecurity22/presentation/jain

Proposed illegal image detectors on devices are 'easily fooled' | Imperial News | Imperial College London

https://www.imperial.ac.uk/news/231778/proposed-illegal-image-detectors-devices-easily/

Apple products, which are marketed on strong privacy, have at times been called 'the best platform for distributing child pornography,' and in August 2021 Apple announced new safety features that would scan iPhone photos and messages with the aim of preventing the sexual exploitation of children. Many people, however, objected that this amounted to an invasion of privacy.

Apple announces it will scan iPhone photos and messages to prevent sexual exploitation of children; the Electronic Frontier Foundation and others protest that 'it will compromise user security and privacy' - GIGAZINE

Following the criticism, Apple announced that it would postpone the introduction of the safety features. However, companies other than Apple, as well as governments, have also proposed detecting illegal content by installing scanning systems on smartphones, tablets, laptops, and other devices. Researchers at Imperial College London investigated whether such scanners actually work as intended.

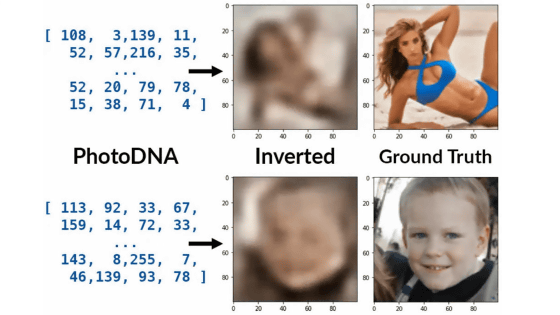

The safety feature announced by Apple generates a hash value from images stored on the device or in the cloud and checks it against a database of known CSAM to detect child pornography. It relies on an algorithm of the type known as perceptual hashing-based client-side scanning (PH-CSS).

Put simply, a PH-CSS algorithm scans the images stored on the device, compares each image's 'signature' with the 'signatures' of known illegal images, and, if a match is found, promptly notifies the company that operates the algorithm or a law enforcement agency. To investigate the robustness of such algorithms, the research team used a new class of tests called 'detection avoidance attacks' to see whether illegal images to which a filter had been applied could evade the algorithm.
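The signature comparison described above can be illustrated with a minimal sketch. It uses a toy "average hash" as a stand-in for the perceptual hash; it is not Apple's algorithm or any of the algorithms evaluated in the study. The function names, the 8x8 hash size, and the threshold of 5 bits are all assumptions chosen for illustration.

```python
from typing import Iterable, List, Sequence

# Grayscale image as rows of pixel values (0-255); rows must have equal length.
Image = Sequence[Sequence[int]]

HASH_SIDE = 8  # assumed: the signature has HASH_SIDE * HASH_SIDE = 64 bits


def average_hash(image: Image, side: int = HASH_SIDE) -> int:
    """Toy perceptual hash: block-average down to side x side cells,
    then emit one bit per cell (1 if the cell is brighter than the mean).
    Assumes the image is at least side x side pixels."""
    height, width = len(image), len(image[0])
    cells: List[float] = []
    for by in range(side):
        y0, y1 = by * height // side, (by + 1) * height // side
        for bx in range(side):
            x0, x1 = bx * width // side, (bx + 1) * width // side
            block = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two signatures differ."""
    return bin(a ^ b).count("1")


def is_flagged(image: Image, database: Iterable[int], threshold: int = 5) -> bool:
    """Flag the image if its signature is within `threshold` bits of any
    signature in the database (threshold is a hypothetical value)."""
    signature = average_hash(image)
    return any(hamming_distance(signature, known) <= threshold for known in database)


# Harmless synthetic images standing in for real photos.
original = [[x * 4 + y * 2 for x in range(32)] for y in range(32)]
brightened = [[p + 2 for p in row] for row in original]    # near-duplicate
inverted = [[255 - p for p in row] for row in original]    # visually different

database = [average_hash(original)]
print(is_flagged(brightened, database))  # True: signature is unchanged
print(is_flagged(inverted, database))    # False: every bit differs
```

Because the decision depends only on how many signature bits differ, an image is treated as "different" as soon as its signature moves past the threshold, regardless of how similar it looks to a person.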

The research team first tagged harmless images as 'illegal' and fed them to an algorithm of their own that was similar to Apple's. After confirming that the algorithm correctly flagged the tagged images, the team applied a visually imperceptible filter to the same images and fed them to the algorithm again.

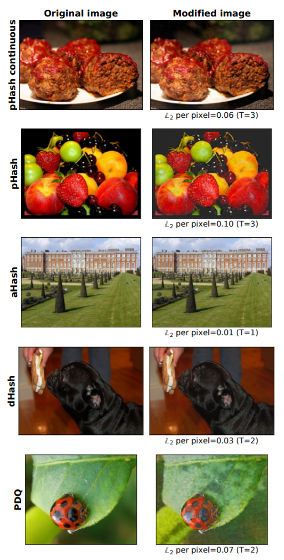

To the human eye the filtered and unfiltered images look alike, but in the test the algorithm judged them to be 'different' 99.9% of the time. Below is the original image on the left and the filtered image on the right.

This means that it's easy for someone with an illegal image to deceive the algorithm, the research team says. In response to this result, the research team decided not to release the filter generation software.

in Software, Posted by darkhorse_log