How was the demo that rendered Final Fantasy as ultra-high-quality real-time CG made?

Square Enix's real-time video work "Agni's Philosophy FINAL FANTASY REALTIME TECH DEMO" was made to show the image quality of the high-end games and the world-building experience that will arrive in the near future, and it has been played approximately 2.33 million times on YouTube. By showing what Final Fantasy would look like as next-generation real-time CG with extremely high image quality, it once again made people feel Square Enix's underlying strength. The making of this work was presented at CEDEC 2012 in the session "Making of "Agni's Philosophy - FINAL FANTASY REALTIME TECH DEMO" ~ The future of real time CG video ~".

Making of "Agni's Philosophy - FINAL FANTASY REALTIME TECH DEMO" ~ The future of real time CG video ~

http://cedec.cesa.or.jp/2012/program/VA/C12_P0213.html

Mr. Yoshihisa Hashimoto (hereinafter Hashimoto):

The session is titled "the future of real-time CG video", but what we will actually present is making-of information about the real-time video work Square Enix released at E3 2012 in June, told to some extent from the artists' side. First, let us introduce the speakers. I am Hashimoto. I am the CTO of Square Enix, the development leader of the new-generation game engine "Luminous Studio", and the producer and director of "Agni's Philosophy".

Takeshi Nozue (hereinafter Nozue):

I am Nozue, Chief Creative Director of the Visual Works Department. At Visual Works I mainly make CG movies. On "Agni's Philosophy" I served as creative director. Thank you.

Ryo Iwata (hereinafter Iwata):

I am Iwata. I previously worked in the Visual Works Department, then worked mainly on "Final Fantasy" titles on the game side, and I am now a lead artist in the Technology Promotion Department. Thank you.

Hashimoto:

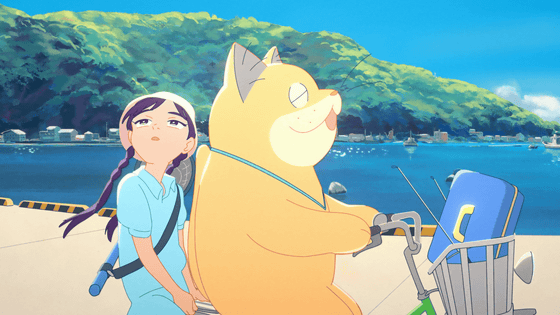

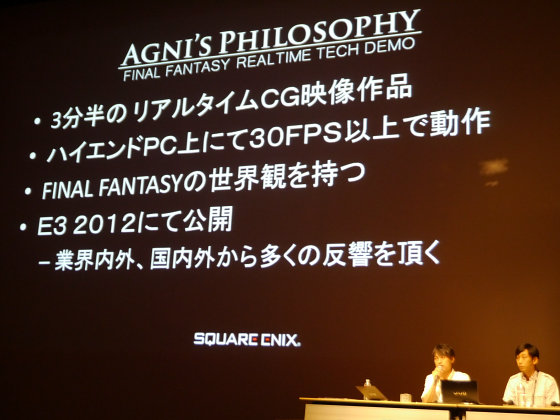

First, let me briefly explain what "Agni's Philosophy" is. It is a real-time video work, about three and a half minutes long, that runs at 30 FPS or more on a high-end PC. We made it as a video work set in the world of "Final Fantasy". Since it was published at E3 2012, the response from overseas, including from people in the film industry, has been greater than the response in Japan, and we have received positive impressions from many people.

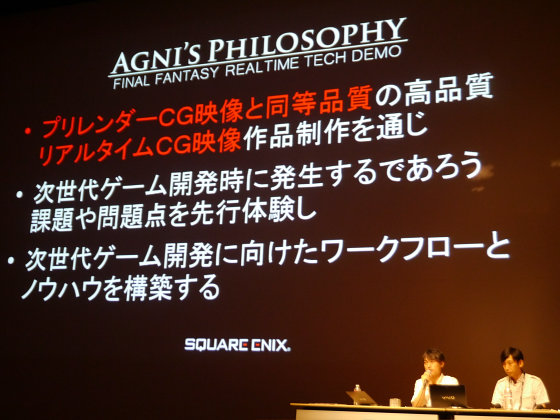

Next, the purpose of this project. By creating real-time CG video with quality equivalent to pre-rendered CG video, we aimed to experience ahead of time the challenges that will arise in next-generation game development, and to build the workflows and know-how needed to make next-generation games. In particular, with the goal "create real-time CG video with quality equivalent to pre-rendered CG video", we aimed first of all to match pre-rendered CG movies on the visual side.

Now, please watch the work itself.

The movie shown at the venue is below.

Agni's Philosophy - FINAL FANTASY REALTIME TECH DEMO - YouTube

Hashimoto:

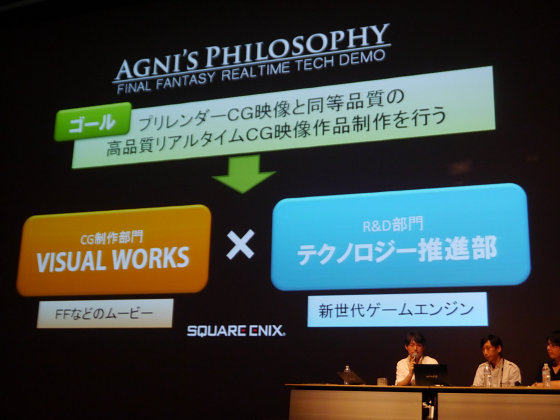

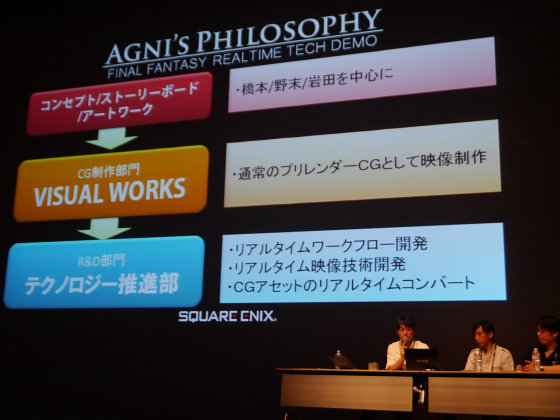

In this session we will show how this work was created from both sides: the CG department, the "Visual Works Department", and the R&D department, the "Technology Promotion Department".

First, the structure of the project. As I mentioned, one of the goals we set from the start was "to make real-time CG video of quality equivalent to pre-rendered CG video", and this had to be achieved. So we first decided that the content itself should be made originally as a "VISUAL WORKS" piece. "VISUAL WORKS" is the division that makes CG movies such as those for "Final Fantasy", and the data was created there. The "Technology Promotion Department", which builds the game engine, then received that data and reproduced it in real time without degradation. The project was a collaboration between these two departments.

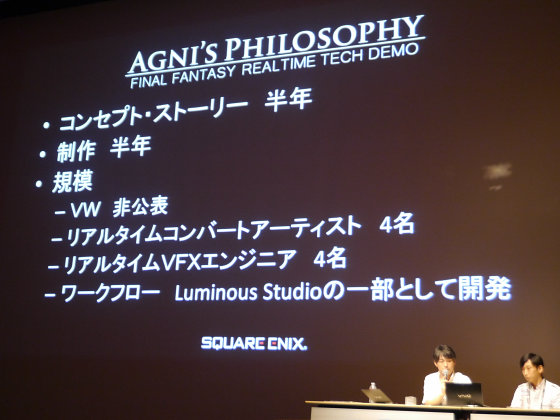

Hashimoto, Nozue, and Iwata mainly created the basic flow of concepts, storyboards, and artwork, and then the Visual Works Department produced the images as regular pre-rendered CG. In particular, they deliberately did not worry about it being real time and made the CG exactly as they normally make movies. After that, the Technology Promotion Department received the completed set and built a real-time workflow, that is, a way to convert the received data. We also developed visual technologies for skin, hair, eyes, and so on, and then artists actually carried out the conversion. As a brief production outline: the concept and story work took about half a year, and then asset production in the Visual Works Department, technology development in the Technology Promotion Department, library construction, and so on ran in parallel for about another six months. As for team size, the Visual Works side will remain undisclosed, but please think of it as the scale of a normal CG movie. Of the two sides, the one that really had it tough was ....

Nozue:

This time we really put a lot of people on it.

Hashimoto:

Doing it in real time was not the hard part; the real challenge was inside the Visual Works Department.

Nozue:

I agree.

Hashimoto:

It felt like "we're really going this far?". On the other hand, only about four artists worked on the real-time conversion. That is probably fewer than you imagine.

Iwata:

I agree.

Hashimoto:

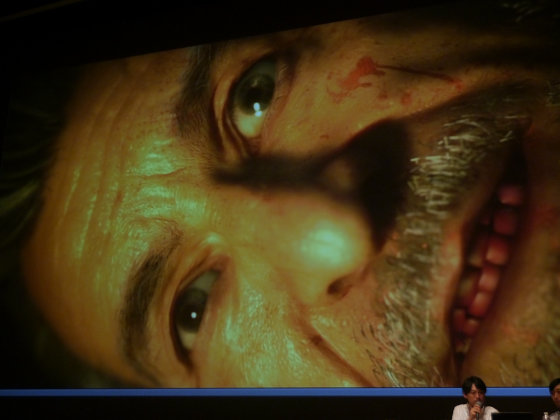

That part worked relatively well, so it went fairly smoothly. The core was the VFX people and the people making hair, eyes, and shaders, four people here, plus a few others. It was done on a surprisingly small scale. As a concept, our keyword was "Believability", meaning credibility or persuasiveness. Although it is a world with magic, it is not an unconvincing world where, for example, characters take punches without ever getting hurt; the hero feels fear, gets injured, and bleeds, and we stuck to that. We discussed thoroughly what "Final Fantasy" is, and tried to reach as wide an audience as possible, targeting Japanese and overseas viewers, men and women. Now, Nozue will explain.

Nozue:

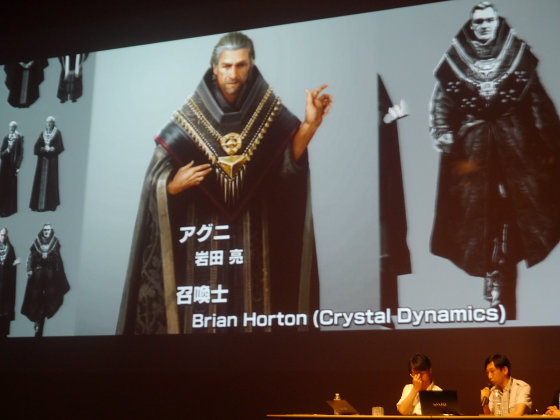

Now for the Visual Works Department's part, starting with its workflow. Based on the scenario and concepts, we model the characters and the BG (background), and then hand them to the layout team. From the resulting layout, the data goes through animation, simulation, and VFX, and is finally gathered for lighting, rendering, and compositing. That is the flow. As for the characters in this concept art, Iwata drew the hero, Agni, and for the summoner we asked Brian Horton, art director at "Crystal Dynamics".

We also brought on Brenoch Adams of "Crystal Dynamics" and Mr. Fuyasu of "INEI Corporation".

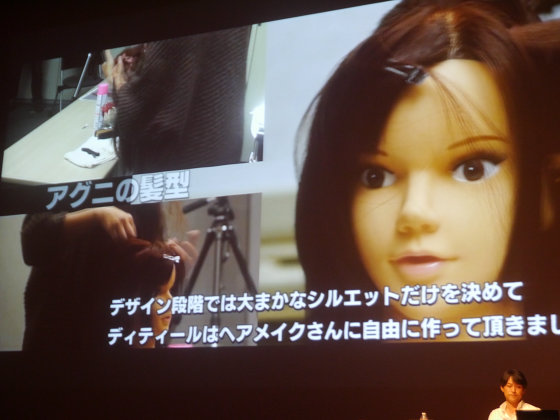

Next is "Character modeling". For Agni's hairstyle, we actually had a hair and makeup artist create the various details, and we modeled it by referring to that.

Hashimoto:

It would be hard to picture otherwise.

Nozue:

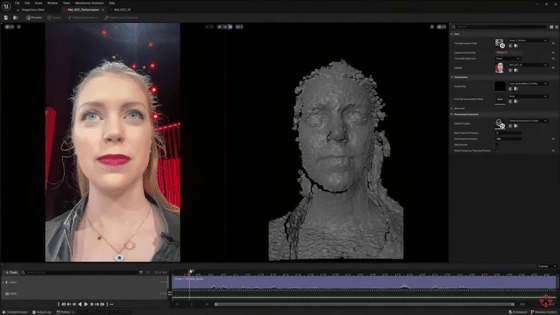

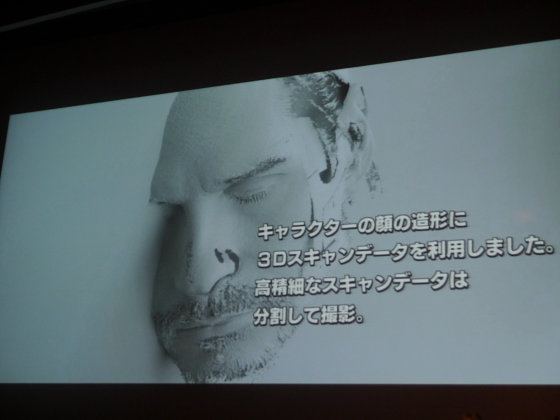

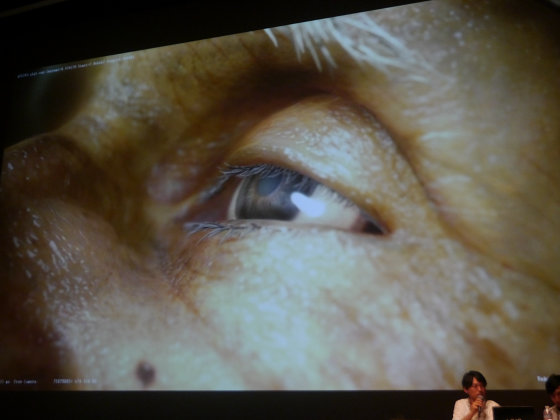

Right. It had something like bunches of braids along the side, which I could not have pictured on my own, so I learned quite a lot. We also used 3D scan data for the characters' faces this time and combined it with the modeling. HDRIs shot alongside the people were also used as check lighting for the characters.

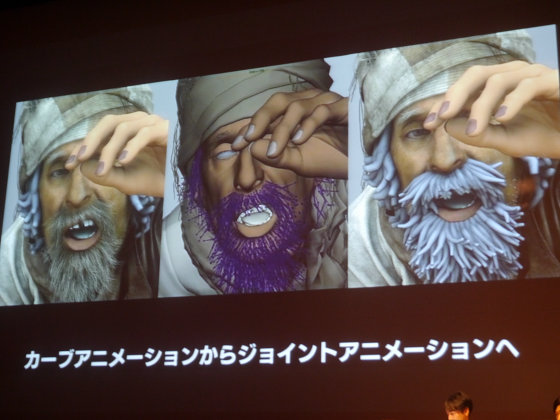

Next, character rigging. First we test it experimentally with test motions. Next is the hair: since it has nearly 1,500 control parts, we color-coded them to make adjustment easier. The curve animation is then converted into joint animation.
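The talk does not say how the hair curves were converted into joints. As a rough illustration only, one common approach is to place a fixed number of joints along each guide curve, spaced evenly by arc length, and repeat that every frame so the joints follow the animated curve. The sketch below assumes each curve is given as a polyline of 3D points; `resample_curve` is a hypothetical helper, not part of Maya or Luminous Studio.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]


def resample_curve(points: List[Point], joint_count: int) -> List[Point]:
    """Place joint_count joints evenly by arc length along a polyline curve."""
    if joint_count < 2 or len(points) < 2:
        raise ValueError("need at least two curve points and two joints")
    # Cumulative arc length at each control point.
    cumulative = [0.0]
    for a, b in zip(points, points[1:]):
        cumulative.append(cumulative[-1] + math.dist(a, b))
    total = cumulative[-1]

    joints: List[Point] = []
    segment = 0
    for i in range(joint_count):
        target = total * i / (joint_count - 1)
        # Advance to the segment that contains the target arc length.
        while segment < len(points) - 2 and cumulative[segment + 1] < target:
            segment += 1
        seg_len = cumulative[segment + 1] - cumulative[segment]
        t = 0.0 if seg_len == 0 else (target - cumulative[segment]) / seg_len
        t = min(max(t, 0.0), 1.0)  # guard against floating-point overshoot
        a, b = points[segment], points[segment + 1]
        # Linear interpolation inside the segment.
        joints.append(tuple(pa + (pb - pa) * t for pa, pb in zip(a, b)))
    return joints
```

Calling this once per frame on the animated curve, for example `resample_curve(curve_at_frame, 8)`, gives joint positions that can be keyed as joint animation. Arc-length spacing keeps the joints from bunching up where the curve's control points happen to be dense.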

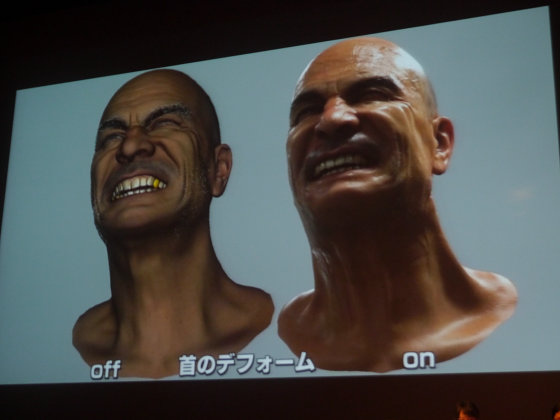

This is the facial rigging. This time the characters have quite a lot of wrinkles, so we experimented like this. We express the deformation of the neck, the sagging of the jaw, and so on. The various characters are driven by motion data.

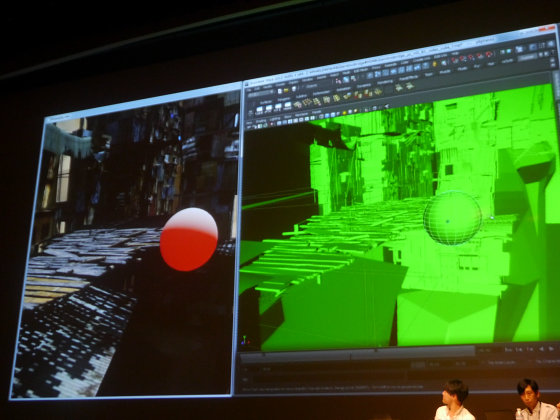

For the background, we first did pre-production. We combined various parts into a pilot and built it according to the concept art. Based on that, we split it into assets, in a form close to how game backgrounds are made.

Hashimoto:

Some backgrounds are even used as-is, without any changes at all.

Nozue:

The crystals have a heavy feel, with various minerals mixed in to give them a realistic monochrome look. And from here on are some behind-the-scenes photos. The long takes apparently made it really tough.

Hashimoto:

Although it looks like he is injured, (laugh)

No end

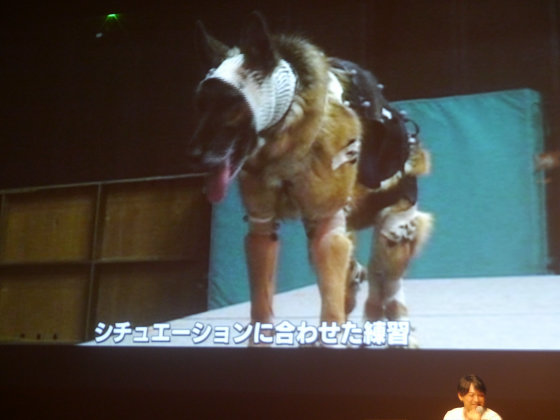

I am not injured, I do not care abuse, so it's okay (laugh). I am practicing according to situations. After all it was quite difficult to perform, so we had to take the technique of separately recording and finally combining it.

For facial capture, the summoner's model memorized nearly a minute of complicated lines and was captured in one continuous session.

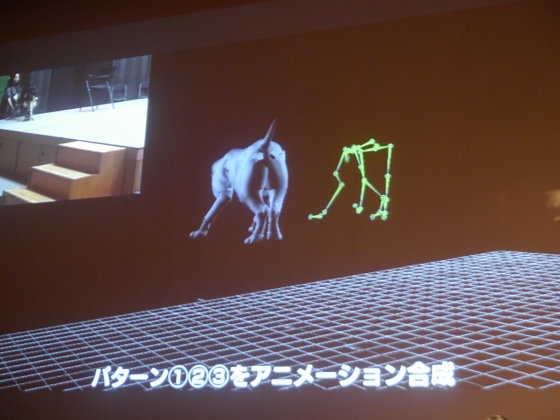

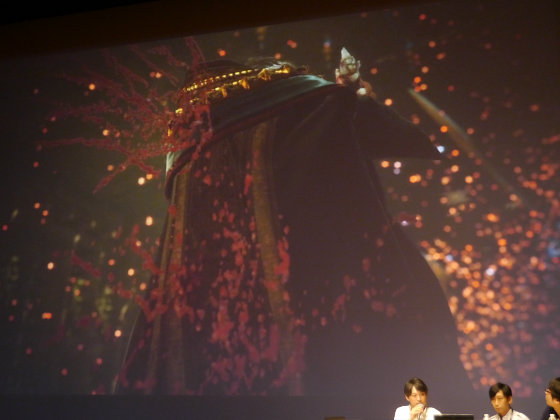

Next is VFX. This is the scene where insects swarm together and become the summoned beast.

Hashimoto:

When this scene came in, I wondered what we were going to do (laughs). I wondered whether it was even possible....

Nozue:

For lighting, we used lightweight models to build a flow that lets lighting work start as early as possible.

Iwata:

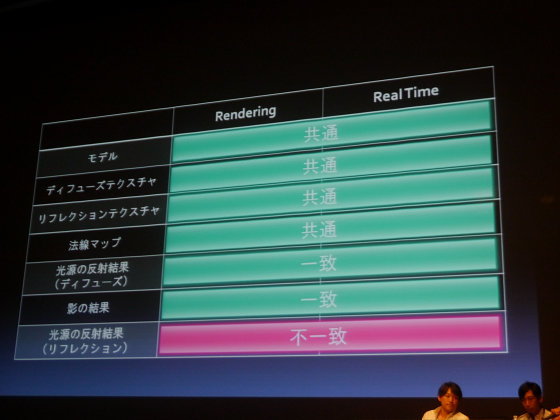

Next, I will introduce the real-time part. We did thorough basic research before taking on this project. The research focused mainly on visuals: first we took a photo, reproduced it with pre-rendering, and then carefully made that work in real time. We gradually expanded from small objects and built backgrounds the same way. Above all, we tried first to identify what pre-rendering and real time have in common and where they differ.

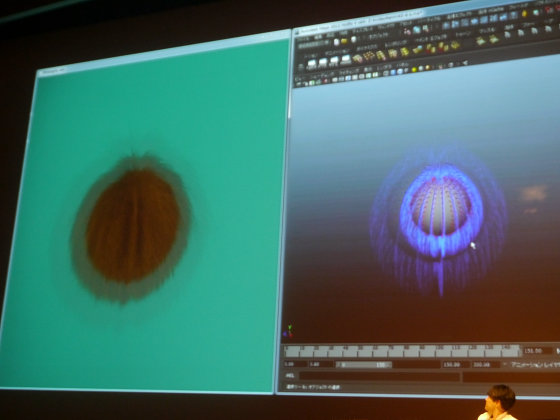

As you can see, we realized, somewhat to our surprise, that with the same data the pre-rendered and real-time results can now be brought very close. We stepped up from there. First, we used "Maya" as the intermediary for the data received from the Visual Works Department, picking up the data there and working with it. Here the two screens are each running full-screen, and you can see color and intensity changes in real time. In this way, everything made in "Maya" works in "Luminous Studio". Data can be sent frequently, at any time you like. If you push a complicated animation across as-is, it is reproduced in "Luminous Studio". We dealt with the necessary elements one by one like this. The most troublesome area was the hair, but since its base was made in "Maya", it would have been a waste not to make use of it, so we worked hard to reproduce the curves with good results.

Effects still need a lot of development, but the mechanism works like this: the source emitters, the winds and turbulence that control them, and their axes are all handled with the same parameters as in "Maya", so the effects we developed can be driven from there. Emitters and so on can be attached in "Maya".
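The emitter setup described above, with wind and turbulence parameters shared between Maya and the engine, can be sketched on the CPU as follows. This is a hypothetical simplification: the parameter names, the sine-based turbulence (a stand-in for proper noise), and the semi-implicit Euler integration are assumptions, the emitter axis is omitted, and the demo's 100,000-insect effect ran on the GPU rather than in a Python loop.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class EmitterParams:
    # Hypothetical parameter set; names are illustrative, not Maya or Luminous Studio attributes.
    rate: int = 10                  # particles spawned per step
    wind: Vec3 = (1.0, 0.0, 0.0)    # constant acceleration applied to every particle
    turbulence: float = 0.5         # amplitude of the swirling force
    lifetime: float = 2.0           # seconds before a particle is culled


@dataclass
class Particle:
    pos: List[float]
    vel: List[float]
    age: float = 0.0


def turbulence_force(pos: List[float], time: float, amplitude: float) -> List[float]:
    # Cheap, deterministic swirl built from sines; a stand-in for real noise such as curl noise.
    x, y, z = pos
    return [amplitude * math.sin(1.3 * y + time),
            amplitude * math.sin(1.7 * z + time),
            amplitude * math.sin(1.1 * x + time)]


def step(particles: List[Particle], params: EmitterParams, origin: Vec3,
         time: float, dt: float, rng: random.Random) -> None:
    # Spawn new particles at the emitter origin with a small random initial velocity.
    for _ in range(params.rate):
        vel = [rng.uniform(-0.1, 0.1) for _ in range(3)]
        particles.append(Particle(pos=list(origin), vel=vel))
    # Semi-implicit Euler: update velocity from the forces, then position from the new velocity.
    for p in particles:
        turb = turbulence_force(p.pos, time, params.turbulence)
        for i in range(3):
            p.vel[i] += (params.wind[i] + turb[i]) * dt
            p.pos[i] += p.vel[i] * dt
        p.age += dt
    # Remove particles that have exceeded their lifetime.
    particles[:] = [p for p in particles if p.age < params.lifetime]
```

Keeping the forces as plain data in `EmitterParams` mirrors the idea in the talk: if the authoring tool and the runtime read the same parameters, an effect tuned in one behaves the same in the other.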

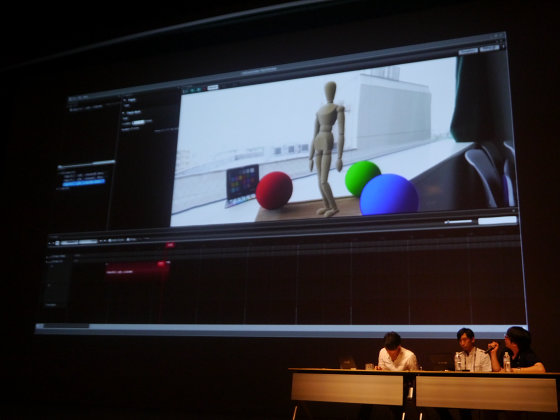

Next is lighting. Because we had done solid groundwork, we did not have much trouble; we generally knew what we could display, so it was relatively easy. First, to make light maps easy to create, we made it so that you do not have to do anything difficult: you push a button and it works much like rendering. By dragging and dropping the sequences made of these elements onto the editor "CutSceneEditor" and arranging them, we assemble a single movie like "Agni's Philosophy". This is where the Visual Works Department's data finally gets converted.

Now let me show how we actually handled the Visual Works Department's data. First, since very data-rich characters come in, we load the character and try to automate as much as possible. In fact, we built a batch-processing mechanism that automatically handles the parts that do not need a person's involvement. The only manual step is laying out the second UV set; after that, you load it, run the batch, and apply the animation, and it can be displayed immediately. Since there are a huge number of nodes, the system is designed so that as much as possible can be done with little effort. Roughly speaking, once the data for the first scene arrives from the Visual Works Department, it can be displayed in about one day. Since we handle 32-bit data, HDR is well supported too.
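To illustrate why 32-bit data helps with HDR: a 32-bit floating-point channel can store values above 1.0, such as a bright highlight, while a typical 8-bit channel clamps them away. The sketch below uses Python's `struct` module to round-trip a value through IEEE 754 single precision; the 8-bit encoding shown is a generic clamp-and-quantize assumption, not Luminous Studio's actual format, and the sample values are made up for illustration.

```python
import struct


def to_float32(value: float) -> float:
    # Round-trip through IEEE 754 single precision, as a 32-bit float channel stores it.
    return struct.unpack("<f", struct.pack("<f", value))[0]


def to_8bit(value: float) -> int:
    # Generic low-dynamic-range encoding: clamp to [0, 1], then quantize to 0..255.
    return round(min(max(value, 0.0), 1.0) * 255)


def from_8bit(code: int) -> float:
    return code / 255.0


highlight, sky = 12.5, 0.8  # illustrative linear intensities (assumed, not from the demo)
# In float32 the highlight keeps its brightness relative to the sky...
print(to_float32(highlight) / to_float32(sky))
# ...while after 8-bit clamping the highlight is no brighter than pure white.
print(from_8bit(to_8bit(highlight)) / from_8bit(to_8bit(sky)))
```

Preserving those out-of-range values is what lets later stages such as bloom or exposure adjustment treat a highlight as genuinely bright instead of merely white.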

Other assets are handled the same way as the characters. For a moving bridge, for example, we receive it, push the button, wait a moment, and pour the animation onto it. Even when an asset from the Visual Works Department needs to be reduced, running the batch on the reduced version reads the data in in that reduced state. After the characters and backgrounds are converted, the sequences are assembled: we import the backgrounds manually and import the characters so that they can be seen in the form of ...... However, since we use references, the scene is already complete when the conversion ends. It is assembled automatically, and you can see the result just by waiting. At this stage it already looks much better. Once we reach this point, we take our time polishing it.

Hashimoto:

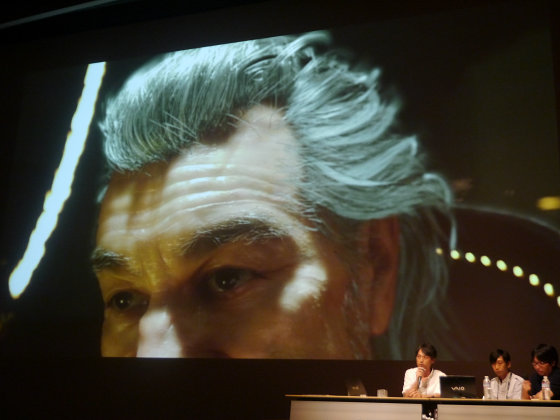

From here I will talk a little about hair, beards, and other individual technologies. Since "Agni's Philosophy" takes "Final Fantasy" as its motif, we paid particular attention to how the characters are expressed. As a result, from the Visual Works Department's point of view, the quality of the hair and beards was ...

Nozue:

Better than I had imagined. At the beginning I thought it might be tough.

Hashimoto:

For skin, we put a great deal of effort into the expression. We were also particular about refraction and reflection, especially refraction in the eyes.

This is the scene where the summoner is dying, and it's a little gruesome (laughs). Can you see the slight refraction? The eye is modeled as a perfectly round ball with reflections, and seen from the side it looks like a lens.

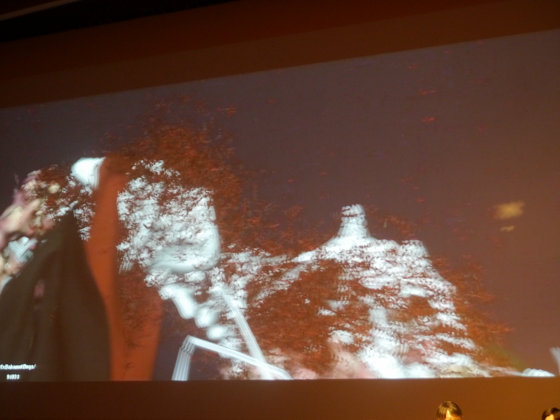

Particles: this is a somewhat unusual example, but the insects are expressed as particles. In this scene, insects gather around the bones of a dragon-like summoned beast and turn into flesh. The red things in this space are meshes with insect wings and bodies; 100,000 of them are flying, controlled on the GPU. The scene where blood splatters is also done with particles, and particles are used in various other places as well.

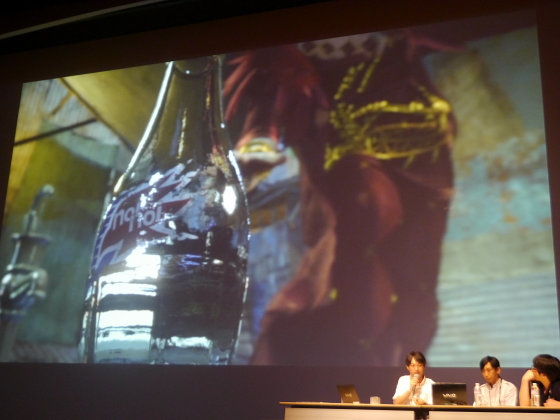

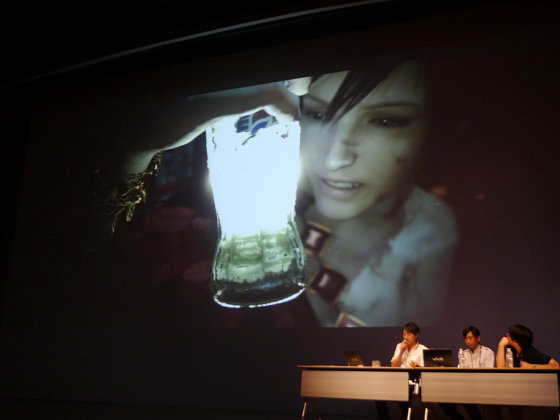

As for refraction, besides the eyes shown earlier, this bottle performs quite realistic refraction: it takes into account information from all sides, including the wall behind the bottle, the wall in front, the water surface, and the near side of the glass, and renders it with refraction. On top of that, the lighting calculations are physically solid, so lighting this magic effect was simply a matter of placing a light there. I had been wondering "what are we going to do?", but we placed the light and that solved it. It was an example where things turned out surprisingly easy because we had done the calculations properly.
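The talk does not describe Luminous Studio's refraction shader. For reference, each interface mentioned in the bottle example (glass, water surface) bends a ray according to Snell's law, n1 sin θ1 = n2 sin θ2. The sketch below is the standard vector form of that law, the same formula GLSL's built-in `refract()` uses, except that it returns `None` instead of a zero vector on total internal reflection; the index 1.33 for water is a textbook value used only for illustration.

```python
import math
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return sum(x * y for x, y in zip(a, b))


def refract(incident: Vec3, normal: Vec3, eta: float) -> Optional[Vec3]:
    """Refract a unit direction at a surface (vector form of Snell's law).

    incident: unit vector travelling toward the surface.
    normal:   unit surface normal facing the incoming ray.
    eta:      ratio of refractive indices n1 / n2.
    Returns None on total internal reflection.
    """
    cos_i = -dot(normal, incident)
    k = 1.0 - eta * eta * (1.0 - cos_i * cos_i)  # squared cosine of the transmitted angle
    if k < 0.0:
        return None
    scale = eta * cos_i - math.sqrt(k)
    return tuple(eta * i + scale * n for i, n in zip(incident, normal))


# Usage: a ray entering water (n = 1.33) from air at 45 degrees bends toward the normal.
theta = math.radians(45.0)
bent = refract((math.sin(theta), 0.0, -math.cos(theta)), (0.0, 0.0, 1.0), 1.0 / 1.33)
```

A ray passing through the bottle would apply this once per interface it crosses (air to glass, glass to water, and so on), swapping `eta` and flipping the normal when it exits a medium.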

Iwata:

Just moving it around was fun for me, so I think users will enjoy moving it around themselves too.

Hashimoto:

Preparations for producing images with this kind of high quality are progressing steadily. It is coming up soon, but on November 23 (a holiday) and 24 (Saturday) we are holding an open conference where we will share detailed stories we could not cover this time, so please join us if you are free.

We are also planning to release an iPad version of the "Agni's Philosophy" video soon. YouTube probably cannot show it at proper video quality, so on the iPad you will be able to see how beautifully it is actually reproduced. We will release an iPhone version as well, so we hope you will take a look.

All:

Thank you very much.
