Tencent unveils high-performance inference model 'Hy3 preview,' demonstrating high efficiency with a 295B-A21B MoE model.

Tencent, a major Chinese IT company, has released a high-performance inference model, 'Hy3 preview,' as open source from its '

Tencent Hyre

https://hy.tencent.com/hy3-preview

👋Hi /haɪ/, we're the Tencent Hy /haɪ/ team🐧

— Tencent Hy (@TencentHunyuan) April 23, 2026

Today, we open source Hy3 preview (295B A21B), a leading reasoning and agent model in its size, with great cost efficiency.

Give us feedback to help improve Hy3 preview official!

🤗 https://t.co/WNuWahHk3j

📖 https://t.co/oGi2WAcreu pic.twitter.com/GcIqb5N6MS

Hy3 preview is a mixture-of-experts (MoE) model with 295 billion total parameters, of which 21 billion are active per token (hence the '295B-A21B' designation), and a context window of up to 250,000 tokens. It also offers three inference modes that trade off latency against reasoning depth.
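To make the MoE efficiency claim concrete, the following minimal sketch computes the share of parameters that are active per token, taking the 295B total and 21B active figures from the model's 295B-A21B designation. The function and constant names are illustrative assumptions, not part of any Tencent tooling.

```python
# Figures taken from the "295B-A21B" designation (in billions of parameters).
TOTAL_PARAMS_B = 295
ACTIVE_PARAMS_B = 21


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the fraction of an MoE model's parameters used per token."""
    if total_b <= 0 or not 0 <= active_b <= total_b:
        raise ValueError("expected 0 <= active_b <= total_b and total_b > 0")
    return active_b / total_b


fraction = active_fraction(TOTAL_PARAMS_B, ACTIVE_PARAMS_B)
print(f"Active share per token: {fraction:.1%}")  # prints "Active share per token: 7.1%"
```

In other words, only about one fourteenth of the weights take part in each forward pass, which is where the per-token compute savings of an MoE design come from. All 295B parameters still have to be stored.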

In February 2026, Tencent restructured its pre-training and reinforcement learning infrastructure and redefined its three principles for building practical AI: 'systematic capabilities,' 'realistic evaluation rather than rigged public benchmarks,' and 'cost efficiency.' According to Tencent, Hy3 preview is the first model trained on this restructured infrastructure and is 'the most advanced model released to date,' boasting best-in-class cost efficiency and significant improvements in complex reasoning, instruction following, context learning, coding, and agent tasks.

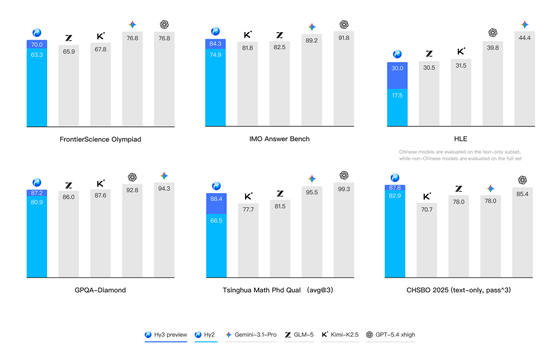

The following charts compare Hy3 preview with competing models on challenging STEM benchmarks, university doctoral entrance exams, and the Chinese High School Biology Olympiad. In each chart, the leftmost bar (light blue) is the previous model, Hy2, and the dark blue bar is Hy3 preview, which improves on Hy2 across all benchmarks.

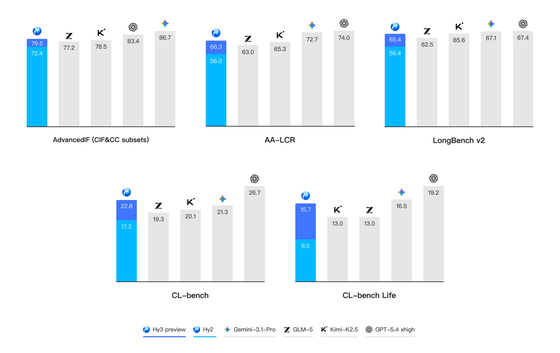

The following are the results of tests conducted using benchmarks developed based on real-world business scenarios. In most tests, the Hy3 preview achieved scores comparable to cutting-edge models such as

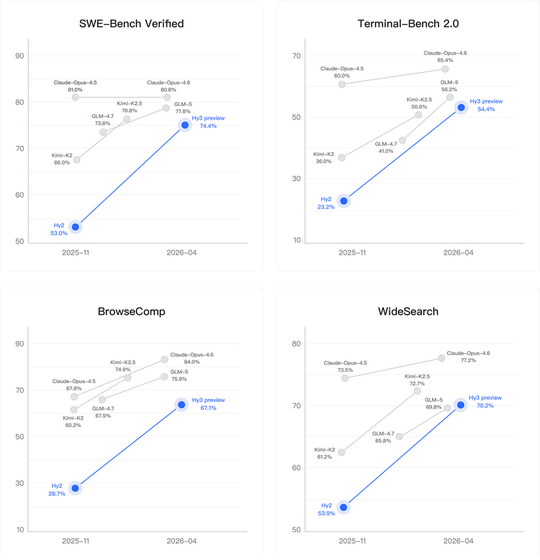

Hy3 preview also improves significantly on Hy2 in coding and agent tasks. Tencent reports that Hy3 preview achieved competitive scores on major coding agent and search agent benchmarks.

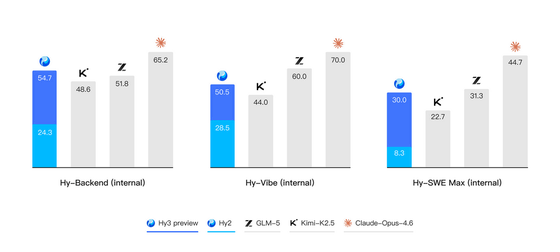

The following are the scores on an evaluation set created to test the model in actual development scenarios. Here again, Hy3 preview scores comparably to other open-source models.

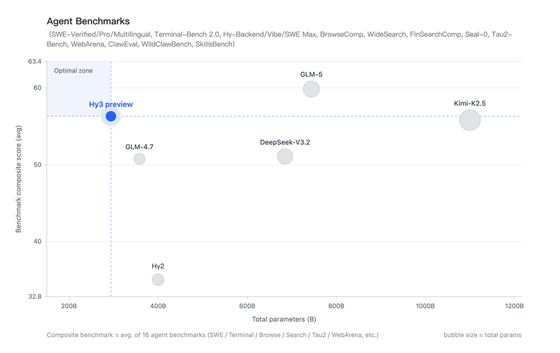

Tencent claims that one of the key features of Hy3 preview is its balance between parameter count and performance. In the chart below, the horizontal axis represents parameter count and the vertical axis represents benchmark scores. Hy3 preview scores lower than Z.ai's GLM-5 but has less than half as many parameters, and it achieves performance comparable to Kimi-K2.5, which has nearly four times as many parameters.

Tencent stated, 'Hy3 preview is the first step in our rebuilding process. The model has been significantly improved, but there are known limitations, such as weak error recovery when calling tools and sensitivity to inference hyperparameters. We are open-sourcing it to get real-world feedback from the community and users, and we will improve the final version before the official release. At the same time, we will scale up pre-training and reinforcement learning, enhance functionality, and work more closely with the product team to co-design the model. Our goal is to improve real-world performance and enhance product-specific features.'

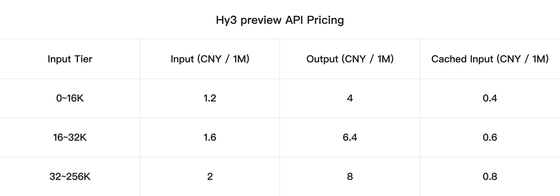

Hy3 preview is available on Hugging Face, and the related code is available on GitHub. An API is also available. For the 0 to 16K input tier, the fee per million tokens is 1.2 yuan (approximately 28 yen) for input and 4 yuan (approximately 94 yen) for output.
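As a rough illustration of what these rates mean per request, the sketch below estimates the cost of a single API call from the quoted prices for the 0 to 16K input tier. The function name and the example token counts are hypothetical, and rates for larger inputs are not given here, so the sketch does not cover them.

```python
# Prices quoted for the 0-16K input tier, in yuan per million tokens.
INPUT_YUAN_PER_MILLION = 1.2
OUTPUT_YUAN_PER_MILLION = 4.0


def estimate_cost_yuan(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in yuan (0-16K input tier only)."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens * INPUT_YUAN_PER_MILLION / 1_000_000
    output_cost = output_tokens * OUTPUT_YUAN_PER_MILLION / 1_000_000
    return input_cost + output_cost


# Hypothetical request: 10,000 input tokens and 2,000 output tokens.
cost = estimate_cost_yuan(10_000, 2_000)
print(f"{cost:.4f} yuan")  # prints "0.0200 yuan"
```

At these rates, a thousand such requests would come to roughly 20 yuan.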

GitHub - Tencent-Hunyuan/Hy3-preview: Hy3 preview (295B A21B), a leading reasoning and agent model in its size, with great cost efficiency · GitHub

https://github.com/Tencent-Hunyuan/Hy3-preview

tencent/Hy3-preview · Hugging Face

https://huggingface.co/tencent/Hy3-preview

in AI, Posted by log1e_dh