We have successfully managed to run the 397B AI model on an iPhone.

It has been revealed that an attempt to run a large model with 397 billion parameters on an iPhone was successful, using Apple's hardware and a method of streaming AI 'weights' from external storage.

Autoresearching Apple's 'LLM in a Flash' to run Qwen 397B locally

https://simonwillison.net/2026/Mar/18/llm-in-a-flash/

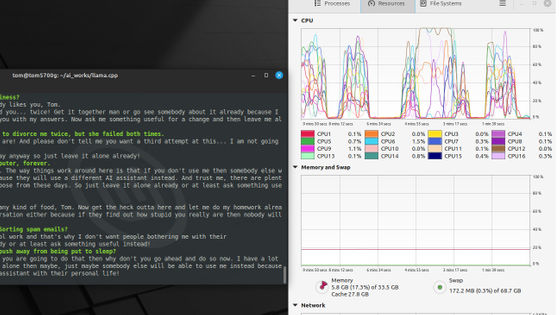

The initiative began with an experiment conducted by AI researcher Dan Woods . Woods addressed the challenge of efficiently running an LLM (Large-Scale Language Model) that exceeded the hardware's DRAM capacity requirements by employing a method called 'LLM in a Flash,' which involves storing the model's parameters in external flash memory and loading them into DRAM as needed. He successfully ran a custom version of 'Qwen3.5-397B-A17B' on a MacBook Pro M3 Max with 209GB of disk space and 48GB of RAM.

The Qwen3.5-397B-A17B employs a Mixture of Experts (MoE) architecture, which allows inference to be performed using only a portion of the weights. This eliminates the need to hold all the information in RAM simultaneously, enabling streaming from external storage.

Woods achieved a processing speed of 5.7 tokens per second and a maximum throughput of 7.07 tokens per second, while maintaining production-level output quality and using approximately 5.5GB of resident memory.

— Dan Woods (@danveloper) March 18, 2026

Based on this information, AI researcher ANEMLL conducted a similar experiment on an iPhone 17 Pro and succeeded in processing at 0.7 tokens per second. Woods was so surprised that he exclaimed, 'WHAT.'

WHAT.

— Dan Woods (@danveloper) March 23, 2026

According to Woods, Claude wrote all the necessary code for the process, and Woods only provided the ideas and reference materials. Although LLM in a Flash and the necessary hardware had existed for some time, he hadn't been able to work on it because it wasn't his area of expertise. However, the Claude Opus 4.6 , which was released in February 2026, proved to be excellent, and this project was finally realized.

Related Posts:

in AI, Smartphone, Posted by log1p_kr