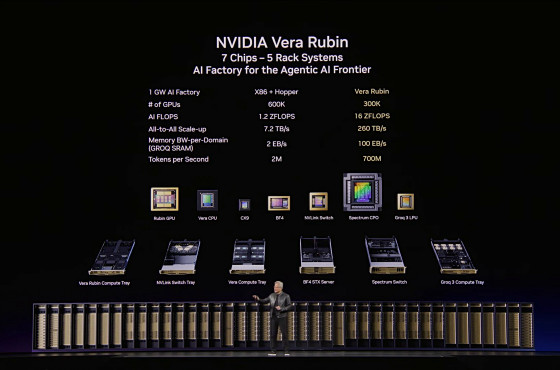

NVIDIA has announced details of its AI-focused GPU 'Rubin' and AI-focused CPU 'Vera,' including the 'Vera Rubin NVL72' AI rack, which delivers 2400 TFLOPS at FP64 precision, and the integration of a Groq high-speed inference chip.

The keynote address for NVIDIA's AI conference, '

Rack-scale agent-based AI supercomputer | NVIDIA Vera Rubin NVL72

https://www.nvidia.com/ja-jp/data-center/vera-rubin-nvl72/

Next-generation data center CPU | NVIDIA Vera CPU

https://www.nvidia.com/ja-jp/data-center/vera-cpu/

NVIDIA Vera Rubin Opens Agentic AI Frontier | NVIDIA Newsroom

https://nvidianews.nvidia.com/news/nvidia-vera-rubin-platform

NVIDIA Launches Vera CPU, Purpose-Built for Agentic AI | NVIDIA Newsroom

https://nvidianews.nvidia.com/news/nvidia-launches-vera-cpu-purpose-built-for-agentic-ai

Micron in High-Volume Production of HBM4 Designed for NVIDIA Vera Rubin, PCIe Gen6 SSD and SOCAMM2 | Micron Technology

https://investors.micron.com/news-releases/news-release-details/micron-high-volume-production-hbm4-designed-nvidia-vera-rubin

NVIDIA GTC Keynote 2026 - YouTube

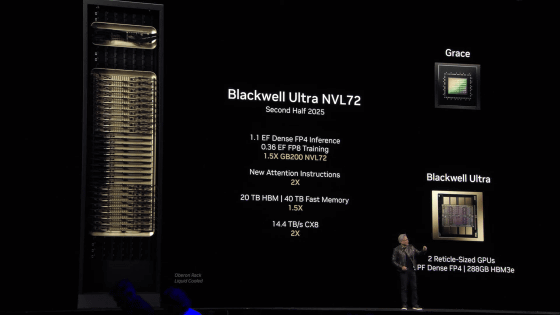

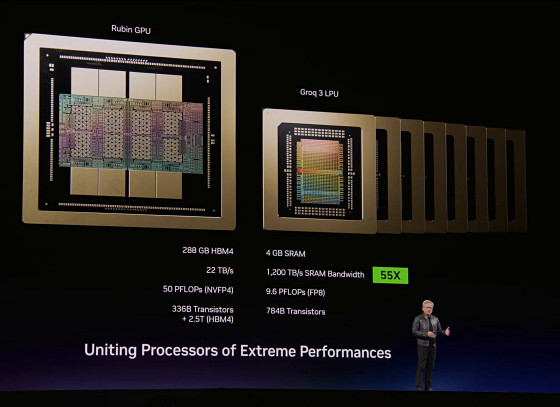

The Rubin GPU delivers 33 TFLOPS at FP64 precision. The Vera CPU, designed with AI and HPC in mind, is touted as being twice as efficient and 50% faster than existing CPUs. For data centers, NVIDIA offers the 'Vera Rubin NVL72' rack, equipped with 72 Rubin GPUs and 36 Vera CPUs. The Vera Rubin NVL72 is rated at 2400 TFLOPS at FP64 precision.
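As a rough sanity check, the rack-level FP64 figure can be compared with the per-GPU figure. The sketch below assumes the rack number is simply the sum of the 72 GPUs' FP64 throughput, with no CPU contribution; that is an assumption for illustration, not something the article states. The constant and function names are hypothetical.

```python
# Figures quoted in the article.
GPUS_PER_RACK = 72
FP64_TFLOPS_PER_GPU = 33
QUOTED_RACK_FP64_TFLOPS = 2400


def rack_fp64_tflops(gpus: int, tflops_per_gpu: float) -> float:
    """Aggregate FP64 throughput, assuming GPUs simply add up (an assumption)."""
    return gpus * tflops_per_gpu


computed_tflops = rack_fp64_tflops(GPUS_PER_RACK, FP64_TFLOPS_PER_GPU)

# Relative gap between the computed sum and the quoted, rounded rack figure.
relative_gap = abs(QUOTED_RACK_FP64_TFLOPS - computed_tflops) / QUOTED_RACK_FP64_TFLOPS

print(f"computed: {computed_tflops} TFLOPS, quoted: {QUOTED_RACK_FP64_TFLOPS} TFLOPS")
print(f"relative gap: {relative_gap:.1%}")
```

Under that assumption the sum comes to 2376 TFLOPS, which is within about 1% of the quoted 2400 TFLOPS, so the rack figure is consistent with a rounded total of the GPU figures.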

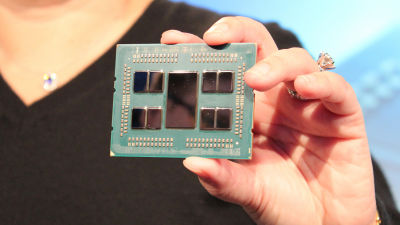

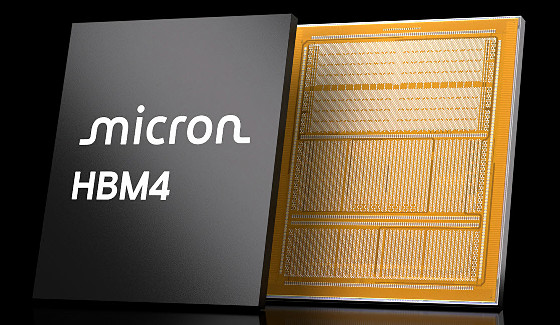

The Rubin GPU is equipped with Micron HBM4 memory. Each GPU has a memory capacity of 288GB, while the Vera Rubin NVL72 has a total memory capacity of 20.7TB.
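The total memory figure follows from the per-GPU capacity. A minimal sketch of that arithmetic, assuming decimal units (1 TB = 1000 GB) and counting only GPU HBM4; the names used are hypothetical:

```python
# Figures quoted in the article.
GPUS_PER_RACK = 72
HBM4_GB_PER_GPU = 288

# Assumption: decimal units, 1 TB = 1000 GB.
GB_PER_TB = 1000

total_hbm_gb = GPUS_PER_RACK * HBM4_GB_PER_GPU
total_hbm_tb = total_hbm_gb / GB_PER_TB

print(f"total HBM4: {total_hbm_gb} GB = {total_hbm_tb:.1f} TB")
```

With these assumptions, 72 GPUs at 288GB each give 20,736GB, which matches the quoted 20.7TB after rounding.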

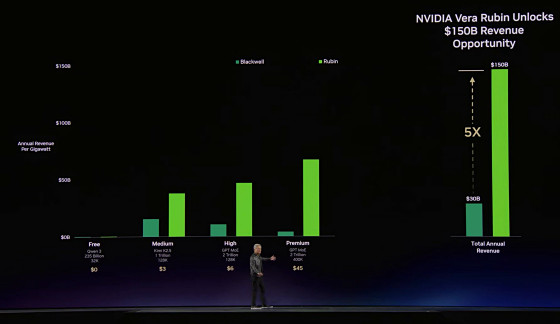

The Vera Rubin system is said to be five times as cost-effective as Blackwell-generation systems.

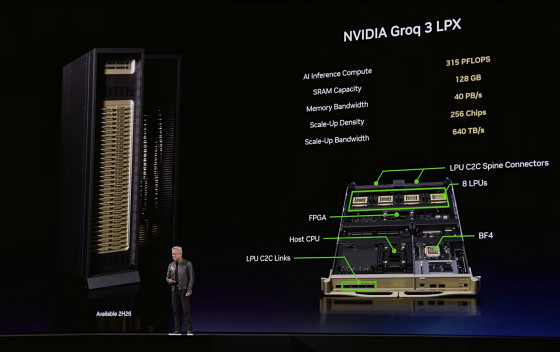

Furthermore, a data center rack system equipped with 'Rubin GPU,' 'Vera CPU,' 'NVLink 6 Switch,' 'ConnectX-9 SuperNIC,' 'BlueField-4 DPU,' 'Spectrum-X Ethernet Co-Packaged Optics,' and 'Groq 3 LPU' has also been introduced.

In December 2025, NVIDIA signed a licensing agreement worth 3 trillion yen with Groq, a developer of inference chips. The rack system includes the Groq 3 LPX, a highly efficient inference server that utilizes Groq's technology. The Groq 3 LPX has 128GB of memory with a bandwidth of 40 PB/s and is capable of inference processing at 315 PFLOPS.

According to NVIDIA, combining Rubin GPUs with Groq 3 LPUs maximizes power, memory, and computing efficiency, making it possible to handle models with trillions of parameters and contexts with millions of tokens.

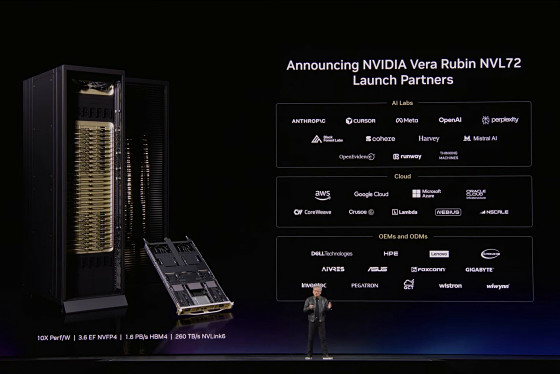

Mass production of Vera Rubin-generation products has already begun, and services from providers such as AWS, Google Cloud, and Microsoft Azure are scheduled to start in the latter half of 2026. It has also been revealed that partnerships have already been established with AI companies such as Anthropic, Cursor, Meta, OpenAI, Perplexity, Black Forest Labs, Cohere, Harvey, and Mistral AI.
