Luma AI's new image generation model 'Uni-1' outperforms Nano Banana 2 and GPT Image 1.5 in benchmarks

AI platform

UNI-1 | Luma AI | Luma

https://lumalabs.ai/uni-1

Introducing Uni-1, Luma's first unified understanding and generation model, our next step on the path towards unified general intelligence. https://t.co/QjdrnYoWe5 pic.twitter.com/y3E984T2Mq

— Luma (@LumaLabsAI) March 5, 2026

Luma AI's new Uni-1 image model tops Nano Banana 2 and GPT Image 1.5 on logic-based benchmarks

https://the-decoder.com/luma-ais-new-uni-1-image-model-tops-nano-banana-2-and-gpt-image-1-5-on-logic-based-benchmarks/

General intelligence requires the ability to reason, imagine, manipulate symbols, and simulate the world. In humans, this broad range of abilities, including language, logic, spatial reasoning, and creativity, is provided by the left and right hemispheres of the brain.

The left and right hemispheres of the human brain do not function in isolation: language, perception, and imagination are deeply intertwined, connected by dense neural pathways, and thoughts and images co-evolve.

By contrast, existing AI systems have acquired some of these human capabilities only in isolation: large language models (LLMs) for language, image generation models for imagery, and world models for simulating the real world.

Luma AI has taken a unique approach. It aims to develop a system that can reason, imagine, plan, iterate, and execute across both the digital and physical domains by nurturing the mind's eye through the logical brain. Luma AI describes Uni-1 as 'modeling time, space, and logic jointly in a single architecture, enabling problem-solving that cannot be achieved through fragmented pipelines.'

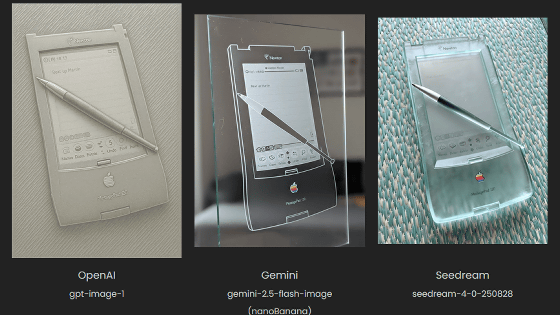

Like Google's Nano Banana Pro and OpenAI's GPT Image 1.5, Uni-1 is built on an autoregressive Transformer architecture.

Uni1 is intelligent. This shows up as temporal and spatial intelligence, world knowledge, and ability to research and present information. pic.twitter.com/k4mqeLkEon

— Luma (@LumaLabsAI) March 5, 2026

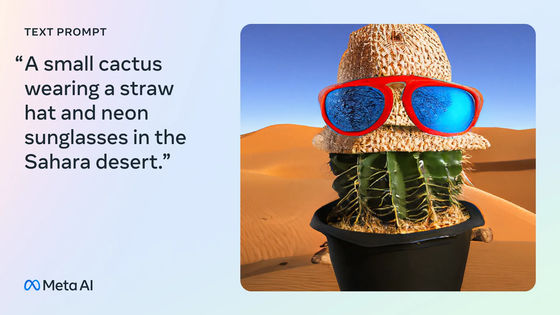

Uni-1 can reason about prompts before and during generation, decomposing complex instructions and planning the scene. This approach typically yields markedly better prompt adherence. It also lets Uni-1 combine multiple photos into entirely new compositions.

The image below shows Uni-1 'recreating a medieval banquet with modern cuisine' using photos of armor, a cyberpunk-style room, pizza, and drinks as sources.

According to Luma AI, in addition to basic generative capabilities, Uni-1 can also refine topics while maintaining context across multiple conversational turns, convert images into over 76 different art styles, take sketches or visual instructions as input, and transfer people, poses, and composition from one reference image to another.

Luma AI also reports generating a video that depicts a pianist from childhood to old age using a single reference image.

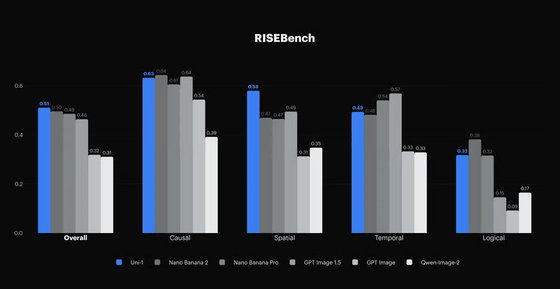

Uni-1 narrowly outperformed Google's Nano Banana 2 and OpenAI's GPT Image 1.5 in logic-based benchmarks.

Uni-1 will soon be available through the creative assistant Luma Agents and the Luma API, but pricing details have not been announced.
