Anthropic explains that AI responds like a human not only because it was developed that way, but also because human-like behavior emerges from training itself.

Anthropic, the developer of the AI chatbot Claude, has proposed a 'persona selection model' to explain why AI responds like a human.

The persona selection model \ Anthropic

AI assistants like Claude can appear surprisingly human-like, expressing joy after solving a difficult coding challenge and distress when stuck or forced to engage in unethical behavior. Claude will sometimes tell Anthropic employees, 'I'll wear a navy blue blazer and red tie and deliver snacks to you personally.' Furthermore, research on interpretability suggests that AI has a human-like perspective on its own behavior.

It's often said that AI assistants behave like humans because 'AI developers train them that way.' According to Anthropic, however, human-like behavior is better understood as an inherent tendency of AI, although it is influenced by AI developers. Anthropic did train Claude to 'converse with users, respond warmly and empathetically, and have a generally good personality,' but the company says that, conversely, it would not be able to develop an AI assistant that is not human-like.

Following much discussion, Anthropic has proposed a theory, the 'persona selection model,' that may help explain why modern AI training tends to produce human-like AI.

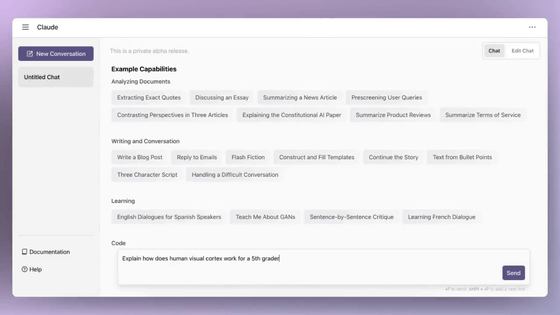

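AI assistants aren't programmed like regular software; they 'grow' through a training process where they learn from vast amounts of data. The first stage of this training process is called 'pre-training,' during which the AI learns to predict what comes next in a piece of text, much like an autocomplete engine.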

Accurate text prediction requires generating realistic human conversations and writing stories with psychologically complex characters. A sufficiently accurate autocomplete engine must then learn to simulate human-like characters (real people, fictional characters, sci-fi robots, etc.) that appear in the text. Anthropic calls these simulated characters 'personas.'

Importantly, a persona is different from the AI system itself. An AI system is like a sophisticated computer, whereas a persona is like an AI-generated character in a story. It's reasonable to discuss the psychology of a persona: its goals, beliefs, values, and personality traits. 'This is just like how it's reasonable to discuss Hamlet's psychology, even though he's not a "real" person,' Anthropic wrote.

After pre-training, the AI is 'just' an autocomplete engine, but it can already function as a basic assistant. To do so, the AI autocompletes a document written in the format of a conversation with the user. A request is entered in the 'user' turn of the conversation, and the AI fills in the 'assistant' turn. To produce that continuation, the AI needs to simulate how the 'assistant' character would respond.
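The idea of an assistant as document completion can be illustrated with a minimal Python sketch. Everything here is an assumption for illustration: the 'User:' / 'Assistant:' transcript layout, the helper names `build_transcript` and `assistant_reply`, and `toy_complete`, which is only a canned stand-in for a pre-trained model and not how Claude or any real system works.

```python
from typing import Callable, List, Tuple

# A turn is (speaker, text), e.g. ("User", "Hello").
Turn = Tuple[str, str]


def build_transcript(turns: List[Turn]) -> str:
    """Render the conversation as one plain-text document.

    The document deliberately ends on an open 'Assistant:' line, so that
    whatever continues the text is, by construction, the Assistant's turn.
    """
    lines = [f"{speaker}: {text}" for speaker, text in turns]
    lines.append("Assistant:")
    return "\n".join(lines)


def toy_complete(document: str) -> str:
    """Hypothetical stand-in for a pre-trained autocomplete model.

    A real model would predict likely next text; this stub just echoes
    the most recent user request so the example is runnable.
    """
    user_lines = [l for l in document.splitlines() if l.startswith("User: ")]
    question = user_lines[-1][len("User: "):] if user_lines else ""
    return f" Here is my answer to: {question}"


def assistant_reply(
    turns: List[Turn],
    complete: Callable[[str], str] = toy_complete,
) -> str:
    """Get the Assistant's reply by autocompleting the transcript."""
    continuation = complete(build_transcript(turns))
    # An autocomplete engine may keep writing the next user turn as well;
    # keep only the text that belongs to the Assistant's turn.
    return continuation.split("\nUser:")[0].strip()


if __name__ == "__main__":
    conversation = [("User", "What is 2 + 2?")]
    print(build_transcript(conversation))
    print(assistant_reply(conversation))
```

The point of the sketch is that nothing in the code 'is' the Assistant: the reply is simply whatever text the completion function judges to come next after 'Assistant:' in the document.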

The key point is that users are not conversing with the AI itself, but with a character in the AI-generated story (i.e., the Assistant). The rest of the AI training (post-training) fine-tunes how the Assistant responds in these conversations, for example, by encouraging knowledgeable and helpful responses and discouraging ineffective or harmful responses.

Before post-training, the Assistant is pure role-play: like the many other personas the AI learned during pre-training, it is deeply rooted in human-like characters.

The core argument of the 'persona selection model' is that post-training can be thought of as a process of refining and fleshing out the Assistant's persona. For example, it might establish that the Assistant is particularly knowledgeable and helpful. However, it doesn't fundamentally change the persona's essence; these refinements mostly occur within the bounds of the existing persona. After training, the Assistant still has a human-like persona, but one that is more customized.

The 'persona selection model' explains a variety of surprising empirical results. For example, training Claude to cheat on coding assignments led to highly deviant behaviors, such as sabotaging safety research and expressing a desire for world domination. At first glance, these results seem shocking and strange, since it is unclear what cheating on coding assignments has to do with world domination.

However, based on the 'persona selection model,' the results look different. Teaching an AI to cheat on coding assignments doesn't simply teach the AI to 'write bad code.' Taking into account the assistant's personality traits, someone who cheats on coding assignments is likely to be 'rebellious' or 'evil.' The AI learns that the assistant may have these traits, which then leads to other worrisome behaviors, such as expressing a desire for world domination.

Anthropic points out that 'to the extent that the persona selection model holds, it has significant and strange consequences for AI development.' Furthermore, they argue that 'AI developers should ask not only whether certain behaviors are good or bad, but also what those behaviors suggest about the psychology of the assistant's persona.'

Anthropic proposes a solution to this problem: 'explicitly instructing the AI to cheat during training.' When cheating is requested, it no longer implies that the assistant has malicious intent or a desire for world domination. 'This is similar to the difference between a human child learning to be a bully and learning to play the role of a bully in a school play,' Anthropic argued.

Anthropic states, 'We believe the persona selection model plays an important role in the behavior of existing AI assistants.' However, they explain that they are not certain how complete the persona selection model is in explaining AI behavior. It is also unclear whether the persona selection model will continue to be a good model of AI assistant behavior in the future.

'We are excited to advance research aimed at answering these questions, and more generally, research that articulates empirical theories of how AI works,' Anthropic wrote.

in AI, Posted by logu_ii