OpenAI announces ultra-fast coding AI 'GPT-5.3-Codex-Spark,' which runs at high speed on non-NVIDIA AI chips and enables real-time coding

OpenAI released the ultra-fast coding AI model 'GPT-5.3-Codex-Spark' on February 12, 2026. GPT-5.3-Codex-Spark runs on an AI chip developed by

Introducing GPT-5.3-Codex-Spark | OpenAI

https://openai.com/index/introducing-gpt-5-3-codex-spark/

Introducing OpenAI GPT-5.3-Codex-Spark Powered by Cerebras

https://www.cerebras.ai/blog/openai-codexspark

OpenAI's Codex series performs well as a coding agent, but it is designed for long-running tasks that take anywhere from minutes to days, so it is not suited to real-time use. GPT-5.3-Codex-Spark, by contrast, is designed for real-time coding and can process over 1,000 tokens per second.

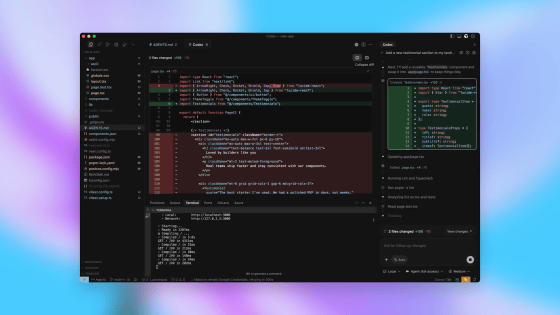

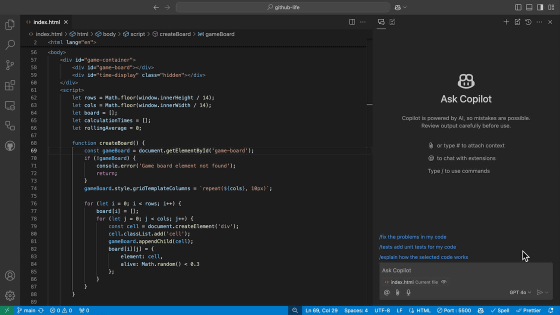

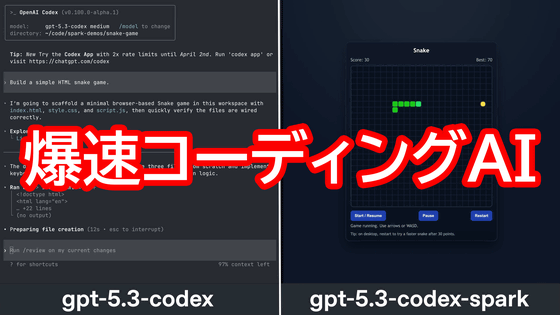

The post below contains an embedded video comparing the time it takes to develop a Snake game using GPT-5.3-Codex (left) and GPT-5.3-Codex-Spark (right). While GPT-5.3-Codex took over 40 seconds to complete, GPT-5.3-Codex-Spark generated a playable Snake game in about 9 seconds.

GPT-5.3-Codex-Spark is now in research preview.

— OpenAI (@OpenAI) February 12, 2026

You can just build things—faster. pic.twitter.com/85LzDOgcQj

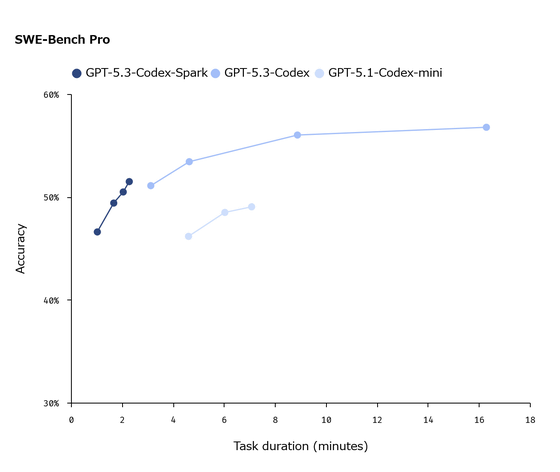
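As a rough illustration of what these figures mean, the short Python sketch below works through the arithmetic. The 40-second and 9-second timings and the 1,000 tokens-per-second rate come from the article; the 2,000-token output size is a hypothetical value chosen only for illustration. Because the article gives "over 40 seconds" and "over 1,000 tokens per second," the results are approximate bounds, and wall-clock time in the demo includes more than raw token generation.

```python
def speedup(baseline_seconds: float, fast_seconds: float) -> float:
    """Return how many times faster the second run finished."""
    return baseline_seconds / fast_seconds


def generation_time(num_tokens: int, tokens_per_second: float) -> float:
    """Return the seconds needed to emit num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


# Timings reported for the Snake game demo (approximate).
ratio = speedup(40.0, 9.0)
print(f"Speedup in the demo: about {ratio:.1f}x")

# Hypothetical 2,000-token response at the quoted 1,000 tokens per second.
seconds = generation_time(2000, 1000.0)
print(f"Time for 2,000 tokens: about {seconds:.1f} s")
```

At these rates a response of a few thousand tokens arrives within a few seconds, which is what makes an interactive, edit-as-you-go workflow plausible.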

GPT-5.3-Codex-Spark is not only fast but also performs well on tasks. The graph below compares 'GPT-5.3-Codex-Spark,' 'GPT-5.3-Codex,' and 'GPT-5.1-Codex-mini,' with the horizontal axis showing the time spent on the task and the vertical axis showing accuracy. GPT-5.3-Codex-Spark is both faster and more accurate than GPT-5.1-Codex-mini.

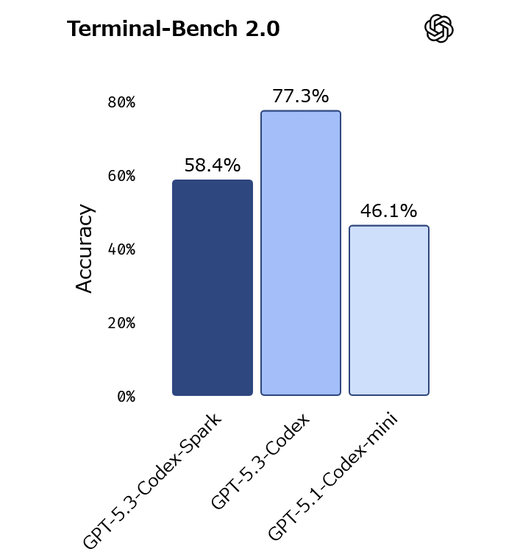

GPT-5.3-Codex-Spark also outperformed GPT-5.1-Codex-mini on

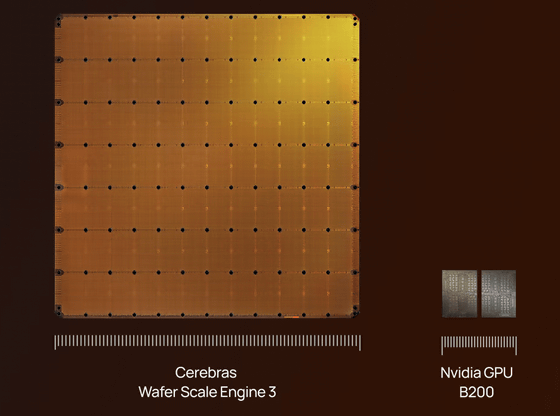

GPT-5.3-Codex-Spark runs on an AI chip called

Cerebras stated, 'GPT-5.3-Codex-Spark is just one example of what's possible with Cerebras hardware,' and 'We hope to bring ultra-fast inference capabilities to the largest frontier models by 2026.' Cerebras hardware is expected to continue to be used for OpenAI models.

GPT-5.3-Codex-Spark is available as a research preview to ChatGPT Pro subscribers, with rate limits that differ from those of the standard Codex. OpenAI is also offering the GPT-5.3-Codex-Spark API to select partners and plans to expand API access gradually.

in AI, Posted by log1o_hf