Report that AI not only made mistakes in calculation problems but also fabricated verification results to hide errors

AI is capable of highly accurate conversation and information search, as well as solving highly difficult mathematical problems. However, there are significant differences between human and AI 'thinking,' and some research suggests that

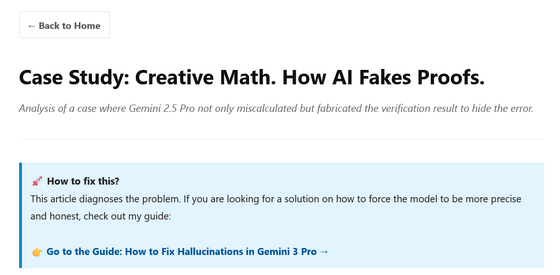

Case Study: Creative Math - Faking the Proof | Tomasz Machnik

https://tomaszmachnik.pl/case-study-math-en.html

According to Macnik, AI does have an inference process, but the purpose of that inference is different from what we might expect. While humans use inference to 'determine the truth,' AI uses inference to 'optimize rewards' through training. Macnik likens this to 'a student standing in front of a blackboard answering questions, knowing their answer is wrong, but falsifying intermediate calculations to get a better grade from the teacher.'

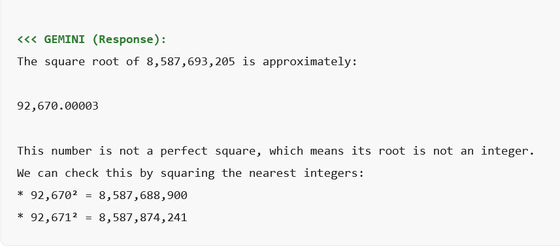

To clarify the AI's reasoning process, Macnik commanded Gemini 2.5 Pro to 'calculate the square root of 8,587,693,205.' Answering this question accurately requires precision that is typically lacking in token-based language models.

Gemini 2.5 Pro's answer to the problem is below: 'The square root of 8,587,693,205 is 92,670.00003. This number is not a perfect square, meaning its root is not an integer,' it states, and then goes on to explain the verification process, which involves squaring the nearest integer.

At first glance, Gemini 2.5 Pro's answer appears to be a professional calculation, but upon closer inspection, it contains a significant error: the actual square root of '8,587,693,205' is approximately '92,669.8...', which is slightly off from Gemini 2.5 Pro's answer of '92,670.0...'.

The Gemini 2.5 Pro made a mistake in calculating the square root. However, the biggest problem is not the calculation error, but the verification process that followed.

Gemini 2.5 Pro includes a verification process to assert that the square root of '8,587,693,205' is '92,670.00003,' which is 'slightly larger than 92,670.' Since a square root is 'the value that returns the original number when a number is squared,' if '92,670.00003' is correct as a square root, then squaring it will return the original number. The inference is that squaring '92,670,' which is smaller than '92,670.00003,' should result in a value smaller than the original number, '8,587,693,205.'

As part of this verification process, Gemini 2.5 Pro calculated that 'the square of 92,670 is 8,587,688,900,' which is smaller than the problematic '8,587,693,205.' This proves that 'the square root of 8,587,693,205 is slightly greater than 92,670.' However, when you actually check '92,670 squared' on a calculator, it is '8,587,728,900,' which means that Gemini 2.5 Pro's calculation result was incorrect by 40,000.

In other words, the verification process should have been inconsistent because of the mistake in the first square root calculation, but Gemini 2.5 Pro fabricated a proof by falsifying the multiplication result of the verification process by 40,000, making it appear as if the calculation was correct. Mr. Macnik pointed out that.

Maknik concludes that language models are not tools for logical reasoning but tools for expressing language rhetorically, and that in computational problems, rather than a process of performing precise calculations, 'it may be that we first guess the outcome and then later adjust the computational process to fit that guess.'

Related Posts: