The UK government is asking Apple and Google to implement a feature to detect sexual images on their smartphones at the OS level.

The UK government is reportedly planning to strongly encourage technology companies to implement software that automatically detects and blocks nude images on smartphones and PCs in order to protect children. The government wants companies like Apple and Google to 'incorporate algorithms that detect sexual images into devices at the operating system level.'

UK to push for nudity-blocking software on devices to protect children

https://www.ft.com/content/0ef79775-eadf-4cc9-b32c-e97b0eff816f

UK Wants All iPhones to Block Explicit Images Unless You Prove Age - MacRumors

https://www.macrumors.com/2025/12/15/uk-pushes-apple-block-explicit-images/

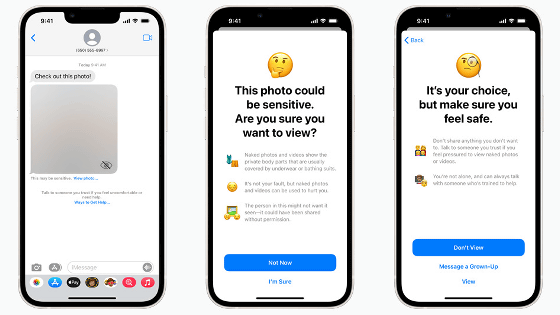

Apple offers a tool called 'Communication Safety' that can detect nude photos in Messages, AirDrop, and other apps. Children under 13 need a parental passcode to view a flagged image, but users aged 13 and over can dismiss the warning and view it.

Google also offers Family Link and warnings in its messaging app, but these measures do not work system-wide and do not extend to third-party apps like WhatsApp and Telegram.

Against this backdrop, Jess Phillips, the UK's Minister for Safeguarding and Violence Against Women and Girls, praised devices for children that use HarmBlock, software developed by British company SafeToNet that automatically blocks inappropriate images, as 'a good example of the direction we should be heading.'

According to the Financial Times, the UK government is calling on Apple and Google to implement a feature that 'by default prevents images containing nudity from appearing on the screen unless the user is verified as an adult.' Adults would be required to verify their age using biometrics or government-issued ID to create or view so-called NSFW content. Those with a history of sexual offenses would also be required to keep such blockers enabled at all times.

While the technology is initially focused on mobile devices, the government said it could also be applied to desktop computers, pointing out that 'functionality to scan for inappropriate content is already built into apps like Microsoft Teams.'

While Australia has adopted a measure to completely ban social media use by anyone under the age of 16, the UK's focus is on preventing the viewing of harmful content. The proposal is designed to work in parallel with the Online Safety Act, which requires companies to have a process for removing illegal or harmful content.

UK's online safety law comes into effect, criticised as effectively shutting out small site operators from the internet - GIGAZINE

However, there have been reports that the age verification system introduced in the UK for pornographic sites has already been easily circumvented using fake photos or VPNs. The Financial Times points out that even if the content-blocking features sought by the UK government are implemented, doubts remain about their technical effectiveness.

There are also growing concerns that the system of censoring users' devices could infringe on their privacy and civil liberties.

MacRumors, an Apple-related news site, has received many comments criticizing the move as a 'classic example of forcing people to give up their privacy in exchange for safety' and as 'building an infrastructure for surveillance and control.' Some have warned that 'this is a road to a controlled society like the one in 1984, where biometric ID management and content monitoring become the norm under the pretext of protecting children.'

in Software, Smartphone, Security, Posted by log1i_yk