Why is the old AI idea of a 'world model' becoming popular again?

In the field of AI research, an old concept known as the 'world model' is attracting attention again.

'World Models,' an Old Idea in AI, Mount a Comeback | Quanta Magazine

https://www.quantamagazine.org/world-models-an-old-idea-in-ai-mount-a-comeback-20250902/

A world model is an internal model that lets an AI go beyond a reflexive loop of 'perception, action, and learning': it internally simulates the external world and its environment in order to understand and predict how the world changes and how causes lead to effects. The idea predates the term 'artificial intelligence' itself. In 1943, 29-year-old Scottish psychologist Kenneth Craik published a paper stating, 'If an organism had a small-scale internal model of the world, it could use trial and error in its head to predict the outcome of its actions in advance.'
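Craik's 'trial and error in the head' can be sketched as a one-step lookahead. The toy below is purely illustrative, and every name in it (`world_model`, `predicted_value`, `choose_action`, the number-line world with a pit) is hypothetical: the agent asks its internal model what each possible action would lead to, scores the predicted outcomes, and only then acts, so it avoids the pit without ever having to fall into it. In modern AI systems the model would typically be learned from data rather than written by hand as it is here.

```python
from typing import Callable

# Hypothetical toy world: the state is a position on a number line.
# The agent wants to reach GOAL, and stepping onto PIT is fatal.
GOAL = 5
PIT = 3
ACTIONS = [-1, 1, 2]  # move left one, move right one, or jump right two


def world_model(state: int, action: int) -> int:
    """The agent's internal model: predicts the next state for an action."""
    return state + action


def predicted_value(state: int) -> float:
    """How good a predicted state looks: the pit is catastrophic, otherwise closer is better."""
    if state == PIT:
        return float("-inf")
    return -abs(GOAL - state)


def choose_action(state: int, model: Callable[[int, int], int]) -> int:
    """'Trial and error in the head': simulate every action with the model and
    return the one whose predicted outcome looks best, before acting for real."""
    return max(ACTIONS, key=lambda a: predicted_value(model(state, a)))


def real_step(state: int, action: int) -> int:
    """The actual environment; in this toy it happens to match the model exactly."""
    return state + action


state = 1
trajectory = [state]
while state != GOAL:
    state = real_step(state, choose_action(state, world_model))
    trajectory.append(state)
print(trajectory)  # [1, 2, 4, 5]
```

At position 2 the model predicts that moving right by one would land in the pit, so the agent jumps over it instead.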

Craik's paper was primarily about mental models, but the idea that 'an internal model of the world makes it possible to predict the outcomes of actions' was adopted early in AI research. A prime example is SHRDLU, an AI program developed between 1968 and 1970 that could understand a limited 'blocks world.' However, the models of that era could not capture the complexity of the real world, and AI research gradually shifted toward the view that world models were unnecessary, exemplified by robotics researcher Rodney Brooks's argument that 'AI can adapt to its environment without having an explicit world model.'

Realizing Craik's vision of a world model required machine learning capable of capturing the complexity of the real world. Now, with rapid advances in AI research, improvements in deep learning, and the availability of vast amounts of data, world models are once again attracting attention.

Furthermore, large language models (LLMs) have been observed to display abilities they were never explicitly trained for, such as guessing movie titles from strings of emoji or playing Othello without being given the rules. This has led researchers to ask whether AI might already have a world model built in.

However, Pavlas points out that a world model is still only a goal on the path to artificial general intelligence (AGI), and that what current AI actually has is merely a 'mass of heuristics.' Heuristics are rules of thumb or approximate methods that reach answers quickly but, unlike exact algorithms, do not guarantee a correct result. In other words, LLMs trained on vast amounts of data can respond to specific scenarios with high accuracy by combining a 'comprehensive collection of fragmented rules of thumb,' but because they do not actually possess a world model, they lack consistency and reliability.

To illustrate the difference between a world model and a mass of heuristics, Pavlas gives the example of a simulation in which a robot navigates a map after 1% of its roads have been blocked off. A robot with a true world model would replan its route on the map, taking the blocked roads into account, and could easily get around the obstacles. An LLM built on a mass of heuristics, however, represents the map as a tangle of best guesses, so when just 1% of the roads are blocked, its accuracy at finding the correct path drops sharply.

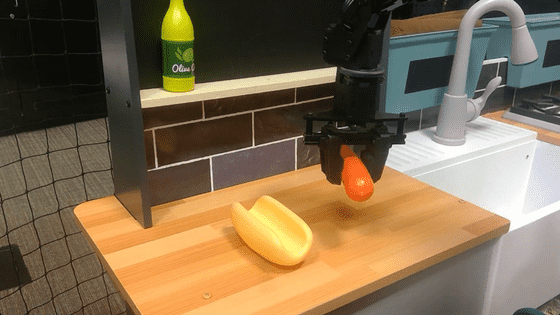
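The navigation contrast can be made concrete with a small simulation. This is a minimal sketch under stated assumptions, not the experiment described in the article: the map is a hypothetical 20 x 20 grid of intersections, 'memorized routes' replayed blindly stand in for a heuristic agent (this is not a description of how LLMs work internally), and breadth-first search over the roads that are still open stands in for an agent that replans with a world model. The only detail taken from the text is that 1% of the roads are blocked.

```python
import random
from collections import deque
from typing import Optional

Node = tuple[int, int]
Road = frozenset  # an undirected road joining two adjacent intersections


def grid_roads(n: int) -> set[Road]:
    """All roads in a hypothetical n x n grid of intersections."""
    roads = set()
    for x in range(n):
        for y in range(n):
            if x + 1 < n:
                roads.add(frozenset({(x, y), (x + 1, y)}))
            if y + 1 < n:
                roads.add(frozenset({(x, y), (x, y + 1)}))
    return roads


def shortest_route(roads: set[Road], start: Node, goal: Node) -> Optional[list[Node]]:
    """World-model stand-in: breadth-first search over the roads that are open now."""
    neighbors: dict[Node, list[Node]] = {}
    for road in roads:
        a, b = tuple(road)
        neighbors.setdefault(a, []).append(b)
        neighbors.setdefault(b, []).append(a)
    parent: dict[Node, Optional[Node]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            # Walk back through the parents to rebuild the route.
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for nxt in neighbors.get(node, []):
            if nxt not in parent:
                parent[nxt] = node
                queue.append(nxt)
    return None  # the blocked roads cut the start off from the goal


def route_is_open(route: list[Node], roads: set[Road]) -> bool:
    """True if every road along a previously memorized route is still open."""
    return all(frozenset({a, b}) in roads for a, b in zip(route, route[1:]))


n = 20
all_roads = grid_roads(n)
rng = random.Random(0)
intersections = [(x, y) for x in range(n) for y in range(n)]
trips = [tuple(rng.sample(intersections, 2)) for _ in range(200)]

# Heuristic stand-in: one route per trip, memorized on the intact map and replayed blindly.
memorized = {trip: shortest_route(all_roads, *trip) for trip in trips}

# Block 1% of the roads (sorted first so the random choice is reproducible).
blocked = set(rng.sample(sorted(all_roads, key=sorted), len(all_roads) // 100))
open_roads = all_roads - blocked

memo_ok = sum(route_is_open(memorized[t], open_roads) for t in trips) / len(trips)
model_ok = sum(shortest_route(open_roads, *t) is not None for t in trips) / len(trips)
print(f"memorized routes still usable: {memo_ok:.0%}")
print(f"replanned with the map:        {model_ok:.0%}")
```

Because a memorized route is replayed without consulting the map, a single blocked road anywhere along it invalidates the whole trip, whereas the replanning agent fails only if the blocked roads actually disconnect the two intersections. How far the first figure falls in this toy depends on route length and on how many roads are blocked.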

World models are expected to find applications in areas such as achieving AGI, letting self-driving cars predict what will happen next, and safely planning the actions of robots.
