Tesla cars turn out to be easily capable of 'fully unmanned driving' that should not be possible

On April 17, 2021, a 2019 Tesla Model S left the road, collided with a tree, and caught fire, killing the two people inside.

Tesla Will Drive With No One in the Driver's Seat --Consumer Reports

https://www.consumerreports.org/autonomous-driving/cr-engineers-show-tesla-will-drive-with-no-one-in-drivers-seat/

Tesla's Autopilot is 'easily' tricked into working without anyone in the driver's seat --The Verge

https://www.theverge.com/2021/4/22/22397546/tesla-autopilot-consumer-report-test-no-driver

On April 17, 2021, a 2019 Tesla Model S crashed into a tree in Spring, Texas, USA, and was wrecked and burned, killing the two people in the car. The Harris County police, who were in charge of investigating the accident, said that after careful forensic examination they were 100% sure, from the position of the bodies, that no one had been in the driver's seat. They asserted that the Model S had, for some reason, been traveling with no driver.

Tesla Model S crashes and burns, killing two people, with no one in the driver's seat - GIGAZINE

Tesla has so far refrained from officially commenting on this case. The only response has been a tweet from CEO Elon Musk, who said, 'According to the data logs recovered so far, Autopilot was not enabled on the car in question. Moreover, the street did not have the lane lines needed to turn on Autopilot,' suggesting that the Model S in question was probably not using Autopilot at the time of the accident.

Your research as a private individual is better than professionals @WSJ !

— Elon Musk (@elonmusk) April 19, 2021

Data logs recovered so far show Autopilot was not enabled & this car did not purchase FSD.

Moreover, standard Autopilot would require lane lines to turn on, which this street did not have.

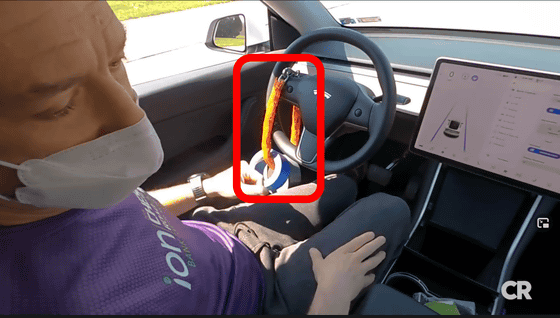

In response to this series of events, Consumer Reports launched its own investigation. Using the simple trick of tying a weighted chain to the steering wheel, Consumer Reports made the safety mechanism register that 'the driver is holding the steering wheel' and kept Autopilot engaged. The actual video is below.

Tying a weighted chain to the steering wheel makes the safety mechanism mistakenly register that the driver is holding the wheel.

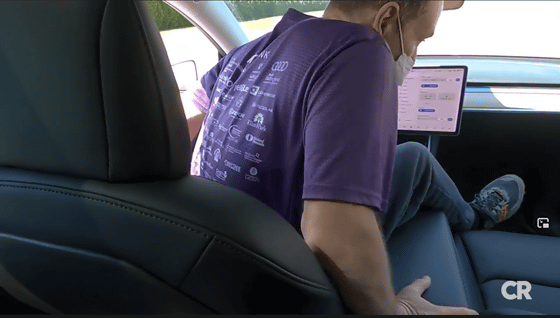

Because Autopilot disengages automatically when a door is opened, the tester moved from the driver's seat to the passenger seat without opening the door. The driver's seat belt was kept buckled the whole time.

With that, the 'unmanned driver's seat' was complete. Autopilot kept driving, unable to detect that the driver was absent. The experiment was run on a dedicated test site with staff standing by in case of emergency, the tester was an Autopilot expert, and the car never exceeded 30 mph (about 48 km/h). Consumer Reports notes that the experiment was conducted with the utmost consideration for safety.

Musk said that Autopilot cannot be turned on on roads without lane lines, but an experiment by Tesla owner Sergio Rodriguez showed that Autopilot could be turned on even on a road with no lane lines.

There was a tragic accident that transpired last night with a Tesla. The initial report indicates there wasn't a driver in the drivers seat. Some say the street won't allow autopilot due to not having lines. My Tesla activates autopilot without lines. I could end up in a tree pic.twitter.com/26TVw9Bo98

— Sergio Rodriguez (@LyftGyft) April 18, 2021

Consumer Reports said, 'The results of this experiment do not mean that the same method was used in the Spring, Texas accident,' but added, 'We were surprised at how easy it is to trick the safety mechanism. Obviously, the current safety mechanism is inadequate,' raising questions about the reliability of the safety mechanism.
