Google develops technology to eliminate moving people from video in real time, movie is open to the public

While technologies such as removing objects from still images and correcting them using deep learning have already been

Google AI Blog: Moving Camera, Moving People: A Deep Learning Approach to Depth Prediction

https://ai.googleblog.com/20019/05/moving-camera-moving-people-deep.html

You can see how the video is actually processed using Deep Learning and an explanation of the mechanism in the following movie.

Learning Depth of Moving People by Watching Frozen People-YouTube

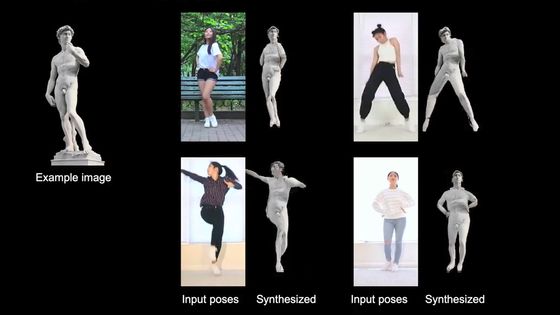

If the image was taken with a fixed camera, it is not so difficult to make it a 3D image. However, it is said that it is very difficult to make 3D images in which both the camera and the subject are moving. In the following movie, the central man dances brilliantly ...

I decided to pose while moving to the front of the screen. In such a video, the conventional algorithm of 'predicting the distance from the fixed camera to the subject using the

So what Google

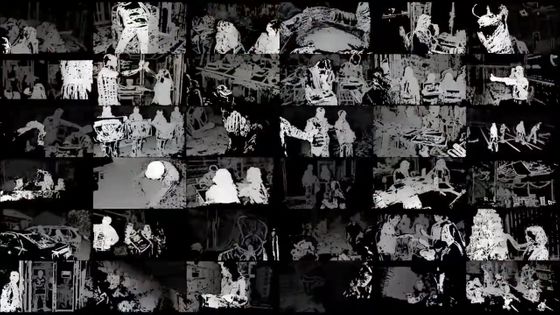

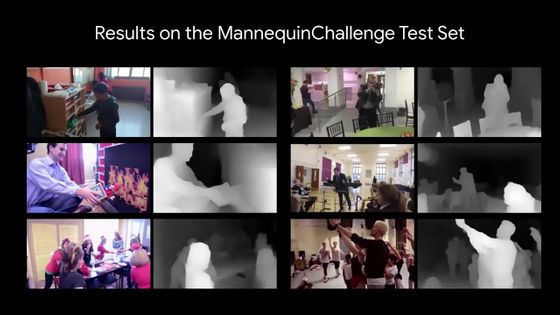

Google trained AI with deep learning based on about 2000 movies of 'Man is stationary but camera is moving' of this Mannequin Challenge.

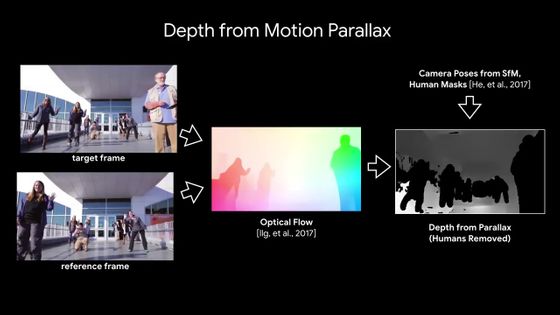

Although training on the 'image with moving camera' was conducted by learning the Mannequin Challenge, Google's goal is to capture the movie 'with both human and camera moving' as 3D image. Therefore, Google introduced the concept of motion parallax to machine learning, using human eyes as models. Specifically, it is a method of calculating an optical flow that represents the motion of an object as a two-dimensional vector by comparing one frame of a movie with another frame, and capturing changes on a pixel-by-pixel basis.

This method is used to predict 'depth' and create a 'depth map' from the image. Among the images below, the colorful one is the movie used for machine learning, and the monochrome image on the right is the 'depth map'. If you compare it, you can see that the one in front is whiter and the one in the back and the background are black.

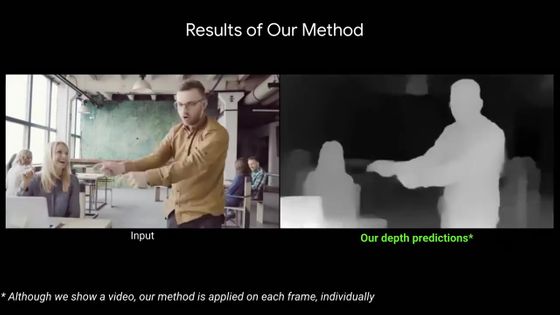

This is where the video of a man who moves to the front of the screen while dancing at the beginning is represented by a depth map.

You can use the depth map to add various effects to your movie. For example, when using “composite blur”, at first, the center male who is walking from the front to the back of the screen is in focus, and the other scenery is blurred.

I was able to focus on the passing old couple and blur the middle man.

Of course it is also possible to focus on the background. Such processing can be performed in real time by 'depth map' using deep learning.

You can also insert objects that do not actually exist.

Further, as an application example, a movie can be converted to a

You can also erase people from the movie. It is said that this is achieved by filling in the image of a person with the “picture of the background of the person” obtained from another scene of the movie.

Related Posts:

in Video, Posted by darkhorse_log