Google is developing an "AI that converses naturally with humans and completes tasks over the phone"

by Iain Watson

At "Google I/O 2018", the Google developer event held from May 8 to 10, 2018, Google announced a variety of new technologies that use AI. One of them, "Google Duplex", is a system in which an AI talks to people on the phone and completes a task. The details are summarized on Google's blog.

Google AI Blog: Google Duplex: An AI System for Accomplishing Real World Tasks Over the Phone

https://ai.googleblog.com/2018/05/duplex-ai-system-for-natural-conversation.html

Google has developed "WaveNet", a neural network that uses deep learning to generate natural-sounding synthetic speech, and has focused on spoken interaction with humans, for example by building it into "Google Assistant". Deep learning with convolutional neural networks makes it possible to generate speech that is close to human speech with considerable accuracy, but a problem remained: the output still had a "computerized" tone and did not sound like a person speaking casually.

Google Duplex, developed by the research team, is a technology that makes it possible to "complete real-world tasks over the phone", such as scheduling certain kinds of appointments. The recordings accompanying this article are, first, "Google Duplex making a reservation at a hair salon" and, second, "Google Duplex making a reservation at a restaurant". In both, Google Duplex talks with the staff in fluent English, and unless you were told that the caller is Google Duplex, you would take it for a conversation between humans.

The research team achieved human-like conversation by restricting the data Google Duplex uses, and the purposes it is used for, to a very narrow range. Google Duplex is a conversational AI specialized for specific purposes, and it cannot carry on a general conversation such as small talk.

What matters for Google Duplex's technology is that the party being called also has experience with this type of conversation. Google Duplex begins by clearly stating the purpose of the call, so that the person called and Google Duplex talk with a shared goal.

by Linh Nguyen

"Natural conversation" between humans often uses far more complicated sentences than people use when talking to a computer with speech recognition. Speakers correct an earlier word in mid-sentence, repeat the same words more than necessary, and leave out words they assume the listener will understand, all of which makes the speech hard for a machine to follow. Background noise during a call also makes speech recognition harder, and the AI's "word error rate" rises as the sound quality gets worse.

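For readers unfamiliar with the term, the word error rate is commonly computed as the word-level edit distance (substitutions, deletions, and insertions) between a reference transcript and the recognizer's output, divided by the number of words in the reference. The sketch below is a minimal illustration of that standard definition, not Google's evaluation code; the function name and the sample sentences are made up for this example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical noisy transcript: one substitution and one dropped word.
print(word_error_rate("a table for four people", "a cable for people"))
```

A noisier line tends to produce more substituted and dropped words, which is why the rate climbs as call quality falls.
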
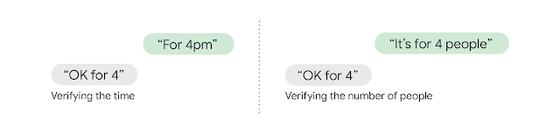

Furthermore, as a conversation between humans gets longer, exactly the same words can take on different meanings. For example, when the phrase "Ok for 4" is used while making a reservation, whether the "4" means the time "4 o'clock" or a party of "4 people" depends on the context of the conversation so far. What was said a moment ago affects the meaning of the current words, which complicates processing for an AI.
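
The "Ok for 4" ambiguity can be illustrated with a deliberately simple rule-based sketch: the most recent question in the conversation history decides how a bare number is read. This is only a toy under stated assumptions. Google Duplex uses a learned model rather than keyword rules, and the names `SLOT_KEYWORDS`, `pending_slot`, and `interpret` are hypothetical.

```python
import re
from typing import List, Optional, Tuple

# Hypothetical keyword table: which phrases signal which reservation detail.
SLOT_KEYWORDS = {
    "time": ("what time", "when"),
    "party_size": ("how many",),
}


def pending_slot(history: List[str]) -> Optional[str]:
    """Return the detail asked about in the most recent matching turn."""
    for turn in reversed(history):
        text = turn.lower()
        for slot, keywords in SLOT_KEYWORDS.items():
            if any(keyword in text for keyword in keywords):
                return slot
    return None


def interpret(utterance: str, history: List[str]) -> Optional[Tuple[str, int]]:
    """Read a bare number in the utterance according to the conversation so far."""
    match = re.search(r"\d+", utterance)
    slot = pending_slot(history)
    if match is None or slot is None:
        return None  # nothing to resolve, or no context to resolve it with
    return slot, int(match.group())


print(interpret("Ok for 4", ["What time would you like?"]))
print(interpret("Ok for 4", ["What time would you like?", "Sure. How many people?"]))
```

The same utterance resolves to a time in the first call and to a party size in the second, because only the history differs.
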

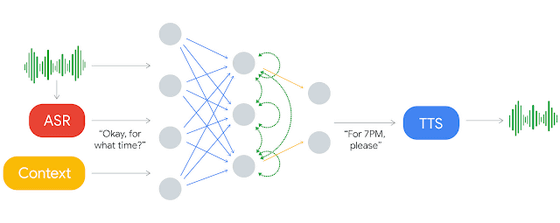

Google Duplex not only recognizes the other party's voice with Google's automatic speech recognition (ASR), it also determines the best response based on information such as the history of the conversation, the purpose of the conversation, and the current time. By scoring and judging the context, it can estimate the meaning and continue the conversation even when the other party's words are incomplete. To teach the AI natural responses, the response model is built from a corpus of anonymized phone conversation data.

To make the synthesized speech sound more human, the system also has a built-in mechanism that inserts meaningless sounds such as "hmm" or "uh" while it is processing. When the research team analyzed real human conversations, it found that such filler sounds are used quite often and appear to help a conversation feel natural.

In addition, while people expect an immediate response to simple words such as "Hello", an immediate response after a long and complicated utterance feels unnatural. So rather than using all available processing power to answer as fast as possible, inserting a certain amount of delay makes the conversation more "human-like".
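
One way to picture this is a delay that grows with the length of what the other party just said. The sketch below is purely illustrative: the function name, the word-count heuristic, and every constant are assumptions made for this example, not values published by Google.

```python
def response_delay_seconds(
    utterance: str,
    base: float = 0.1,      # assumed near-instant reply for short greetings
    per_word: float = 0.15, # assumed extra pause per additional word
    cap: float = 1.5,       # assumed upper bound so pauses never drag on
) -> float:
    """Pick a pause before replying, longer after longer utterances."""
    words = len(utterance.split())
    if words <= 2:
        return base
    return min(base + per_word * (words - 2), cap)


print(response_delay_seconds("Hello"))
print(response_delay_seconds("We are fully booked at six but I could fit you in at seven"))
```
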

by Dave Traynor

Basically, Google Duplex can carry on a conversation and complete a task autonomously, without human involvement. However, it also has a self-monitoring function: when it runs into a situation it cannot handle, such as scheduling an unusually complex appointment or an unexpected turn in the conversation, it can signal a human operator and ask for help.

Users no longer have to talk to a business themselves when scheduling by phone; they only need to interact with Google Duplex. "This not only reduces the user's effort, it also has great benefits for users with hearing or speech disabilities and for users who do not speak the local language, which helps address accessibility and language barriers," the research team says.

in Software, Posted by log1h_ik