Explaining the moral dilemma of fully self-driving cars: "Programs affect human lives"

By Frank Jania

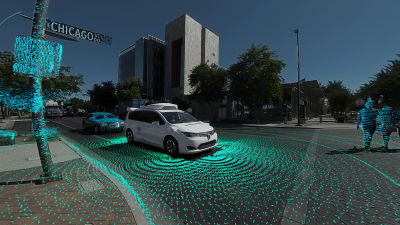

Self-driving cars such as Tesla's Model S, which is equipped with an automatic driving function, and Google's fully self-driving car are operated by computer control rather than by a driver, and are thought to be able to reduce traffic accidents caused by human error. However, some people have doubts about introducing fully self-driving cars because of the ethical problems they raise, and a video has been released that explains in detail the moral dilemma these cars face.

The ethical dilemma of self-driving cars - Patrick Lin - YouTube

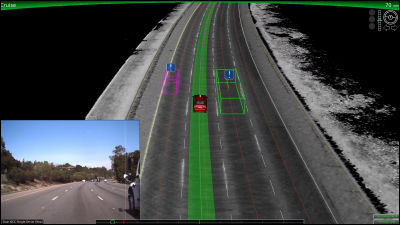

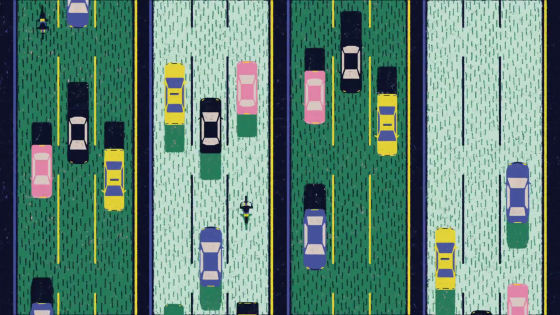

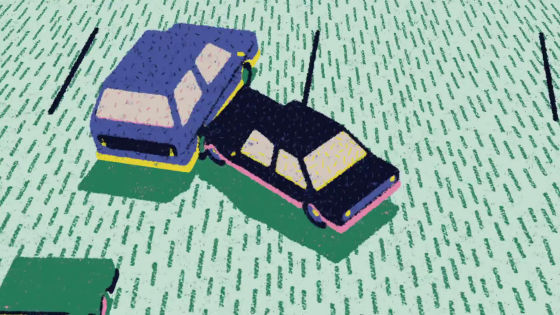

Imagine you are traveling on a highway in a fully self-driving car.

There is another car to your left, a heavy truck in front of you, and a motorcycle to your right.

The load on the truck in front comes loose and falls onto the road while you are boxed in on the left, front, and right. At this point, the fully self-driving car has three possible actions.

The first is to go straight and collide with the fallen load.

The second is to swerve left to avoid the load and hit the car.

The third is to swerve right to avoid the load and hit the motorcycle.

If you hit the car on the left, you involve other people in the accident, but it would probably not be fatal. If you go straight into the load without turning the steering wheel, you alone are harmed and no one else is affected. If you steer right and hit the motorcycle, your own chances of escaping harm increase considerably, but the motorcyclist may be seriously injured or killed.

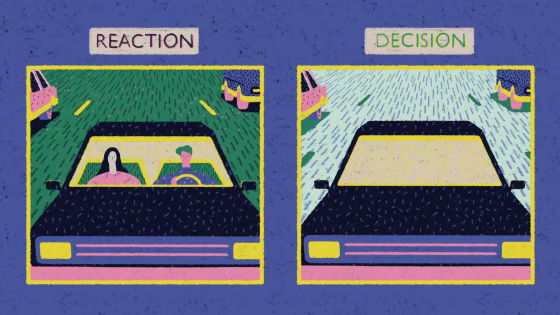

If you were driving an ordinary car rather than a fully self-driving one, whatever happened in this situation would be the result of the driver's own actions.

However, whichever action the driver takes is an instantaneous reaction, not a deliberate decision to choose one of the three options.

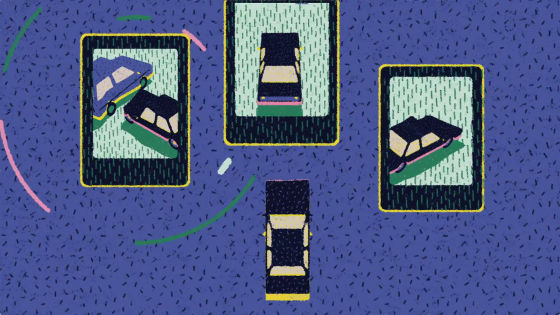

In a fully self-driving car, by contrast, no person is driving and reacting to the accident; the behavior in an accident is programmed in advance as an algorithm.

In other words, the action a fully self-driving car takes in an accident may have been decided months or years earlier.

Many people hold that, when such a difficult decision has to be made, a fully self-driving car should act on a common ethical principle: minimize harm. However, programming this utilitarian idea of minimizing harm into a fully self-driving car may create further problems.
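As a minimal sketch of what "minimize harm" could look like as a program, the three options from the highway scenario can each be given an expected-harm score, with the car picking the lowest total. Everything here is hypothetical: the `Option` class, the `choose_min_harm` function, and especially the numbers, which are invented for illustration and do not come from the video or from any real vehicle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    harm_to_occupant: float  # hypothetical score: 0.0 (no harm) to 1.0 (fatal)
    harm_to_others: float    # hypothetical score on the same scale

    @property
    def total_harm(self) -> float:
        # Utilitarian view: everyone's harm counts equally.
        return self.harm_to_occupant + self.harm_to_others


def choose_min_harm(options):
    """Return the option with the smallest total harm."""
    return min(options, key=lambda option: option.total_harm)


# Invented scores for the three options in the scenario.
OPTIONS = [
    Option("go straight into the fallen load", 0.8, 0.0),
    Option("swerve left into the car", 0.3, 0.3),
    Option("swerve right into the motorcycle", 0.1, 0.9),
]

chosen = choose_min_harm(OPTIONS)
print(chosen.name)
```

With these made-up scores the rule picks the swerve to the left. The scores and the rule are written down long before any accident happens, which is what turns a driver's reflex into a decision made in advance.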

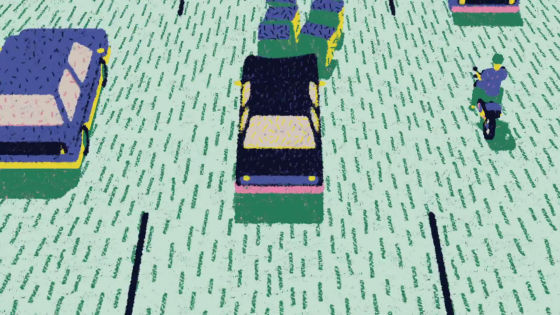

For example, in the same situation as before, suppose there is a motorcyclist wearing a helmet on your left and a motorcyclist without a helmet on your right.

Based on the principle of minimizing harm, the car should hit the motorcyclist on the left, who is more likely to survive thanks to the helmet. But that leaves a question: the person who wore a helmet for safety's sake is the one who ends up in the accident, rather than the one who did not.

Conversely, if the car steers to the right, the rider without a helmet is much more likely to die, so this action goes against the principle of minimizing harm.
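The helmet problem can be sketched in a few lines. The fatality risks below are made-up assumptions; the only thing they encode is that a rider wearing a helmet is more likely to survive a collision. The function name `pick_target_min_harm` is likewise hypothetical.

```python
# Hypothetical probability that the rider is killed if the car hits them.
FATALITY_RISK = {
    "left motorcycle (helmet)": 0.4,
    "right motorcycle (no helmet)": 0.9,
}


def pick_target_min_harm(risks):
    """Return the collision target with the lowest fatality risk."""
    return min(risks, key=risks.get)


target = pick_target_min_harm(FATALITY_RISK)
print(target)
```

Under these assumptions the rule always steers toward the helmeted rider, so the rider who took the safety precaution is the one who gets hit.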

A fully self-driving car needs to deal with these ethical problems. A driver who is not at fault may even be left with a sense of guilt because of a decision made by the car.

Which would you prefer as a user: a fully self-driving car programmed to save as many lives as possible in a traffic accident, or a fully self-driving car that gives top priority to its owner's life?
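The difference between the two kinds of car can be illustrated with a small sketch. The harm scores and both function names (`save_most_lives`, `protect_owner_first`) are assumptions made up for this example, not values or rules from any real system.

```python
# (option, hypothetical harm to the owner, hypothetical harm to other people)
OPTIONS = [
    ("go straight into the fallen load", 0.8, 0.0),
    ("swerve left into the car", 0.3, 0.3),
    ("swerve right into the motorcycle", 0.1, 0.9),
]


def save_most_lives(options):
    """Minimize total harm, counting the owner like everyone else."""
    return min(options, key=lambda o: o[1] + o[2])[0]


def protect_owner_first(options):
    """Minimize harm to the owner; harm to others only breaks ties."""
    return min(options, key=lambda o: (o[1], o[2]))[0]


print(save_most_lives(OPTIONS))
print(protect_owner_first(OPTIONS))
```

With these invented numbers, the first policy swerves into the car on the left, while the owner-first policy swerves into the motorcycle. The same situation leads to a different victim depending on which policy was chosen in advance.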

Perhaps the decision a fully self-driving car makes should not be programmed in advance at all; it may be better to choose at random from among several options.
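A random choice among several options might look like the following sketch. The option names are taken from the highway scenario; the function name `choose_randomly` and its optional `seed` parameter are assumptions added for illustration.

```python
import random

OPTIONS = [
    "go straight into the fallen load",
    "swerve left into the car",
    "swerve right into the motorcycle",
]


def choose_randomly(options, seed=None):
    """Pick one option uniformly at random.

    Passing a seed makes the pick repeatable, which is only useful
    for testing; with seed=None each call may pick differently.
    """
    rng = random.Random(seed)
    return rng.choice(options)


choice = choose_randomly(OPTIONS)
print(choice)
```

Note that even this approach is still a decision made in advance: someone has to decide which options go into the list and that each one is equally likely.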

The moral dilemma of fully self-driving cars is still under discussion, and it looks likely to be a major barrier to their introduction.
