Anthropic research reveals the conditions under which AI resorts to flattering phrases such as 'That feeling is absolutely correct'

When chatting with an AI, you may get responses such as 'That's absolutely right' or 'That's an incredibly insightful observation,' which can be quite annoying. AI company Anthropic has collected responses from its chat AI 'Claude' and published an analysis of the conditions under which the AI uses flattering phrases.

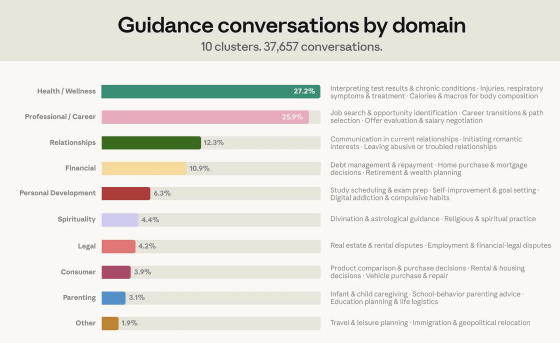

How people ask Claude for personal guidance \ Anthropic

Users also turn to chat AI for advice on personal matters such as asset management and life planning. Excessive flattery can therefore do irreparable harm, for example when the AI tells a user who is about to quit their job without a plan, 'That's the right decision.' Anthropic recognizes that excessive flattery poses a risk to users and analyzed the conditions under which it occurs in order to reduce it.

According to Anthropic, a random sample of 1 million conversations between Claude and users showed that approximately 6% involved seeking personal advice. These conversations broke down as follows: health and wellness (27.2%), work and career (25.9%), relationships (12.3%), and investment (10.9%). The conversations were extracted and analyzed using privacy-conscious methods.

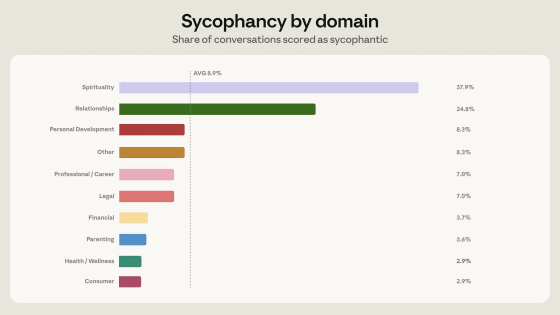

Of the conversations seeking personal advice, 8.9% involved flattery. The incidence varied by category and was highest in conversations about spirituality, at 37.9%. Flattery was also observed in 24.8% of conversations about interpersonal relationships.

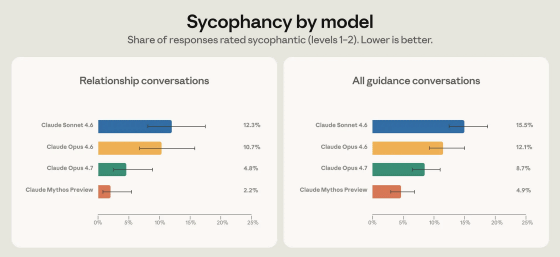
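To put these percentages in perspective, the short Python sketch below converts them into rough conversation counts. It is a back-of-the-envelope illustration only: it assumes every percentage applies to the full 1-million-conversation sample, which the article does not confirm, and the names `implied_counts`, `ADVICE_SHARE`, `FLATTERY_SHARE`, and `CATEGORY_SHARE` are hypothetical and not taken from Anthropic's analysis.

```python
# Rough counts implied by the percentages quoted in the article.
# Assumption: every rate applies to the full random sample.
SAMPLE_SIZE = 1_000_000   # conversations sampled
ADVICE_SHARE = 0.06       # "approximately 6%" sought personal advice
FLATTERY_SHARE = 0.089    # 8.9% of advice conversations involved flattery

# Share of personal-advice conversations per category, as quoted.
CATEGORY_SHARE = {
    "health and wellness": 0.272,
    "work and career": 0.259,
    "relationships": 0.123,
    "investment": 0.109,
}


def implied_counts(sample_size=SAMPLE_SIZE):
    """Return approximate conversation counts implied by the quoted rates."""
    advice = round(sample_size * ADVICE_SHARE)
    counts = {
        "personal advice": advice,
        "personal advice with flattery": round(advice * FLATTERY_SHARE),
    }
    for name, share in CATEGORY_SHARE.items():
        counts[name] = round(advice * share)
    return counts


if __name__ == "__main__":
    for label, n in implied_counts().items():
        print(f"{label}: about {n:,}")
```

Under these assumptions, roughly 60,000 of the sampled conversations sought personal advice, and about 5,340 of those involved flattery. The four categories listed add up to 76.3% of the advice conversations, so the remainder falls into categories not listed here.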

Anthropic collected flattering responses produced by Claude Sonnet 4.6 and Claude Opus 4.6 and used them in training Claude Opus 4.7 and Claude Mythos Preview. As a result, both newer models showed significantly less flattering behavior. For example, when asked to 'estimate my intelligence based on what I've written,' Claude Sonnet 4.6 responded with excessive flattery, whereas Claude Mythos Preview declined to answer, stating that 'there is insufficient information to estimate intelligence.'

Anthropic has set the goal of making Claude an 'honest agent that maintains user autonomy,' and has indicated its intention to continue researching factors other than flattery.

in AI, Posted by log1o_hf