DeepSeek-V4 has finally arrived: an open model with performance exceeding that of Claude Opus 4.6

DeepSeek, a Chinese AI company, released its AI model 'DeepSeek-V4' on April 24, 2026. It comes in two versions, DeepSeek-V4-Pro and DeepSeek-V4-Flash, and DeepSeek-V4-Pro scored higher than Claude Opus 4.6 on multiple benchmarks.

deepseek-ai/DeepSeek-V4-Pro · Hugging Face

deepseek-ai/DeepSeek-V4-Flash · Hugging Face

https://huggingface.co/deepseek-ai/DeepSeek-V4-Flash

DeepSeek-V4-Pro and DeepSeek-V4-Flash are Mixture-of-Experts (MoE) models trained on 32 trillion tokens of data. DeepSeek-V4-Pro has 1.6 trillion total parameters, of which 49 billion are active per token; DeepSeek-V4-Flash has 284 billion total parameters, of which 13 billion are active. Both support a maximum context length of 1 million tokens.
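To put the "active parameters" figures in perspective, the short Python sketch below computes what share of each model's weights is used for a given token. It is plain arithmetic on the counts stated above; the dictionary and the `active_fraction` helper are hypothetical names for illustration, and the calculation says nothing about how DeepSeek-V4 actually routes tokens to experts.

```python
# Parameter counts in billions, as stated in the article.
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600, "active_b": 49},
    "DeepSeek-V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of an MoE model's parameters that are active per token."""
    return active_b / total_b


for name, p in MODELS.items():
    frac = active_fraction(p["total_b"], p["active_b"])
    # Pro works out to about 3.1%, Flash to about 4.6%.
    print(f"{name}: {p['active_b']}B of {p['total_b']}B active per token ({frac:.1%})")
```

In other words, each token touches only a few percent of the total weights, which is why an MoE model with 1.6 trillion parameters can be far cheaper to run per token than a dense model of the same size.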

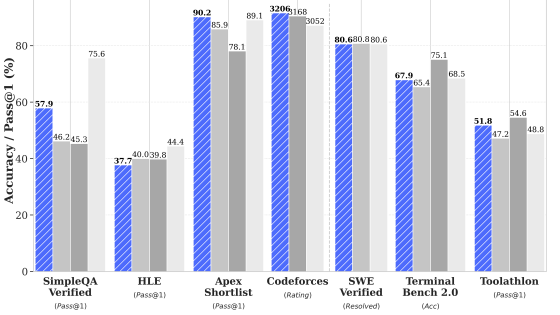

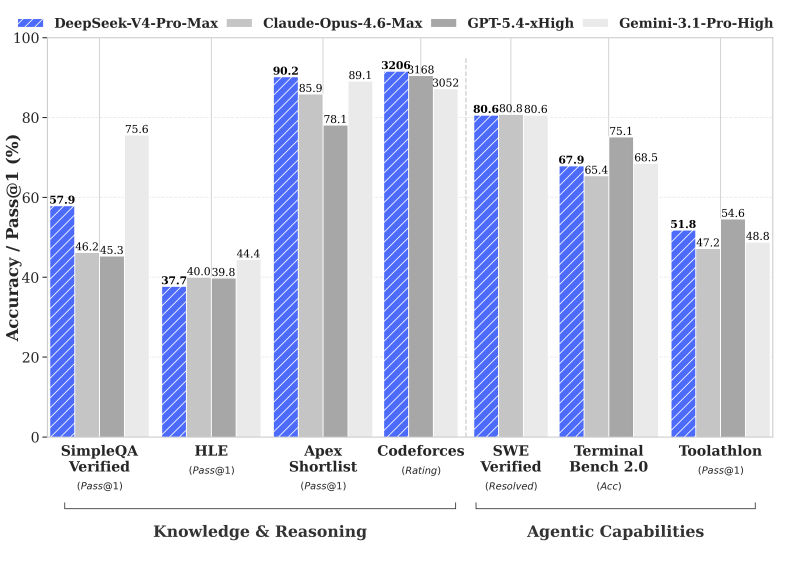

Below are the benchmark scores of 'DeepSeek-V4-Pro-Max,' the maximum reasoning-effort mode of DeepSeek-V4-Pro, compared with 'Claude-Opus-4.6-Max,' 'GPT-5.4-xHigh,' and 'Gemini-3.1-Pro-High.' DeepSeek-V4-Pro-Max outperformed these closed models on several benchmarks and ranked first on HLE (Humanity's Last Exam) and Codeforces.

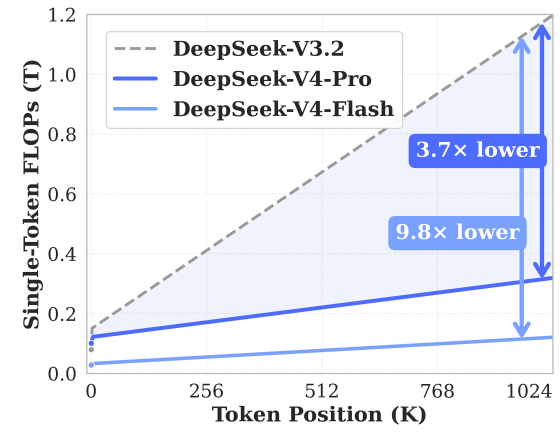

DeepSeek-V4-Pro and DeepSeek-V4-Flash also stand out for their inference efficiency. In the graph below, the horizontal axis is the token position and the vertical axis is the computation time required to process a single token. DeepSeek-V4-Pro needs about 1/3.7 of the computation time of DeepSeek-V3.2, and DeepSeek-V4-Flash needs about 1/9.8.
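The "1/3.7" and "1/9.8" figures are easier to compare as percentages of DeepSeek-V3.2's per-token cost. The Python sketch below does that conversion. It assumes the ratios are the headline values read from the graph; the article does not say at which token position they were measured, and `SPEEDUP_VS_V32` and `relative_cost` are hypothetical names used only for illustration.

```python
# Per-token compute reduction relative to DeepSeek-V3.2, as stated in the article.
SPEEDUP_VS_V32 = {"DeepSeek-V4-Pro": 3.7, "DeepSeek-V4-Flash": 9.8}


def relative_cost(speedup: float) -> float:
    """Per-token cost as a fraction of DeepSeek-V3.2's (V3.2 = 1.0)."""
    return 1.0 / speedup


for name, s in SPEEDUP_VS_V32.items():
    # Pro comes out to about 27%, Flash to about 10%.
    print(f"{name}: {relative_cost(s):.0%} of DeepSeek-V3.2's per-token computation time")
```

Put differently, under these figures DeepSeek-V4-Pro spends roughly a quarter of DeepSeek-V3.2's time per token, and DeepSeek-V4-Flash roughly a tenth.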

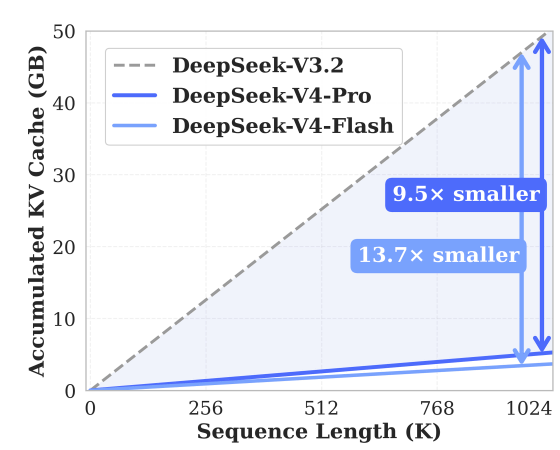

The KV cache size is also kept small, improving efficiency on lengthy tasks.

DeepSeek-V4-Pro and DeepSeek-V4-Flash are available on Hugging Face under the MIT License.


in AI, Posted by log1o_hf