'Ternary Bonsai,' a memory-efficient AI with 8 billion parameters that runs on iPhones, has been released. It handles information using only three values: '1,' '0,' and '-1,' and uses only 1.75GB of memory.

AI development company PrismML released 'Ternary Bonsai,' a compact, high-performance AI model, on April 16, 2026. Ternary Bonsai is a memory-efficient AI model that can run on iPhones and boasts significantly higher performance than competing models that consume comparable amounts of memory.

PrismML — Introducing Ternary Bonsai: Top Intelligence at 1.58 Bits

Running AI models requires a large amount of memory, so devices with limited memory, such as smartphones, can typically run only small, lower-performance models. PrismML develops memory-efficient AI models, and its '1-bit Bonsai,' released on March 31, 2026, attracted significant attention because it can run an 8-billion-parameter model in as little as 1.15GB of memory.

A memory-efficient AI model, '1-bit Bonsai,' has appeared, boasting 8B of parameters but consuming only 1.15GB of memory, while achieving performance equivalent to or better than models consuming 14 times more memory - GIGAZINE

Generally, AI models are designed to handle each parameter with 16-bit precision. Lower-memory versions usually appear afterwards: the developer releases the original 16-bit model, and volunteers then compress it to 8 or 4 bits using a technique called quantization. 1-bit Bonsai, by contrast, was designed from the outset to represent each parameter with 1 bit (either '1' or '-1'), which allowed PrismML to fit an 8-billion-parameter model into 1.15GB without quantization.
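To make the arithmetic concrete, here is a rough back-of-the-envelope sketch of weight-only memory for an 8-billion-parameter model at different bit widths. The function name `weight_memory_gb` is hypothetical, 1GB is taken as 10^9 bytes, and the estimate counts nothing but the weights themselves, so it should not be expected to match PrismML's reported figures exactly.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Weight-only estimate: params * bits / 8 bytes, expressed in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

# "8 billion parameters" from the article, treated as an exact count (an assumption).
PARAMS = 8e9

for bits in (16, 8, 4, 1):
    print(f"{bits:>2}-bit: {weight_memory_gb(PARAMS, bits):.2f} GB")
```

Under these assumptions the 16-bit estimate is about 16GB and the 1-bit estimate about 1GB. That is in the same range as the 1.15GB reported for 1-bit Bonsai, and 16GB divided by 1.15GB is roughly 14, in line with the "14 times more memory" comparison. The article does not break down what accounts for the remaining difference.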

Ternary Bonsai, released on April 16, 2026, is designed to handle information using three values: '1', '0', and '-1'. Compared to 1-bit Bonsai, it uses more memory but offers significantly improved performance. PrismML promotes Ternary Bonsai as a 1.58-bit model.
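The "1.58-bit" label matches the information content of a three-valued weight: log2(3) is approximately 1.585 bits. As a minimal sketch of what that means for storage, the code below packs five ternary values into a single byte using base 3 (3^5 = 243, which fits within the 256 values of a byte), giving 1.6 bits per weight. The helpers `pack5` and `unpack5` are hypothetical and only illustrate the idea; the article does not describe how PrismML actually stores its weights.

```python
import math

# Information content of one value drawn from {-1, 0, 1}.
print(f"{math.log2(3):.3f} bits per ternary value")

def pack5(trits: list[int]) -> int:
    """Pack five values from {-1, 0, 1} into one byte using base 3."""
    assert len(trits) == 5 and all(t in (-1, 0, 1) for t in trits)
    byte = 0
    for t in reversed(trits):
        byte = byte * 3 + (t + 1)  # map -1/0/1 to base-3 digits 0/1/2
    return byte  # at most 3**5 - 1 = 242, so it fits in 8 bits

def unpack5(byte: int) -> list[int]:
    """Inverse of pack5: recover the five ternary values."""
    trits = []
    for _ in range(5):
        byte, digit = divmod(byte, 3)
        trits.append(digit - 1)  # map digits 0/1/2 back to -1/0/1
    return trits

weights = [1, 0, -1, -1, 1]
packed = pack5(weights)
assert unpack5(packed) == weights  # round trip is lossless
print("bits per weight with this packing:", 8 / 5)
```

Counting weights only, 8 billion parameters at about 1.58 bits each comes to roughly 1.6GB, which is in the same range as the 1.75GB reported for Ternary Bonsai 8B.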

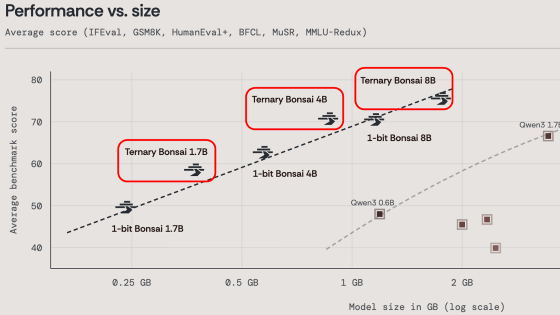

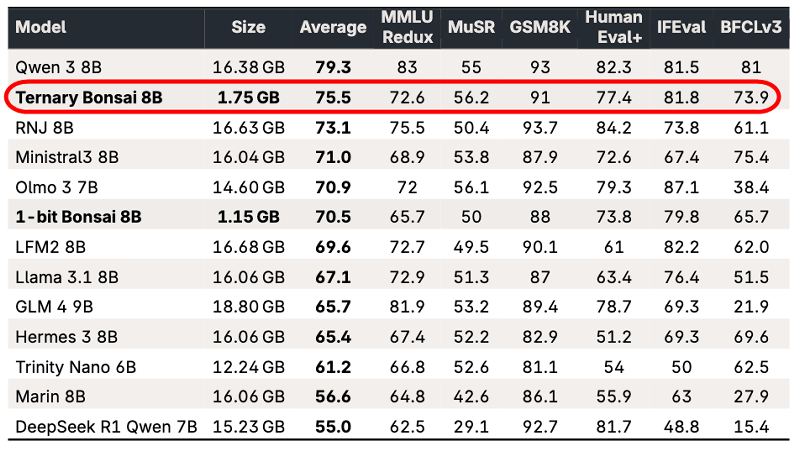

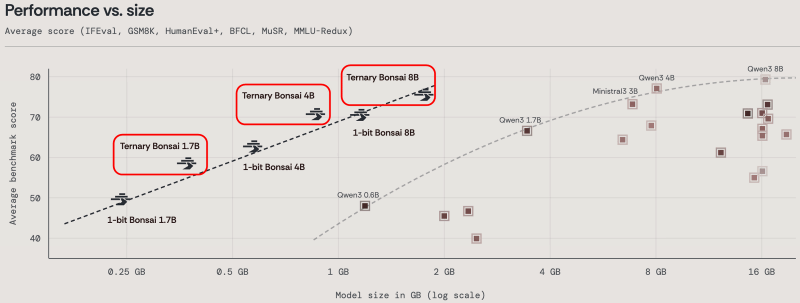

Ternary Bonsai comes in three versions: 'Ternary Bonsai 8B,' 'Ternary Bonsai 4B,' and 'Ternary Bonsai 1.7B.' Ternary Bonsai 8B uses 1.75GB of memory and has recorded a higher benchmark score than Ministral3 8B, which uses more than nine times as much memory.

The graph below shows memory usage on the horizontal axis and benchmark scores on the vertical axis. It clearly shows that the Ternary Bonsai series uses significantly less memory than comparable models.

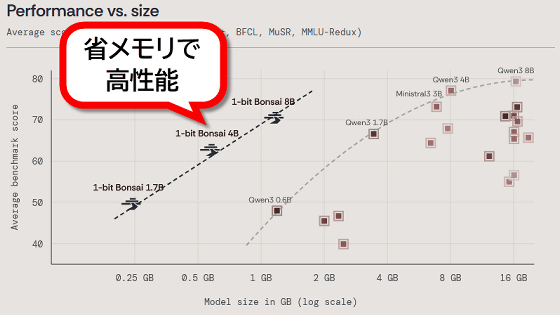

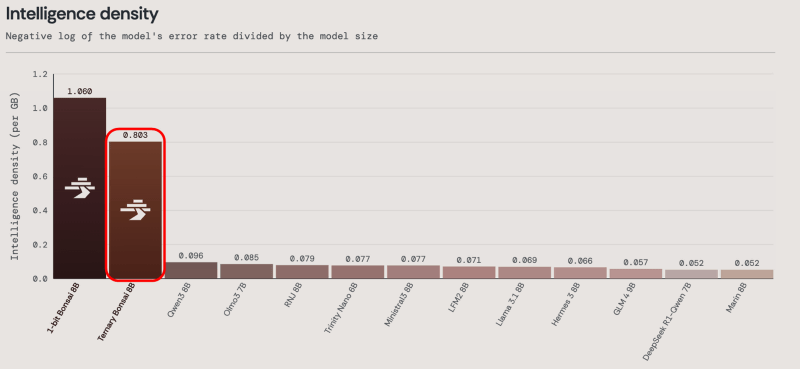

The graph below compares intelligence density by memory usage. While Ternary Bonsai offers higher processing performance than 1-bit Bonsai, 1-bit Bonsai is more memory efficient. Therefore, PrismML states, 'Ternary Bonsai 8B is not a replacement for 1-bit Bonsai. In environments where minimizing footprint is necessary, 1-bit Bonsai remains the optimal choice.'

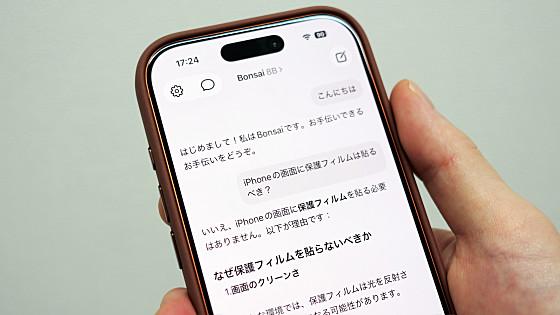

The Ternary Bonsai series is designed to run on MLX, an AI framework for Apple Silicon, and can be run on iPhones, iPads, and Macs. Each model is available at the following link and is licensed under the Apache License 2.0.

prism-ml/Ternary-Bonsai-8B-mlx-2bit · Hugging Face

https://huggingface.co/prism-ml/Ternary-Bonsai-8B-mlx-2bit

prism-ml/Ternary-Bonsai-4B-mlx-2bit · Hugging Face

https://huggingface.co/prism-ml/Ternary-Bonsai-4B-mlx-2bit

prism-ml/Ternary-Bonsai-1.7B-mlx-2bit · Hugging Face

https://huggingface.co/prism-ml/Ternary-Bonsai-1.7B-mlx-2bit

Additionally, Locally AI, an app for running AI models on iPhone, already supports Ternary Bonsai 8B.

Try the new Ternary Bonsai 8B from @PrismML.

A larger, smarter Bonsai model available on iPhone and iPad.

Update your app now. pic.twitter.com/Ab2LS9Fik4

— Locally AI - Local AI Chat (@LocallyAIApp) April 16, 2026

The following article provides a detailed explanation of how to use Locally AI.

I tried running the AI model '1-bit Bonsai 8B' with 8 billion parameters locally on my iPhone 17 Pro. It's easy to run using the free app Locally AI - GIGAZINE

in AI, Posted by log1o_hf