OpenAI, Google, and Anthropic are collaborating to counter 'adversarial distillation attacks by Chinese AI companies'

Training a model on the inputs and outputs of a high-performance AI model in order to improve its own performance is called 'distillation.' OpenAI, Google, and Anthropic all prohibit the use of their products for distillation in their terms of service, but a series of adversarial distillation attempts by Chinese companies has been reported, and the three companies are now sharing information to counter them.

OpenAI, Anthropic, Google Unite to Combat Model Copying in China - Bloomberg

https://www.bloomberg.com/news/articles/2026-04-06/openai-anthropic-google-unite-to-combat-model-copying-in-china

Distillation is a technique used to 'extract the capabilities of a large model to create a smaller, high-performance model.' Distilling between a company's own models, or from models whose terms of service do not prohibit it, is generally not a problem. However, many AI developers prohibit distillation of their products in their terms of service, and performing distillation through their APIs may result in account suspension or other penalties.
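As a rough illustration of the idea, the sketch below shows the textbook form of distillation, in which a small 'student' model is trained to match the temperature-softened output distribution of a large 'teacher' model. This is not taken from the reports cited here: the function names, the example logits, and the temperature value are all hypothetical, and distillation through a commercial API would usually see only generated text rather than logits, so in that setting the teacher's outputs would more likely be used as ordinary training data.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by a temperature (assumed > 0)."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Hypothetical helper for illustration; a lower value means the student
    imitates the teacher's output distribution more closely.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


# Made-up logits over three candidate answers.
teacher = [4.0, 1.0, 0.5]
far_student = [0.5, 1.0, 4.0]    # disagrees with the teacher
close_student = [3.8, 1.1, 0.4]  # nearly matches the teacher

loss_far = distillation_loss(teacher, far_student)
loss_close = distillation_loss(teacher, close_student)
loss_same = distillation_loss(teacher, teacher)
```

During training, the student's parameters would be adjusted to drive this loss down, which is how the teacher's behavior is transferred to the smaller model.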

Accessing an API to perform distillation while concealing the purpose of use is called 'adversarial distillation,' and it has been alleged that major Chinese AI developers are carrying out adversarial distillation attacks against American AI companies. For example, DeepSeek's reasoning model 'DeepSeek R1,' announced in January 2025, attracted considerable attention for being lightweight yet high-performing, but just a few days later OpenAI stated that it had 'evidence that DeepSeek R1 was developed by distilling OpenAI's models.'

DeepSeek may have been 'distilling' OpenAI's data to develop its AI; OpenAI says it has 'evidence' - GIGAZINE

One year later, in February 2026, OpenAI reportedly sent a memorandum to the U.S. House Select Committee on China stating that 'DeepSeek continues its efforts to free-ride on the capabilities developed by OpenAI and other leading American research institutions.'

OpenAI accuses DeepSeek of 'free-riding' on America's leading AI by training next-generation AI through distillation in a memo to lawmakers - GIGAZINE

Google's Threat Intelligence Group (GTIG) also reported on February 13, 2026, that 'adversarial distillation attacks against Google's AI are on the rise.'

Google reports 'an increase in distillation attacks attempting to extract Gemini's capabilities and develop competing AI' - GIGAZINE

Anthropic also claimed on February 23, 2026, that 'China-based AI companies DeepSeek, Moonshot, and MiniMax are conducting a large-scale campaign to illegally extract Claude's capabilities in order to improve their own models.'

Anthropic accuses Chinese AI companies DeepSeek, Moonshot, and MiniMax of illegally extracting Claude's capabilities - GIGAZINE

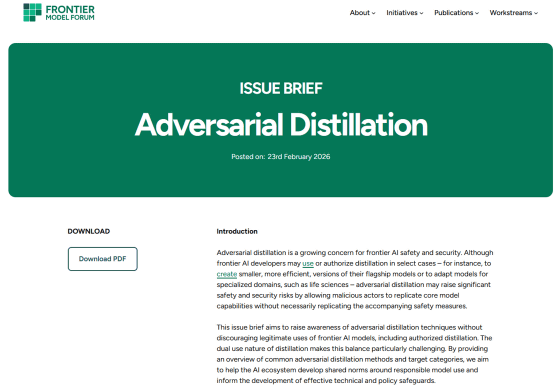

According to Bloomberg, OpenAI, Google, and Anthropic are collaborating across company lines to counter adversarial distillation attacks by Chinese companies. Specifically, they are sharing information through the AI industry group Frontier Model Forum to strengthen their detection of such attacks.

In fact, on February 23, 2026, the Frontier Model Forum published an issue brief explaining the problems with adversarial distillation attacks. According to the brief, attackers use a variety of methods to perform distillation. By documenting these methods, the Frontier Model Forum aims to spread common norms for responsible model use throughout the AI industry and to help develop effective protections.

Adversarial Distillation - Frontier Model Forum

https://www.frontiermodelforum.org/issue-briefs/issue-brief-adversarial-distillation/

in AI, Posted by log1o_hf