Trinity-Large-Thinking, a 399-billion-parameter open-weight AI model that excels at complex, long-running agent tasks and multi-turn tool calls, has been released

Arcee AI, an AI startup based in San Francisco, USA, has released 'Trinity-Large-Thinking,' an open-weight model with 399 billion parameters, under

Arcee AI | Trinity-Large-Thinking: Scaling an Open Source Frontier Agent

https://www.arcee.ai/blog/trinity-large-thinking

arcee-ai/Trinity-Large-Thinking · Hugging Face

https://huggingface.co/arcee-ai/Trinity-Large-Thinking

Today we're releasing Trinity-Large-Thinking.

— Arcee.ai (@arcee_ai) April 1, 2026

Available now on the Arcee API, with open weights on Hugging Face under Apache 2.0.

We built it for developers and enterprises that want models they can inspect, post-train, host, distill, and own. pic.twitter.com/jumuYehJdo

Arcee's new, open source Trinity-Large-Thinking is the rare, powerful US-made AI model that enterprises can download and customize | VentureBeat

https://venturebeat.com/technology/arcees-new-open-source-trinity-large-thinking-is-the-rare-powerful-us-made

Arcee AI Ships 400B Open Model Rivaling Claude at 96% Less

https://www.implicator.ai/arcee-ai-releases-400b-open-reasoning-model-that-rivals-claude-at-96-lower-cost/

On April 1 local time, Arcee AI officially released Trinity-Large-Thinking, a text-only reasoning model with 399 billion parameters. Trinity-Large-Thinking is available through the Arcee AI API, and its weights are published on Hugging Face under the Apache 2.0 license.

Arcee AI is a small team of just 30 people. In early 2026 it committed $20 million (approximately 3.2 billion yen), about half of its total capital, to a 33-day training run for Trinity-Large-Thinking. The run used 2,048 NVIDIA B300 Blackwell GPUs and 20 trillion tokens of carefully selected web data and high-quality synthetic data, curated in partnership with DatologyAI, a company that automates the selection of AI training data.

A key feature of Trinity-Large-Thinking is its Mixture-of-Experts architecture, in which the model is divided into many expert sub-networks and each token is routed to only a few of them. VentureBeat points out that as a result only 13 billion parameters are active for any single token, a share it gives as 1.56%. Note that 13 billion out of 399 billion is about 3.3% of the total parameter count.
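The routing idea can be illustrated with a toy sketch. This is not Arcee AI's implementation: the expert count, the value of `K`, the scalar "experts", and the random gate scores are all hypothetical stand-ins (a real model uses a learned gating layer and neural-network experts). Only the 399 billion and 13 billion figures come from the article.

```python
import math
import random

def top_k_route(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, gate_logits, k):
    """Run only the k selected experts and mix their outputs."""
    routes = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in routes), routes

# Hypothetical toy setup: 8 scalar "experts", 2 of which run per token.
NUM_EXPERTS, K = 8, 2
experts = [lambda x, scale=s + 1: x * scale for s in range(NUM_EXPERTS)]
rng = random.Random(0)  # stand-in for a learned gating layer
gate_logits = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]

output, routes = moe_forward(1.0, experts, gate_logits, K)
print(f"experts used: {sorted(i for i, _ in routes)} out of {NUM_EXPERTS}")

# Parameter figures quoted in the article.
TOTAL_PARAMS = 399e9
ACTIVE_PARAMS = 13e9
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active parameter share: {active_fraction:.2%}")
```

The experts that are not selected are never evaluated, which is why the per-token compute follows the active parameter count rather than the total.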

By employing a Mixture-of-Experts approach, Trinity-Large-Thinking can maintain the inference speed and operational efficiency of much smaller systems while possessing the deep knowledge characteristic of large-scale systems. It reportedly operates approximately 2 to 3 times faster than comparable models on the same hardware.

Furthermore, while Anthropic's Claude Opus 4.6 costs $25 (approximately 4,000 yen) per million output tokens, Trinity-Large-Thinking costs just $0.90 (approximately 140 yen) per million output tokens, a far lower price. Arcee AI states, 'We have reached a level of competitiveness and token price that we are truly satisfied with.'
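As a quick check of the arithmetic behind the "96% less" figure in the Implicator headline, the two output prices can be compared directly. The sketch below uses only the per-million-output-token prices quoted above; the 50-million-token workload is a hypothetical example, and input-token pricing is not covered by the article, so it is left out.

```python
# Output-token prices quoted in the article (USD per million output tokens).
OPUS_USD_PER_M_OUTPUT = 25.00
TRINITY_USD_PER_M_OUTPUT = 0.90

def output_cost(output_tokens, usd_per_million):
    """Cost in USD of generating the given number of output tokens."""
    return output_tokens / 1_000_000 * usd_per_million

savings = 1 - TRINITY_USD_PER_M_OUTPUT / OPUS_USD_PER_M_OUTPUT
price_ratio = OPUS_USD_PER_M_OUTPUT / TRINITY_USD_PER_M_OUTPUT

# Hypothetical workload: 50 million output tokens.
WORKLOAD_TOKENS = 50_000_000
opus_cost = output_cost(WORKLOAD_TOKENS, OPUS_USD_PER_M_OUTPUT)
trinity_cost = output_cost(WORKLOAD_TOKENS, TRINITY_USD_PER_M_OUTPUT)

print(f"savings on output tokens: {savings:.1%}")
print(f"price ratio: {price_ratio:.1f}x")
print(f"50M output tokens: ${opus_cost:,.2f} vs ${trinity_cost:,.2f}")
```

On output tokens alone, the quoted prices work out to a saving of roughly 96%, or a price ratio of about 28 to 1.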

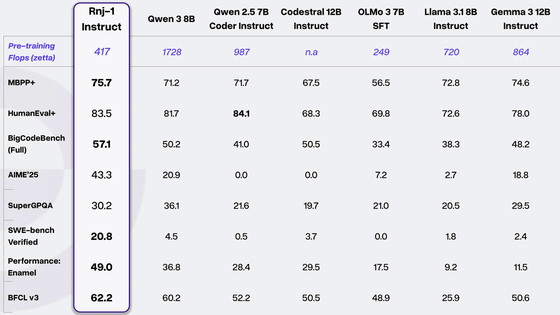

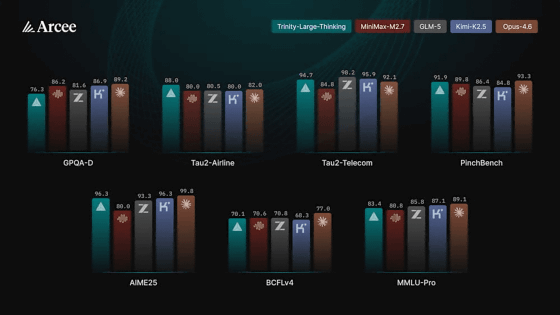

The graph compares the performance of Trinity-Large-Thinking (green), MiniMax-M2.7 (red), GLM-5 (gray), Kimi-K2.5 (blue), and Claude Opus 4.6 (orange) across various benchmarks. Trinity-Large-Thinking performed on par with other open-weight models such as MiniMax-M2.7 and GLM-5, and on PinchBench, a benchmark for autonomous agent tasks, it recorded the second-highest score, behind only the market-leading closed model, Claude Opus 4.6.

Arcee AI stated in its official blog, 'Nine months ago, we made the decision to change the way we run our company. We determined that if we are going to focus on a truly American open model—a model that developers and companies can actually own—we need to build it ourselves.'

The backdrop to these remarks is the criticism that Chinese AI developers such as DeepSeek, Z.ai (GLM), and Alibaba (Qwen) dominate the field of open-weight models, which are pre-trained AI models whose weights are made publicly available for anyone to use. Open-weight models are inexpensive and easy to adopt, so many companies use them, but some people are concerned that so many of these models are made in China.

However, going into 2026, Chinese AI labs have been shifting toward their own enterprise platforms and subscription models, leaving a void in the field of high-performance open-weight models. Arcee AI is expected to fill that void.

Technology media outlet VentureBeat states, 'This move comes at a time when companies are increasingly concerned about their reliance on Chinese-made architecture for critical infrastructure. As a result, there is demand for leading domestic companies, and Arcee AI aims to meet that demand.'

In a message to VentureBeat, Clément Delangue, CEO of Hugging Face, commented, 'America's strength has always been in its startups. That's why we should probably place our hopes on these startups to take the lead in the field of open-source AI. Arcee AI has shown that this is possible!'


in AI, Posted by log1h_ik