The bottleneck in AI research is humans

Andrei Karpathy, a renowned AI researcher and one of the founding members of OpenAI, the developer of ChatGPT, has asserted that ' the bottleneck in AI research is humans .'

Andrej Karpathy: Humans Are the Bottleneck in AI Research

'The Karpathy Loop': 700 experiments, 2 days, and a glimpse of where AI is heading | Fortune

https://fortune.com/2026/03/17/andrej-karpathy-loop-autonomous-ai-agents-future/

From early 2026, Mr. Karpathy spent several months manually adjusting the GPT-2 training settings. Then, he let an AI agent handle the adjustments for just one night, and the AI agent found improvements that Mr. Karpathy had overlooked.

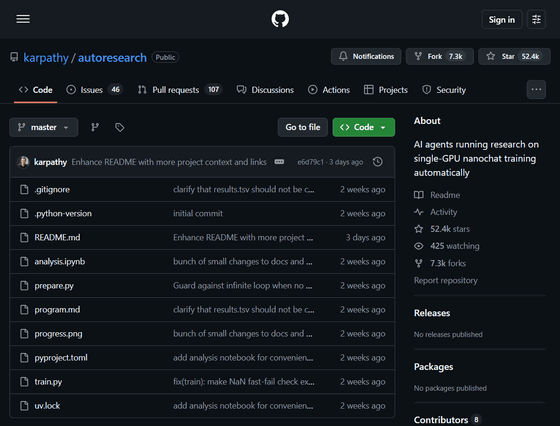

Based on this experience, Karpathy released 'autoresearch' on GitHub.

GitHub - karpathy/autoresearch: AI agents running research on single-GPU nanochat training automatically · GitHub

https://github.com/karpathy/autoresearch

Karpathy described autoresearch as 'a self-contained, minimal repository.' Specifically, it's a simplified version of nanochat 's LLM training core—a simple experimental harness for training large-scale language models (LLMs) created by Karpathy—downgraded to about 630 lines of code that runs on a single NVIDIA H100. The goal of autoresearch is to design an AI agent that can advance research infinitely and as quickly as possible without user intervention. The AI agent operates as an autonomous loop on a Git feature branch, and is designed to add commits to the training script each time it finds a better configuration (one with a lower final validation loss), such as changes to the neural network architecture, optimizer, and various hyperparameters.

I packaged up the 'autoresearch' project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

— Andrej Karpathy (@karpathy) March 7, 2026

- the human iterates on the… pic.twitter.com/3tyOq2P9c6

Karpathy conducted over 700 experiments using autoresearch. The AI agent ran 50 experiments in just one night, discovered better learning methods, and successfully committed to Git without any human instruction. The AI agent also found subtle adjustments that Karpathy had overlooked, as well as fine-tuning that humans tend to miss.

Running autoresearch for two consecutive days allowed the AI agent to find 20 ways to improve the training time of small language models. Applying these adjustments to LLM resulted in an 11% improvement in training speed.

In a typical session, autoresearch runs 12 experiments per hour. If run overnight on a single GPU, it can run 80 to 100 experiments. Each experiment follows a consistent pattern, with the AI agent reading an existing training script, suggesting modifications, running the training loop, recording the resulting score, and deciding whether to keep or discard the changes. All decisions are logged in Git, allowing Karpathy to see a complete history of what the AI agent tried, succeeded, and failed.

When human researchers perform the same experiments as in autoresearch manually, the feedback loop can extend from several minutes to several hours per iteration, and fatigue accumulates over time.

Based on this experience, Karpathy said in a YouTube podcast, 'To make the most of the tools currently available, you must not be the bottleneck. You must not be there to motivate the next action.'

According to Karpathy, autoresearch is fundamentally different from previous automated machine learning approaches. Traditional automated machine learning approaches rely on random fluctuations or evolutionary algorithms and lack the ability to infer what has already been tried. Also, traditional ' neural architecture search ' is a method that rearranges predefined neural network functions and other elements to find the optimal configuration for a task, so it can only shuffle predefined components. However, autoresearch can write entirely new training logic, test it with a single number, and build upon what works. For this reason, Karpathy explains that 'comparing it to traditional automated machine learning approaches is completely useless.'

Furthermore, the autoresearch method released by Karpathy was quickly validated by external AI researchers and attracted attention from the AI community. Shopify CEO Tobias Lütke reported, 'When we applied autoresearch to our internal data , we ran 37 experiments overnight and achieved a 19% performance improvement on a completely unrelated codebase.'

Harrison Chase, the founder of LangChain, created 'autoresearch for agents' as a fork of autoresearch. This gives an AI coding agent the code and evaluation dataset for the AI agent, and it autonomously experiments, modifies the code, runs evaluations via LangSmith , adopts the improved version, and discards the one that performs worse.

Loved this from @karpathy

— Harrison Chase (@hwchase17) March 9, 2026

over the weekend I built 'autoresearch but for agents'

Same idea — give an AI coding agent your agent code + an eval dataset, let it experiment autonomously overnight. It modifies the code, runs evals via LangSmith, keeps improvements, discards… https://t.co/mqWvTLrAjH

Furthermore, Karpathy mentioned that autoresearch is 'only effective when quantitative evaluation is possible,' explaining that it is not useful in fields where evaluation requires human judgment, creativity, or subjective assessment.

Related Posts:

in AI, Posted by logu_ii