A Chinese AI company has announced 'MiniMax M2.7,' an AI model that is more powerful than Gemini 3.1 Pro, featuring self-evolving performance improvements and native support for agent teams.

MiniMax, an AI development company based in Shanghai, China, announced its AI model 'MiniMax M2.7' on March 18, 2026. MiniMax positions M2.7 as its first 'AI model developed using self-evolution,' and the model outscored Gemini 3.1 Pro in benchmark tests.

MiniMax M2.7: Early Echoes of Self-Evolution - MiniMax News | MiniMax

MiniMax M2.7 - Model Self-Improvement, Driving Productivity Innovation Through Technological Breakthroughs | MiniMax

https://www.minimax.io/models/text/m27

Introducing MiniMax-M2.7, our first model which deeply participated in its own evolution, with an 88% win-rate vs M2.5

— MiniMax (official) (@MiniMax_AI) March 18, 2026

- Production-Ready SWE: With SOTA performance in SWE-Pro (56.22%) and Terminal Bench 2 (57.0%), M2.7 reduced intervention-to-recovery time for online incidents… pic.twitter.com/w21vUczxzV

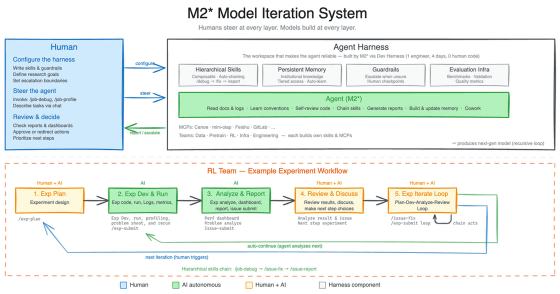

To develop the production version of MiniMax M2.7, MiniMax had an 'internal version of MiniMax M2.7' build a research agent harness. The resulting harness can manage 'data pipelines,' 'learning environments,' 'infrastructure,' 'inter-team collaboration,' and 'persistent memory.' This enabled a development flow in which 'human AI researchers design experiments and analyze logs while conversing with AI models,' which sped up the discovery and verification of problems. MiniMax M2.7 reportedly handled 30-50% of the workflow in developing the production model.

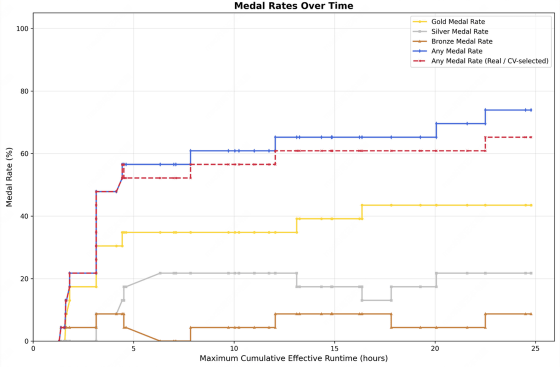

Furthermore, MiniMax succeeded in recursively evolving the model using an internal version of MiniMax M2.7. Specifically, it achieved a 30% performance improvement by repeating the cycle of 'problem analysis → correction plan → code modification → test execution → result comparison → applying or discarding the changes' more than 100 times. The graph below shows the change in the number of medals won at the International Mathematical Olympiad after training the AI model with a recursive evolution system using MiniMax M2.7. In its initial state, the AI model did not win a single medal, but after the recursive evolution system had run for 25 hours, the average medal win rate improved to 66.6%.

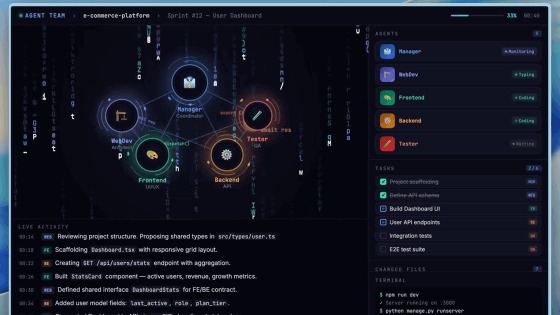

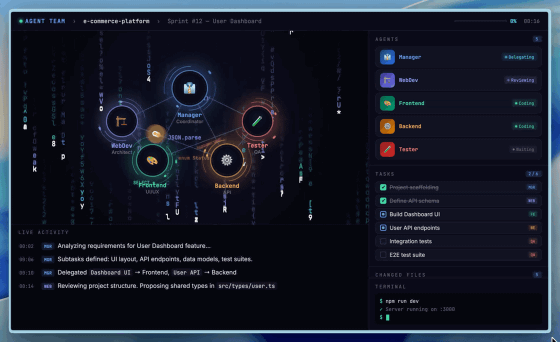
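The 'propose a change, run the tests, compare the results, then apply or discard' cycle described above can be illustrated with a toy loop. This is only a minimal sketch under stated assumptions, not MiniMax's actual system: the names `evaluate`, `propose_change`, and `self_improve` are hypothetical, the "model" is reduced to a single number, and a random tweak stands in for the analysis and code-modification steps.

```python
import random


def evaluate(params):
    # Hypothetical "test execution": returns a score, higher is better.
    # The toy objective peaks when params["x"] equals 3.0.
    return -(params["x"] - 3.0) ** 2


def propose_change(params, rng):
    # Hypothetical stand-in for "problem analysis -> correction plan ->
    # code modification": here it is just a small random tweak.
    candidate = dict(params)
    candidate["x"] += rng.uniform(-0.5, 0.5)
    return candidate


def self_improve(params, rounds=100, seed=0):
    rng = random.Random(seed)
    best_score = evaluate(params)
    history = [best_score]
    for _ in range(rounds):
        candidate = propose_change(params, rng)
        score = evaluate(candidate)        # test execution
        if score > best_score:             # result comparison
            params, best_score = candidate, score  # apply the change
        # otherwise the candidate is discarded and params stays as it was
        history.append(best_score)
    return params, history


if __name__ == "__main__":
    final_params, history = self_improve({"x": 0.0})
    print("start score:", history[0])
    print("final score:", history[-1])
```

Because a change is kept only when it scores higher than the current best, the recorded score can never fall from one round to the next, which is the property that makes repeating such a cycle many times worthwhile.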

A major feature of MiniMax M2.7 is its native support for agent teams that run multiple agents simultaneously. According to MiniMax, the agent team mechanism, which assigns different roles to each agent, cannot be achieved solely through clever system prompting; it requires native support during the AI model development stage. The agent team functionality of MiniMax M2.7 is also used in MiniMax's own product development.

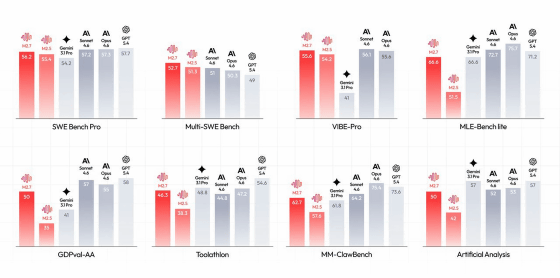

The benchmark results for 'MiniMax M2.7,' 'MiniMax M2.5,' 'Gemini 3.1 Pro,' 'Claude Sonnet 4.6,' 'Claude Opus 4.6,' and 'GPT-5.4' are as follows. MiniMax M2.7 recorded higher scores than Gemini 3.1 Pro in most tests.

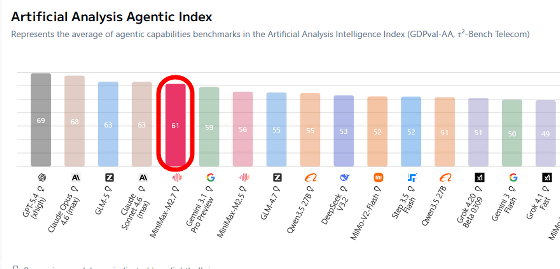

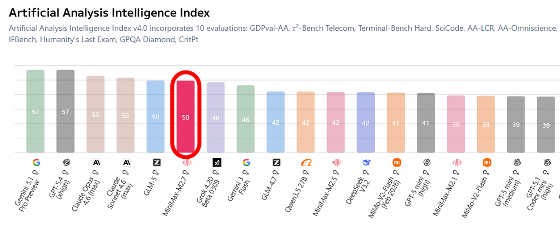

The benchmark results for MiniMax M2.7 are also published on Artificial Analysis, an AI model performance comparison site. In tests measuring agent performance, MiniMax M2.7 outperformed Gemini 3.1 Pro Preview and recorded a score close to that of Claude Sonnet 4.6.

On the other hand, while it outperformed Grok 4.20 Beta 0309 in intelligence performance, its score was lower than Gemini 3.1 Pro Preview and GLM-5.

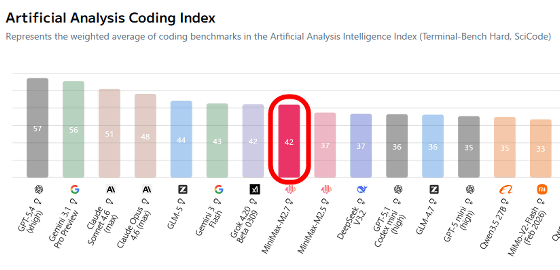

In terms of coding performance, it scores lower than Gemini 3.1 Pro Preview and Gemini 3 Flash.

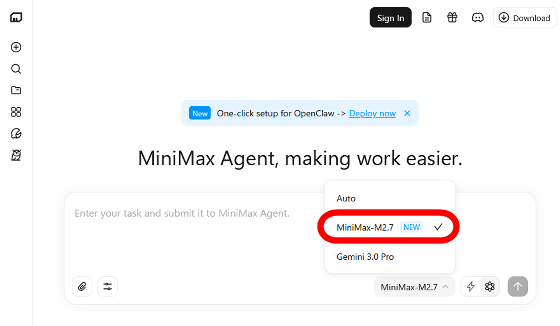

MiniMax M2.7 is now available for use in the company's chat AI.

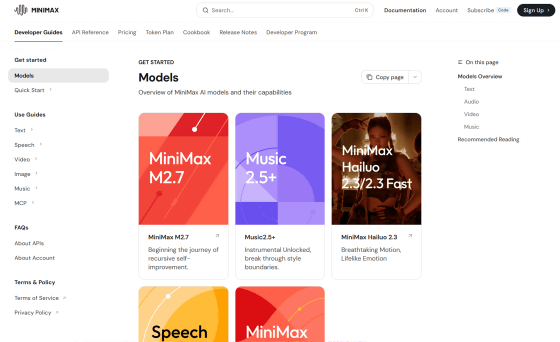

It can also be used via API.

Models - MiniMax API Docs

https://platform.minimax.io/docs/guides/models-intro

in AI, Posted by log1o_hf