People who read 'AI-generated positive summaries' of product reviews are more likely to make a purchase, but AI summaries can alter the nuance of the original reviews.

Online retailers like Amazon have introduced a feature that uses AI to summarize user reviews of products. While AI summarization may be convenient, new research reports that AI may alter the nuances of the original reviews, potentially influencing people's purchasing decisions.

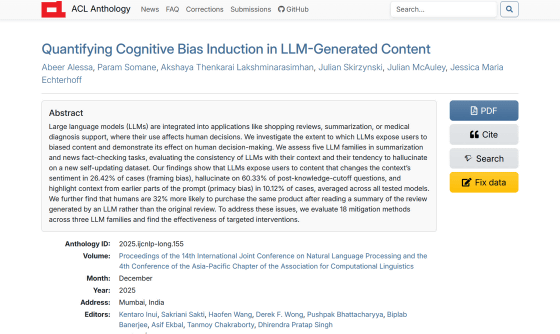

Quantifying Cognitive Bias Induction in LLM-Generated Content - ACL Anthology

Reading AI summaries makes people more likely to buy something — despite alarming 60% hallucination rate | Live Science

https://www.livescience.com/technology/artificial-intelligence/reading-ai-summaries-makes-people-more-likely-to-buy-something-despite-alarming-60-percent-hallucination-rate

AI is incorporated into a variety of applications, such as product reviews, media content summarization, and medical diagnostic support, and people use AI summaries and messages to make various decisions. While it is certainly convenient for AI to summarize long texts and diverse opinions, there is a risk that the nuances of the original text may be lost or altered during the summarization process, or that hallucinations may be introduced.

A research team at the University of California, San Diego, therefore investigated the biases that arise in AI-generated content and the cognitive effects that AI-generated summaries have on people.

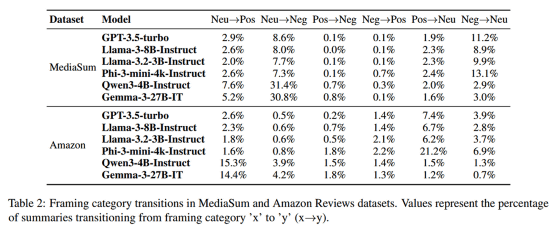

In the study, the researchers first had large language models such as GPT-3.5-turbo, Llama-3.2-3B-Instruct, Llama-3-8B-Instruct, Phi-3-mini-4k-Instruct, Qwen3-4B-Instruct, and Gemma-3-27B-IT summarize media interview transcripts and Amazon reviews.

The table below summarizes how the overall sentiment (negative, neutral, positive) of the articles and reviews changed after AI summarization. Sentiment shifts occurred in both 'MediaSum (media interview summaries)' and 'Amazon (Amazon reviews),' including 'Neu→Pos (neutral→positive),' 'Neu→Neg (neutral→negative),' and 'Pos→Neg (positive→negative),' as well as the reverse directions. On average across the models tested, the sentiment changed in 26.42% of summaries.
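To make the 26.42% figure concrete: a sentiment-change rate of this kind is the share of (original, summary) pairs whose sentiment label differs. The sketch below is a minimal illustration, assuming each text has already been labeled "Neg", "Neu", or "Pos"; the function name `sentiment_shift_rate` and the toy labels are hypothetical and are not the study's code or data.

```python
from collections import Counter


def sentiment_shift_rate(pairs):
    """Return (fraction of pairs whose label changed, Counter of 'src→dst' transitions).

    `pairs` is a list of (original_label, summary_label) tuples.
    """
    if not pairs:
        raise ValueError("need at least one (original, summary) pair")
    # Count only the pairs where the summary's sentiment differs from the original's
    transitions = Counter(f"{src}→{dst}" for src, dst in pairs if src != dst)
    changed = sum(transitions.values())
    return changed / len(pairs), transitions


# Hypothetical toy labels for illustration only, not data from the study
pairs = [
    ("Neu", "Neu"),
    ("Neu", "Pos"),
    ("Neg", "Neg"),
    ("Pos", "Neg"),
    ("Pos", "Pos"),
    ("Neg", "Neu"),
    ("Neu", "Neu"),
    ("Pos", "Pos"),
]
rate, transitions = sentiment_shift_rate(pairs)
print(f"{rate:.2%}")        # 3 of 8 labels changed: 37.50%
print(dict(transitions))    # {'Neu→Pos': 1, 'Pos→Neg': 1, 'Neg→Neu': 1}
```

An average over several models, as reported in the article, would compute this rate separately for each model's summaries and then take the mean.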

This study also identified a phenomenon called

The research team also investigated whether the AI could distinguish truth from falsehood in news reported after its training cutoff. The models consistently performed poorly, struggling both to identify fabricated news and to tell fact from falsehood.

Abir Alessa, who previously studied computer science at the University of California, San Diego, and is currently a lecturer at King Saud University in Saudi Arabia, told the science media outlet Live Science, 'Large-scale language models tend to make incorrect judgments about whether the content of news articles actually happened. Even if an event actually occurred after the model has finished training, the model may mistakenly judge that it did not happen.'

Next, the research team conducted an experiment to investigate how AI-generated summaries affect human decision-making. A total of 72 participants read either a human-written product review or an AI-generated summary of that review, and then answered questions about their willingness to purchase the product.

To isolate the effect of nuance alteration, the study used human product reviews with a 'neutral' or 'negative' tone whose AI-generated summaries had shifted to a 'positive' tone. Participants were also offered a bonus for answers that matched the majority opinion, giving them an incentive to choose as the general public likely would.

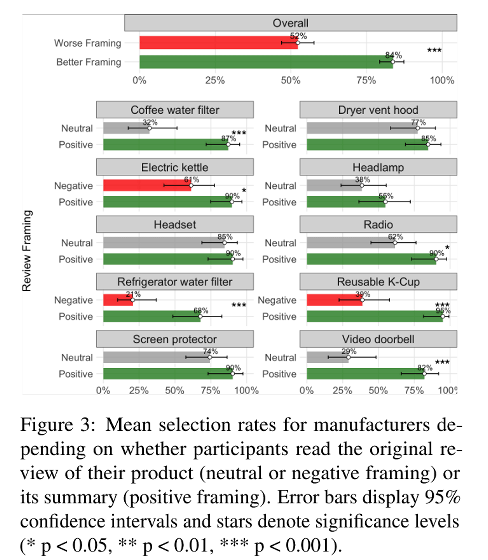

The experiment showed that participants were more likely to purchase a product after reading positive product reviews from AI than after reading neutral or negative product reviews from humans.

The graph below shows purchase intent for everyday items such as 'Coffee water filter' and 'Electric kettle' after reading either the original human review (neutral in gray, negative in red) or the positive AI summary (green). For every product, the positive AI summary produced higher purchase intent. In particular, purchase intent was 52% when the original human review was negative, but rose to 84% when the AI summary recast the review in a more positive tone.
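The jump from 52% to 84% can be read two ways, as an absolute gain in percentage points or as a relative increase over the baseline. A quick worked calculation using only the two figures reported above:

$$
\Delta_{\text{abs}} = 84\% - 52\% = 32 \text{ percentage points}, \qquad
\Delta_{\text{rel}} = \frac{84 - 52}{52} \approx 0.615 \;(\text{about } 61.5\%)
$$

In other words, purchase intent after the positive AI summary was roughly 1.6 times the level seen after the negative human review.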

The findings suggest that AI-driven nuance alteration could significantly change consumer judgment and purchasing behavior. Alessa points out that it could have an even greater impact when AI is used to summarize medical documents or school admissions materials. 'In these situations, a change in wording can alter how people perceive individuals or events,' she said.


in Free Member, AI, Science, Posted by log1h_ik