OpenAI releases 'GPT-5.4,' 'the most capable and efficient frontier model with superior agent performance' that can operate a PC better than humans

OpenAI released GPT-5.4, its latest frontier model designed for professional tasks, on March 5, 2026. The model is available through ChatGPT, the API, and Codex, and combines advances in reasoning, coding, and agentic workflows in a single model. Announced at the same time were 'GPT-5.4 Thinking,' which performs more advanced reasoning, and 'GPT-5.4 Pro,' which delivers the best performance on complex tasks. These models are designed to deliver high performance in knowledge work and professional practice.

Introducing GPT-5.4 | OpenAI

OpenAI announced GPT-5.3 Instant on March 4th. At the time, OpenAI hinted on X (formerly Twitter) that 'GPT-5.4 will be released sooner than you think.'

5.4 sooner than you think.

— OpenAI (@OpenAI) March 3, 2026

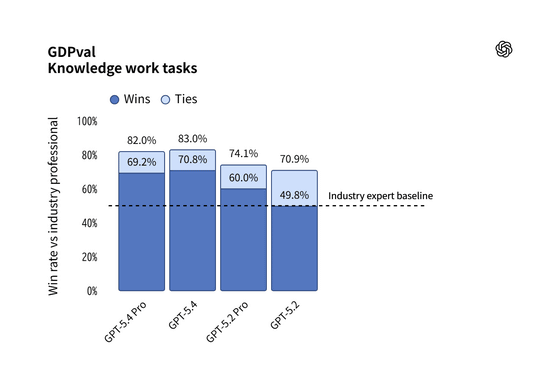

GPT-5.4 prioritizes accuracy and efficiency in real-world tasks, with marked improvements in work that spans tools such as spreadsheets, presentations, and documents. On the GDPval benchmark, which evaluates the quality of knowledge work across 44 occupations, GPT-5.4 scored 83.0%, well above the 70.9% achieved by its predecessor, GPT-5.2. OpenAI describes GPT-5.4 as 'the most capable and efficient frontier model for professional work.'

In addition, it achieved an average score of 87.3% on modeling tasks similar to those performed by investment bank analysts, up from the previous 68.4%. Factual accuracy has also improved: the probability of an incorrect response when a user enters a factually incorrect prompt is 33% lower, and the overall error rate is 18% lower than with GPT-5.2. Furthermore, OpenAI has released a beta version of '

The results of ARC-AGI-2 are as follows:

GPT-5.4 and GPT-5.4 Pro from @OpenAI on ARC-AGI Semi Private

— ARC Prize (@arcprize) March 5, 2026

ARC-AGI-2:

- GPT-5.4: 74.0%, $1.52/task

- GPT-5.4 Pro: 83.3%, $16.41/task

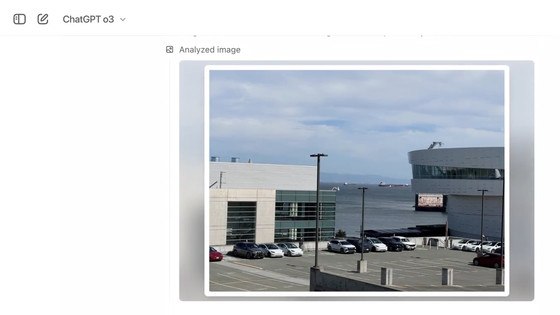

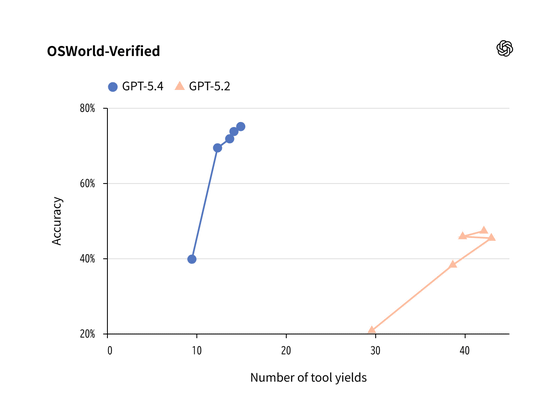

A notable feature of GPT-5.4 is that it is the first general-purpose model with native computer control capabilities. On OSWorld-Verified, a test that measures the ability to operate a desktop environment through screenshots and input devices, it scored 75.0%, exceeding the average human success rate of 72.4%.

Visual recognition has also improved substantially: high-resolution images of up to 10.24 million pixels (10.24M), with up to 6,000 pixels on the long side, can be processed at 'full fidelity,' meaning the model perceives them at their original resolution. This allows developers to build agents that complete business tasks across websites and software systems with greater reliability.
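The two image limits quoted above can be checked before an upload. The following is a minimal sketch, not OpenAI's implementation: the function name `fit_to_limits` is hypothetical, and the idea of proportionally downscaling an oversized image on the client is an assumption made for illustration, since the article does not say what the API does with images that exceed the limits.

```python
import math

# Limits quoted in the article for full-fidelity image input.
MAX_PIXELS = 10_240_000   # 10.24M total pixels
MAX_LONG_SIDE = 6_000     # longest edge, in pixels


def fit_to_limits(width: int, height: int) -> tuple[int, int]:
    """Return (width, height), scaled down proportionally if either limit is exceeded.

    Hypothetical helper: client-side downscaling is an assumption,
    not documented API behavior.
    """
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    scale = min(
        1.0,
        MAX_LONG_SIDE / max(width, height),         # long-side limit
        math.sqrt(MAX_PIXELS / (width * height)),   # total-pixel limit
    )
    if scale == 1.0:
        return width, height
    # Round down so the result never grows past the computed scale.
    return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))


if __name__ == "__main__":
    for size in [(4000, 2500), (8000, 1000), (5000, 5000)]:
        print(size, "->", fit_to_limits(*size))
```

Both limits apply together: a long, thin image can be under the pixel budget yet over the long-side limit, which is why the sketch takes the smaller of the two scale factors.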

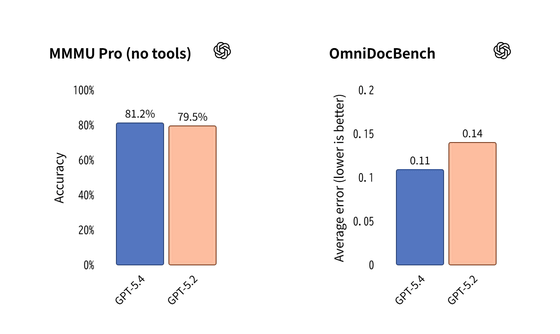

On MMMU-Pro, a benchmark that measures visual understanding and reasoning, GPT-5.4 scored 81.2% without any tools, a solid improvement over GPT-5.2's 79.5%. On OmniDocBench, GPT-5.4 achieved an average error of 0.109 with no reasoning effort, an improvement over GPT-5.2's 0.140. OpenAI attributes this to the introduction of a new image input level that supports full-fidelity perception.

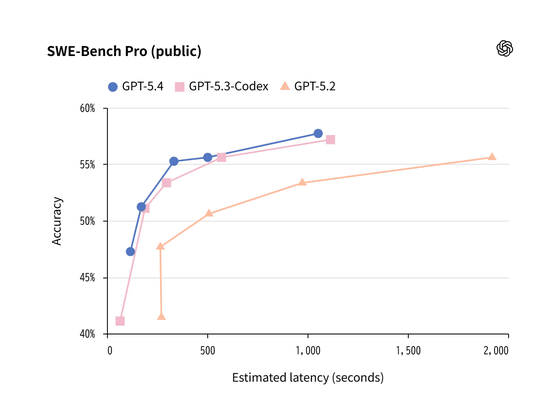

In coding, GPT-5.4 inherits the strengths of GPT-5.3-Codex. On SWE-Bench Pro, GPT-5.4 matched or exceeded GPT-5.3-Codex while achieving lower overall latency. Additionally, the newly introduced /fast mode in Codex generates tokens up to 1.5x faster while maintaining the model's intelligence.

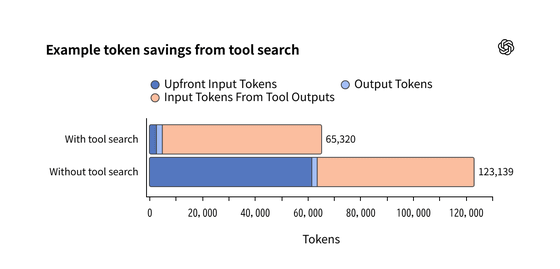

To improve efficiency when working with large tool sets, the API now includes a tool search feature. Instead of including every tool definition in the prompt, definitions are retrieved dynamically as needed. According to OpenAI, results on the MCP Atlas benchmark show that this reduces token usage by 47% while maintaining accuracy.
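The general idea of deferring tool definitions can be sketched as follows. This is an illustration of the concept only and does not use or reproduce OpenAI's tool search API: `ToolDef`, `ToolRegistry`, the sample tools, and the simple word-overlap matching are all hypothetical. The prompt would carry only the short name index, and full schemas would be looked up when a task calls for them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolDef:
    name: str
    description: str
    schema: str  # full parameter schema: the token-heavy part


def _words(text: str) -> set[str]:
    """Lowercase word set; underscores in tool names count as separators."""
    return set(text.lower().replace("_", " ").split())


class ToolRegistry:
    """Hypothetical registry: cheap index up front, full definitions on demand."""

    def __init__(self, tools: list[ToolDef]) -> None:
        self._tools = {tool.name: tool for tool in tools}

    def index(self) -> list[str]:
        """Names only; this is what would always be placed in the prompt."""
        return sorted(self._tools)

    def search(self, query: str, limit: int = 3) -> list[ToolDef]:
        """Return up to `limit` definitions ranked by words shared with the query."""
        query_words = _words(query)
        scored = []
        for tool in self._tools.values():
            hits = len(query_words & _words(f"{tool.name} {tool.description}"))
            if hits:
                scored.append((hits, tool.name, tool))
        scored.sort(key=lambda item: (-item[0], item[1]))
        return [tool for _, _, tool in scored[:limit]]


# Sample tools, invented for this example.
REGISTRY = ToolRegistry([
    ToolDef("send_email", "Send an email message to a recipient",
            '{"to": "string", "body": "string"}'),
    ToolDef("create_invoice", "Create a billing invoice for a customer",
            '{"customer_id": "string", "amount": "number"}'),
    ToolDef("query_calendar", "Look up calendar events in a date range",
            '{"start": "date", "end": "date"}'),
])

if __name__ == "__main__":
    print(REGISTRY.index())
    for tool in REGISTRY.search("send an email"):
        print(tool.name, tool.schema)
```

A production system would likely use a stronger retrieval method than word overlap; the point here is only that the prompt cost scales with the tools actually needed rather than with the size of the whole catalog.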

Additionally, Codex experimentally supports a one million (1M) token context window, enabling long-term task planning, execution, and validation, and offers new skills such as

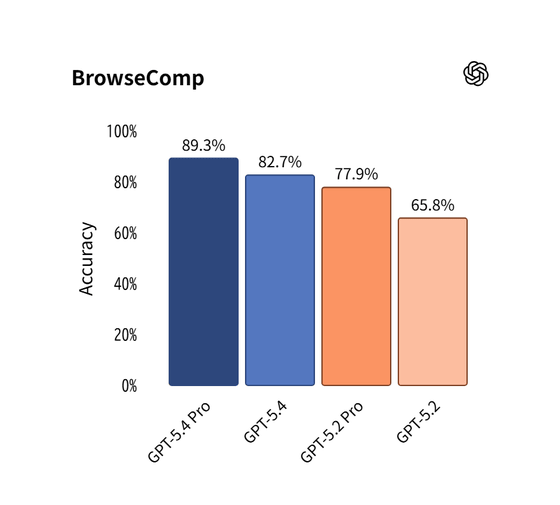

OpenAI also states that GPT-5.4 significantly improves the ability of autonomous agents to search the web and navigate browsers. On BrowseComp, a benchmark that measures the ability to persistently search for hard-to-find information on the web, GPT-5.4 scored 17 percentage points (absolute) higher than GPT-5.2. Furthermore, the top-of-the-line model, GPT-5.4 Pro, scored 89.3%, setting a new state of the art.

OpenAI states that, with the rollout of GPT-5.4, it has further improved on the safeguards introduced with GPT-5.3-Codex and implemented protections appropriate for a highly cyber-capable model. These consist of an expanded cyber safety stack that includes monitoring systems, trusted access control, and asynchronous blocking of high-risk requests in zero data retention (ZDR) environments.

In addition, research into chain-of-thought (CoT) monitoring is ongoing, and a newly introduced CoT controllability evaluation showed that GPT-5.4 Thinking has little ability to intentionally conceal its own reasoning. OpenAI said this is a positive characteristic from a safety perspective and indicates that CoT monitoring remains an effective tool.

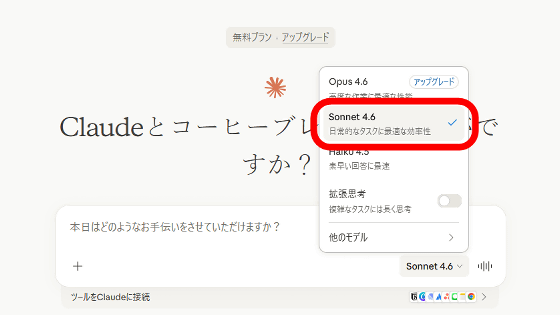

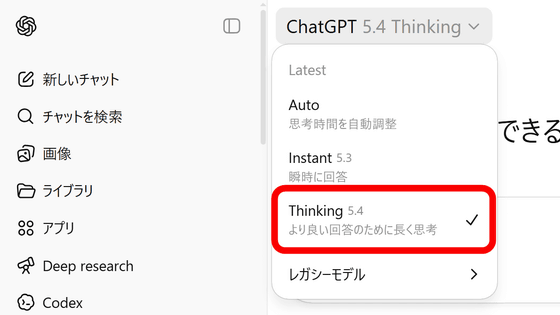

GPT-5.4 has been gradually rolled out through ChatGPT and Codex since Friday, March 6th, and is available in the API as 'gpt-5.4.' ChatGPT has begun offering GPT-5.4 Thinking to paid users of its Plus, Team, and Pro plans, replacing the previous GPT-5.2 Thinking model. The older GPT-5.2 Thinking model will continue to be available as a legacy model for three months until it is discontinued on June 5, 2026. The even more powerful GPT-5.4 Pro is available on the Pro and Enterprise plans, and is also available in the API as 'gpt-5.4-pro.'

API pricing is set higher than for GPT-5.2, reflecting the model's greater capabilities. The input price for gpt-5.4 is $2.50 (approximately 375 yen) per million tokens, and $0.25 (approximately 37.5 yen) per million tokens for cached input. The input price for the top-of-the-line model, gpt-5.4-pro, is $30 (approximately 4,500 yen) per million tokens. Additionally, batch and flex processing are available in the API at half the standard price, while priority processing costs double.
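As a rough worked example of the input prices above, the sketch below estimates input cost in US dollars. It is a back-of-the-envelope calculator under stated assumptions: the function name `input_cost_usd` and the tier labels are hypothetical, the half-price and double-price multipliers are assumed to apply uniformly to cached and uncached input, and output-token pricing is left out because the article does not give it.

```python
# Input prices in USD per million tokens, as quoted in the article.
INPUT_PRICE_PER_M = {"gpt-5.4": 2.50, "gpt-5.4-pro": 30.00}
# The article quotes a cached-input price only for gpt-5.4.
CACHED_INPUT_PRICE_PER_M = {"gpt-5.4": 0.25}
# Assumption: the tier multiplier applies to the whole input bill.
TIER_MULTIPLIER = {"standard": 1.0, "batch": 0.5, "flex": 0.5, "priority": 2.0}


def input_cost_usd(model: str, input_tokens: int,
                   cached_tokens: int = 0, tier: str = "standard") -> float:
    """Estimate input-token cost; output tokens are not covered."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    if cached_tokens and model not in CACHED_INPUT_PRICE_PER_M:
        raise ValueError(f"no cached-input price quoted for {model}")
    uncached_tokens = input_tokens - cached_tokens
    cost = uncached_tokens * INPUT_PRICE_PER_M[model] / 1_000_000
    if cached_tokens:
        cost += cached_tokens * CACHED_INPUT_PRICE_PER_M[model] / 1_000_000
    return cost * TIER_MULTIPLIER[tier]


if __name__ == "__main__":
    # One million input tokens, 40% of them served from cache.
    print(input_cost_usd("gpt-5.4", 1_000_000, cached_tokens=400_000))
    # The same volume on gpt-5.4-pro at the batch rate.
    print(input_cost_usd("gpt-5.4-pro", 1_000_000, tier="batch"))
```

Because cached input is priced at one tenth of regular input for gpt-5.4, the share of a prompt that hits the cache affects the bill far more than the choice between standard and batch processing.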

OpenAI argues that GPT-5.4 is significantly more token efficient than previous models, requiring fewer tokens to solve the same problem, ultimately reducing the total cost.


in AI, Posted by log1i_yk