OpenAI announces 'GPT-5.4 mini' and 'GPT-5.4 nano,' fast, low-cost lightweight models; GPT-5.4 mini is available even on the free plan.

On March 17, 2026, OpenAI announced 'GPT-5.4 mini' and 'GPT-5.4 nano,' lightweight versions of GPT-5.4.

OpenAI introduces GPT-5.4 mini and nano.

https://openai.com/ja-JP/index/introducing-gpt-5-4-mini-and-nano/

OpenAI explains, 'GPT-5.4 mini is designed for workloads where latency directly shapes the product experience, such as coding assistants that need to be responsive, sub-agents that quickly complete supporting tasks, computer-use systems that capture and interpret screenshots, and multimodal applications that reason over images in real time. In these settings, the largest model is not always the best choice. What matters is a model that responds quickly, uses tools reliably, and still performs well on complex, specialized tasks.'

Furthermore, OpenAI describes GPT-5.4 nano as 'the smallest and fastest version of GPT-5.4, designed for tasks where speed and cost matter most. It also offers significant performance improvements over GPT-5 nano and is suited to sub-agents handling classification, data extraction, ranking, and relatively simple supporting tasks.'
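As an illustration of the division of labor OpenAI describes, here is a minimal Python sketch of a dispatcher that sends the task types listed for nano (classification, data extraction, ranking) to GPT-5.4 nano and everything else to GPT-5.4 mini. The model ID strings `gpt-5.4-mini` and `gpt-5.4-nano`, the task-kind labels, and the `pick_model` helper are all hypothetical; check OpenAI's API documentation for the actual identifiers.

```python
# Hypothetical model IDs; confirm the exact names in OpenAI's API documentation.
MINI_MODEL = "gpt-5.4-mini"
NANO_MODEL = "gpt-5.4-nano"

# Task kinds OpenAI names as good fits for nano (labels are illustrative).
NANO_TASKS = frozenset({"classification", "data_extraction", "ranking"})


def pick_model(task_kind: str) -> str:
    """Return the model ID a sub-agent should use for the given task kind.

    Simple, cost-sensitive tasks go to nano; everything else (for example
    coding help or screenshot interpretation) goes to mini.
    """
    return NANO_MODEL if task_kind in NANO_TASKS else MINI_MODEL


if __name__ == "__main__":
    for kind in ("ranking", "code_review", "classification"):
        print(kind, "->", pick_model(kind))
```

A static lookup table is only the simplest starting point; a real router might also weigh input size or a latency budget.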

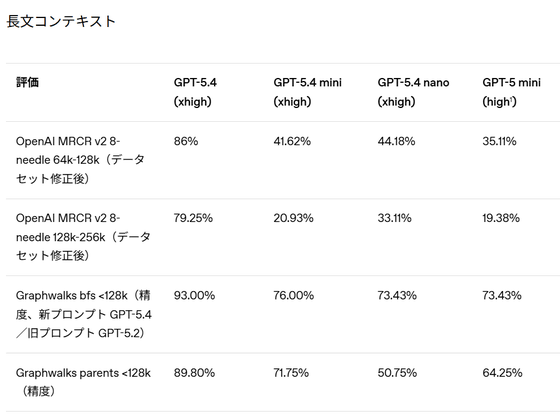

Across four benchmarks measuring reading comprehension, GPT-5.4 mini performed about the same as, or slightly better than, GPT-5 mini, but still fell short of GPT-5.4.

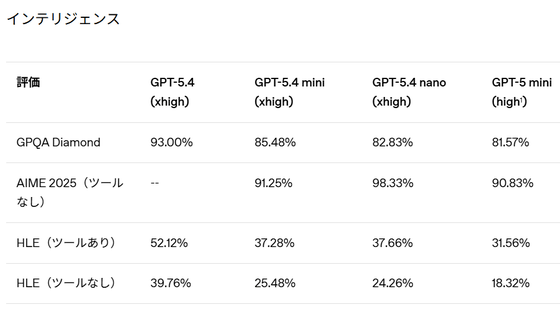

On benchmarks testing problem-solving in physics, chemistry, and mathematics, GPT-5.4 mini and nano scored slightly higher than GPT-5 mini.

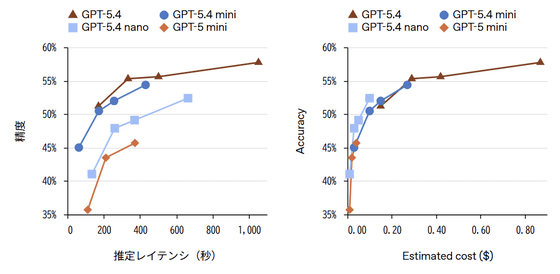

The graph below plots coding accuracy on SWE-Bench Pro (public version) against latency (left) and cost (right). GPT-5.4 mini is less accurate than GPT-5.4, but at similar latency it consistently outperforms GPT-5 mini. GPT-5.4 nano also beats GPT-5 mini's accuracy at the same latency and is more cost-effective than GPT-5.4.

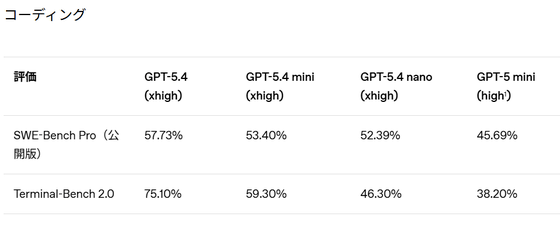

The table below compares coding benchmark results on SWE-Bench Pro and Terminal-Bench 2.0. GPT-5.4 mini and nano still trail GPT-5.4 in accuracy, but both outperform the previous-generation GPT-5 mini.

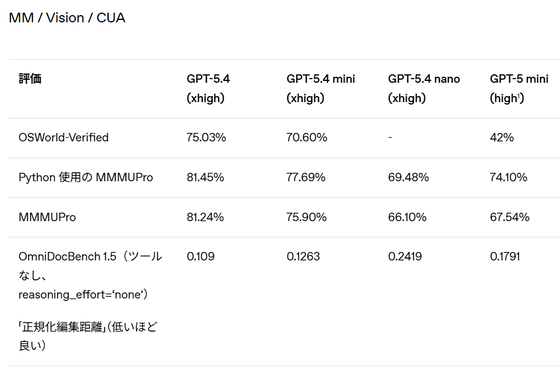

OpenAI states, 'GPT-5.4 mini is strong at multimodal tasks and particularly excels at computer-use tasks. The model can quickly interpret information-dense user interface screenshots and complete computer-use tasks rapidly.'

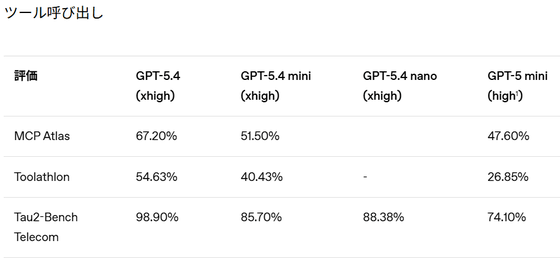

The tool-calling benchmark results are shown below. On Tau2-Bench Telecom, GPT-5.4 nano matched the accuracy of GPT-5.4 mini.

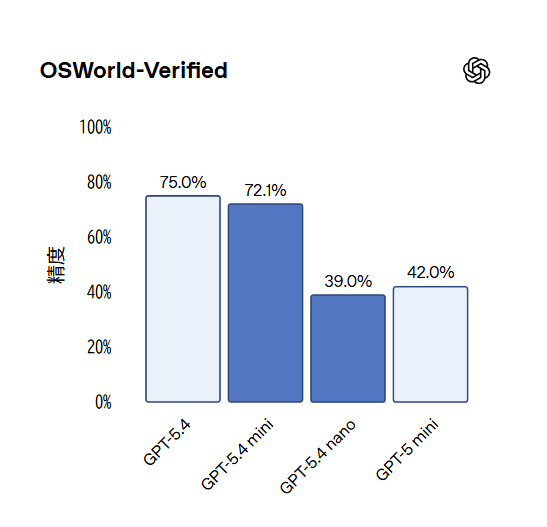

On benchmarks for voice understanding, image recognition, and computer use, GPT-5.4 mini scores higher than GPT-5 mini, while GPT-5.4 nano scores slightly below GPT-5 mini.


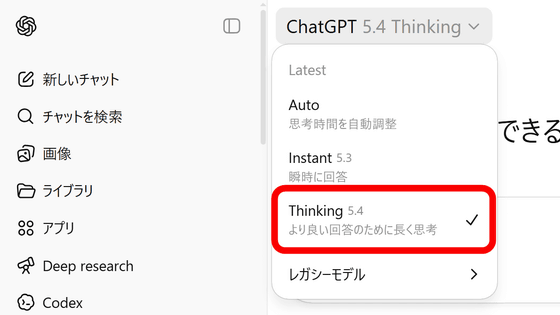

GPT-5.4 mini is available in ChatGPT, the API, and Codex starting March 18, the release date. In ChatGPT, Free and Go users can access GPT-5.4 mini through the 'Thinking' feature, while other paid users fall back to GPT-5.4 mini when GPT-5.4 Thinking reaches its rate limit.
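The ChatGPT availability rule above can be read as a small selection function. The sketch below encodes it in Python purely to illustrate the described behavior; it is not ChatGPT's actual implementation, and the plan labels (`"free"`, `"go"`, with any other string standing for another paid plan) and the `thinking_rate_limited` flag are hypothetical.

```python
# Hypothetical plan labels for users who reach GPT-5.4 mini via 'Thinking'.
MINI_THINKING_PLANS = frozenset({"free", "go"})


def thinking_model(plan: str, thinking_rate_limited: bool) -> str:
    """Return which model answers a 'Thinking' request under the described rule."""
    if plan in MINI_THINKING_PLANS:
        # Free and Go users get GPT-5.4 mini through the 'Thinking' feature.
        return "GPT-5.4 mini"
    # Other paid plans use GPT-5.4 Thinking and fall back to mini at the rate limit.
    return "GPT-5.4 mini" if thinking_rate_limited else "GPT-5.4 Thinking"


if __name__ == "__main__":
    print(thinking_model("free", False))
    print(thinking_model("plus", False))
    print(thinking_model("plus", True))
```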

In Codex, GPT-5.4 mini is available in the Codex app, the CLI, the IDE extension, and on the web. OpenAI says that because it consumes only 30% of the GPT-5.4 allocation, developers can handle relatively simple coding tasks quickly at about one-third of the cost.
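To see what the 30% figure means in practice, the sketch below works through the quota arithmetic with exact fractions. The quota size and the per-task cost in "quota units" are made-up numbers for illustration, and the helper names are hypothetical; only the 30% ratio comes from OpenAI's statement.

```python
from fractions import Fraction

# Per OpenAI, GPT-5.4 mini draws 30% of what GPT-5.4 would from the Codex allocation.
MINI_SHARE = Fraction(30, 100)


def mini_cost(gpt54_cost: Fraction) -> Fraction:
    """Quota a task consumes on GPT-5.4 mini, given its cost on GPT-5.4."""
    return gpt54_cost * MINI_SHARE


def tasks_that_fit(quota: Fraction, cost_per_task: Fraction) -> int:
    """Number of whole tasks of a given cost that fit in a fixed quota."""
    return quota // cost_per_task


if __name__ == "__main__":
    quota = Fraction(100)     # hypothetical allocation, in quota units
    task_cost = Fraction(10)  # hypothetical cost of one task on GPT-5.4
    print("GPT-5.4:", tasks_that_fit(quota, task_cost))
    print("GPT-5.4 mini:", tasks_that_fit(quota, mini_cost(task_cost)))
```

With these illustrative numbers, the same allocation covers 10 tasks on GPT-5.4 but 33 on GPT-5.4 mini, roughly 3.3 times as many.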

The API price for GPT-5.4 mini is $0.75 (about 120 yen) per 1 million input tokens and $4.50 (about 715 yen) per 1 million output tokens, with a context window of 400,000 tokens.
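A per-request cost estimate follows directly from these prices. The Python sketch below uses `Decimal` to avoid floating-point rounding in currency math and adds a context-window check. As a simplifying assumption, it treats input and output tokens as sharing the 400,000-token window; the helper names are hypothetical.

```python
from decimal import Decimal

# GPT-5.4 mini API prices quoted in the article (USD per 1 million tokens).
INPUT_USD_PER_M = Decimal("0.75")
OUTPUT_USD_PER_M = Decimal("4.50")
CONTEXT_WINDOW = 400_000  # tokens


def request_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimated USD cost of one GPT-5.4 mini request."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / Decimal(1_000_000)


def fits_context(input_tokens: int, output_tokens: int) -> bool:
    """Assumption: prompt and completion share the 400k-token window."""
    return input_tokens + output_tokens <= CONTEXT_WINDOW


if __name__ == "__main__":
    # Example: a 20,000-token prompt with a 2,000-token answer.
    print(request_cost_usd(20_000, 2_000), fits_context(20_000, 2_000))
```

For that example request the estimate is $0.024, well within the window.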

GPT-5.4 nano is available only through the API, priced at $0.20 (about 32 yen) per 1 million input tokens and $1.25 (about 198 yen) per 1 million output tokens.
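Putting the two price lists side by side shows how much cheaper nano is for the same token volume. The sketch below is an illustrative Python calculator that uses only the per-token prices quoted in this article; the workload size in the example is arbitrary, the helper name is hypothetical, and it ignores any other pricing factors that may apply.

```python
from decimal import Decimal

# (input, output) prices in USD per 1 million tokens, as quoted in the article.
PRICES_USD_PER_M = {
    "GPT-5.4 mini": (Decimal("0.75"), Decimal("4.50")),
    "GPT-5.4 nano": (Decimal("0.20"), Decimal("1.25")),
}


def workload_cost_usd(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """USD cost of processing the given token volume on one model."""
    in_price, out_price = PRICES_USD_PER_M[model]
    return (input_tokens * in_price + output_tokens * out_price) / Decimal(1_000_000)


if __name__ == "__main__":
    # Arbitrary example workload: 10M input tokens, 1M output tokens.
    for name in PRICES_USD_PER_M:
        print(name, workload_cost_usd(name, 10_000_000, 1_000_000))
```

For that example workload, nano comes to $3.25 versus $12.00 for mini, a bit over a quarter of the cost.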


in AI, Posted by log1i_yk