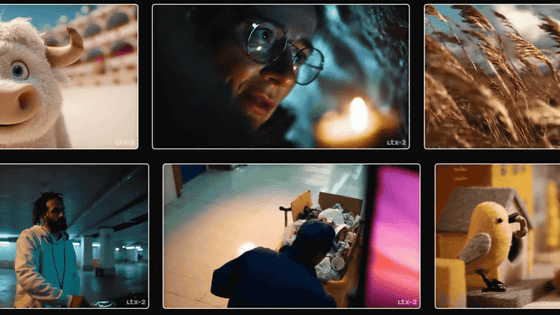

Locally runnable video generation AI 'LTX-2.3' released, along with the free PC app 'LTX Desktop'

The video generation AI model 'LTX-2.3' was released on March 6, 2026, Japan time. LTX-2.3 is an open model that can be run locally, and it improves on the previous-generation model in prompt understanding, visual detail, motion stability, and audio quality.

LTX-2.3: Introducing LTX's Latest AI Video Model | LTX Model

https://ltx.io/model/ltx-2-3

Introducing LTX-2.3: Our Most Production-Ready Model Yet - YouTube

The LTX-2 series is a family of AI models capable of generating video with audio, and it is gaining popularity alongside Alibaba's Wan 2.2 as a model that runs locally. The newly released LTX-2.3 was built with image-to-video (I2V) processing in mind from the training stage onward, which improves the accuracy of workflows such as supplying multiple images and generating a video that transitions naturally from one image to the next.

Keyframes and structured control are now more deeply integrated.

LTX-2.3 is trained with multi-task objectives from the pretraining stage, including image-to-video, retake, keyframes, and more.

This makes transitions, controlled scene evolution, and multi-shot workflows more… pic.twitter.com/IXGoECapkq

Posted by LTX-2 (@ltx_model), March 5, 2026

The text encoder has also been made four times larger, improving the model's ability to understand prompts and to accurately follow instructions about camera work, composition, and character movement. In addition, the VAE has been updated to produce finer detail and more stable motion.

The biggest upgrade is visual fidelity and motion stability.

A new video VAE and late refined space deliver sharper fine detail and more stable motion.

Image-to-video holds together better, small textures survive compression, and last-frame interpolation makes endings feel… pic.twitter.com/WGF1pwNphF

Posted by LTX-2 (@ltx_model), March 5, 2026

Audio quality has also been improved, resulting in clearer, more accurate audio with less noise.

Audio quality also improved across the board.

A new vocoder increases dialogue clarity and sound realism. Cross-modal alignment between audio and video is tighter.

Stronger filtering and improved data processing reduce noisy outputs and improve overall audio fidelity.

6/7 pic.twitter.com/ulZmw5V7fJ

Posted by LTX-2 (@ltx_model), March 5, 2026

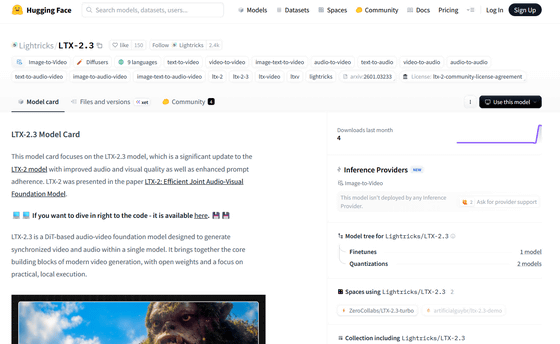

The LTX-2.3 model data is available at the following link:

Lightricks/LTX-2.3 · Hugging Face

https://huggingface.co/Lightricks/LTX-2.3
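For readers who would rather fetch the model files from a script than through the browser, the following is a minimal sketch using the third-party `huggingface_hub` package. The repository ID is taken from the URL above; the helper names `list_model_files` and `download_model` and the default target directory are illustrative assumptions, not part of any official LTX tooling. The repository is likely to be large, so listing the files before downloading is a sensible first step.

```python
# Sketch only: requires `pip install huggingface_hub` and network access.
REPO_ID = "Lightricks/LTX-2.3"  # repository ID taken from the Hugging Face URL


def list_model_files(repo_id: str = REPO_ID) -> list[str]:
    """Return the file names in the repository without downloading them."""
    from huggingface_hub import list_repo_files  # imported lazily on purpose

    return list_repo_files(repo_id)


def download_model(local_dir: str = "./LTX-2.3", repo_id: str = REPO_ID) -> str:
    """Download the whole repository and return the local folder path.

    `local_dir` is a hypothetical default; point it at a drive with
    enough free space before calling this.
    """
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, local_dir=local_dir)


# Example usage (commented out so that nothing is downloaded by accident):
# for name in list_model_files():
#     print(name)
# path = download_model()
```

Neither function runs on import, so the snippet can be pasted into a larger script and called only when a download is actually wanted.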

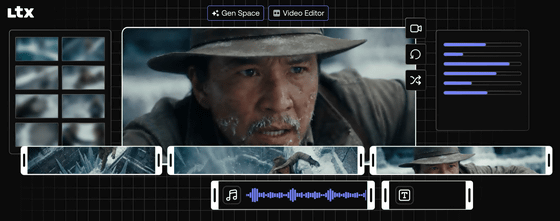

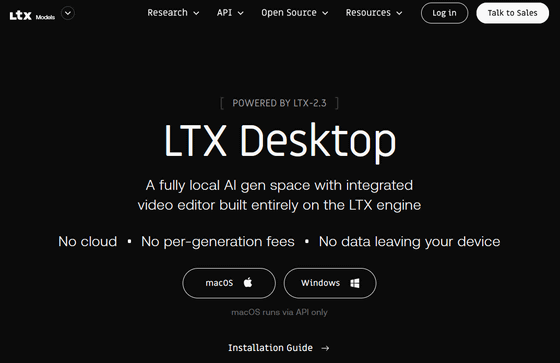

Along with the announcement of LTX-2.3, the company also released 'LTX Desktop,' a desktop app that generates videos using LTX-2.3. LTX Desktop is available for Windows and macOS. The Windows version runs LTX-2.3 locally, while the macOS version runs LTX-2.3 via an API. The Windows version requires at least 32GB of VRAM, 32GB of RAM, and 60GB of storage. At the time of writing, it supports only NVIDIA GPUs, but support for AMD and Intel GPUs is in development.
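The minimum requirements for the Windows version can be expressed as a small checklist. The sketch below is purely illustrative: the `MachineSpec` class and the `unmet_requirements` function are hypothetical names, the numbers are the ones quoted in this article, and the specs are entered by hand rather than detected from the hardware.

```python
from dataclasses import dataclass

# Minimum requirements for the Windows version, as quoted in the article.
MIN_VRAM_GB = 32
MIN_RAM_GB = 32
MIN_STORAGE_GB = 60
SUPPORTED_GPU_VENDORS = {"nvidia"}  # AMD and Intel support is still in development


@dataclass
class MachineSpec:
    """Hypothetical description of a PC; the values are filled in by hand."""
    gpu_vendor: str
    vram_gb: float
    ram_gb: float
    free_storage_gb: float


def unmet_requirements(spec: MachineSpec) -> list[str]:
    """Return the reasons a machine falls short (empty list if it qualifies)."""
    problems = []
    if spec.gpu_vendor.lower() not in SUPPORTED_GPU_VENDORS:
        problems.append(f"GPU vendor '{spec.gpu_vendor}' is not supported yet")
    if spec.vram_gb < MIN_VRAM_GB:
        problems.append(f"VRAM: {spec.vram_gb} GB available, {MIN_VRAM_GB} GB required")
    if spec.ram_gb < MIN_RAM_GB:
        problems.append(f"RAM: {spec.ram_gb} GB available, {MIN_RAM_GB} GB required")
    if spec.free_storage_gb < MIN_STORAGE_GB:
        problems.append(
            f"Storage: {spec.free_storage_gb} GB free, {MIN_STORAGE_GB} GB required"
        )
    return problems


if __name__ == "__main__":
    # Example: a PC with a 24 GB NVIDIA card, plenty of RAM and disk space.
    pc = MachineSpec(gpu_vendor="NVIDIA", vram_gb=24, ram_gb=64, free_storage_gb=500)
    issues = unmet_requirements(pc)
    print("Meets the minimum requirements" if not issues else "\n".join(issues))
```

Returning a list of reasons rather than a single yes/no makes it clear which component would need upgrading.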

LTX Desktop can be downloaded from the link below.

Free AI Video Production Suite: Generate & Edit Videos Online | LTX Desktop

https://ltx.io/ltx-desktop

ComfyUI also supports running LTX-2.3.

LTX-2.3 is now supported in ComfyUI

Major quality improvements from @Lightricks:

- Finer visual details
- Better 9:16 portrait videos
- Cleaner audio
- More stable image-to-video motion
- Smarter prompt understanding
- Clearer text rendering

Examples 🧵👇 pic.twitter.com/AH2wK6Etj7

Posted by ComfyUI (@ComfyUI), March 5, 2026
