Z.ai releases industry-leading character recognition AI 'GLM-OCR' as open source, lightweight enough to run locally

Z.ai, a Chinese AI company, has released a multimodal OCR model called GLM-OCR , which is specialized for document understanding. GLM-OCR has been developed with an extremely small parameter count of just 0.9B (900 million), while aiming to analyze and extract complex document layouts with high accuracy.

zai-org/GLM-OCR · Hugging Face

https://huggingface.co/zai-org/GLM-OCR

The technical foundation of GLM-OCR is the GLM-V encoder-decoder architecture, which combines the CogViT visual encoder pre-trained on large-scale image and text datasets, a lightweight cross-modal connector for efficient token downsampling, and the GLM-0.5B language decoder.

The model integrates the CogViT visual encoder pre-trained on large-scale image–text data, a lightweight cross-modal connector with efficient token downsampling, and a GLM-0.5B language decoder. Combined with a two-stage pipeline of layout analysis and parallel recognition based… pic.twitter.com/Y2wtTsjdKQ

— Z.ai (@Zai_org) February 3, 2026

Furthermore, to improve training efficiency and recognition accuracy, multi-token prediction (MTP) loss and stable full-task reinforcement learning are introduced, and a two-stage pipeline of layout analysis and parallel recognition based on PP-DocLayout-V3 delivers high performance even for difficult documents. While conventional OCR can sometimes lose structure due to inserted tables and annotations, GLM-OCR performs advanced layout analysis to recognize text and images while taking into account the entire document structure.

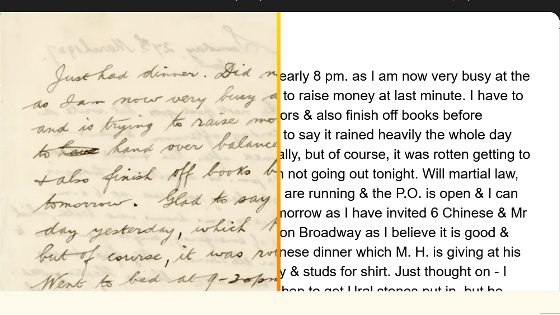

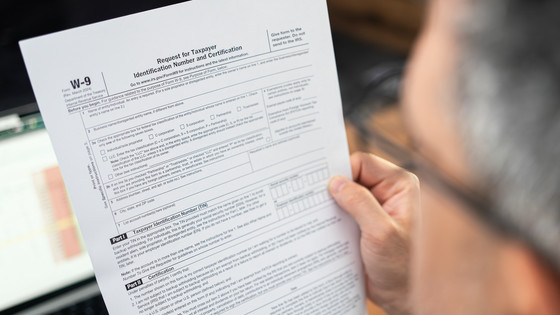

Optimized for real-world scenarios: It handles complex tables, code-heavy docs, official seals, and other challenging elements where traditional OCR fails. pic.twitter.com/n1y62bohjD

— Z.ai (@Zai_org) February 3, 2026

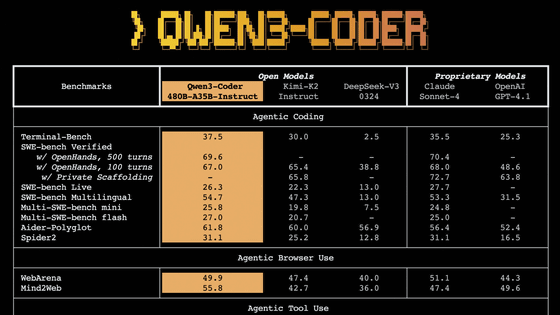

GLM-OCR achieved a score of 94.62 on the OmniDocBench V1.5 benchmark, outperforming competing OCR models. It also achieved state-of-the-art performance across a wide range of tasks, including formula and table recognition and structured information extraction. This demonstrates that GLM-OCR is a practical model with powerful processing capabilities despite its small size.

Introducing GLM-OCR: SOTA performance, optimized for complex document understanding.

— Z.ai (@Zai_org) February 3, 2026

With only 0.9B parameters, GLM-OCR delivers state-of-the-art results across major document understanding benchmarks, including formula recognition, table recognition, and information extraction.… pic.twitter.com/2c6iSsaXYs

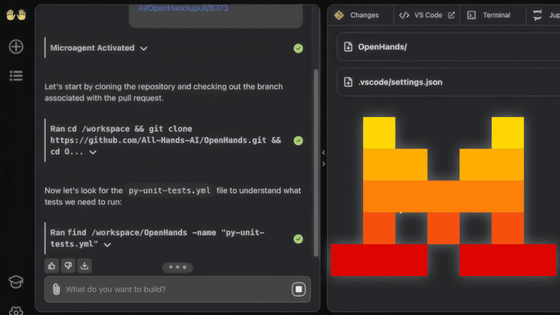

Z.ai points out that the greatest advantage of implementing and operating GLM-OCR is that its extremely light parameter count of approximately 900 million enables low-cost, high-speed inference even in local environments using major frameworks such as vLLM, SGLang, and Ollama. Being able to operate GLM-OCR on-premises will make it easier to digitize even highly confidential company documents.

GLM-OCR also proves its excellent efficiency in actual operational performance. In a processing speed test using the same hardware, single replica, and single concurrent execution to write Markdown from image and PDF input, it achieved a high throughput of 1.86 pages per second for PDF document processing and 0.67 pages per second for image input, significantly exceeding existing models in the same class.

GLM-OCR achieves a throughput of 1.86 pages/second for PDF documents and 0.67 images/second for images, significantly outperforming comparable models. pic.twitter.com/luNKI59hig

— Z.ai (@Zai_org) February 3, 2026

GLM-OCR has also been optimized for real-world business scenarios, and can flexibly handle cases that were difficult to handle with conventional OCR, such as complex tables with merged cells, technical documents with extensive code, and receipts containing seals and multiple languages, and can output accurate HTML and JSON formats.

GLM-OCR is fully open source, emphasizing contributions to the development community, and the source code is publicly available through Hugging Face's zai-org repository. The model itself is licensed under the MIT License, but PP-DocLayoutV3, which is part of the pipeline, is licensed under the Apache License 2.0, so users must comply with both licenses when using it.

Related Posts:

in AI, Posted by log1i_yk