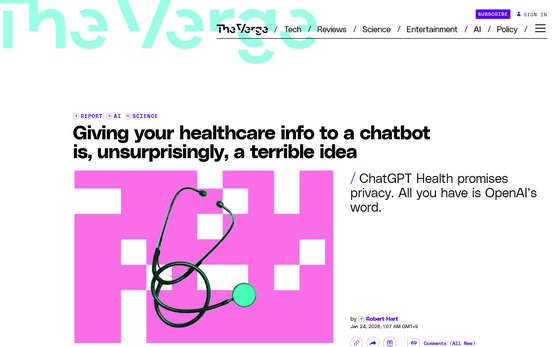

Experts point out that private health information should not be entered into 'ChatGPT Healthcare'

OpenAI announced

Giving your healthcare info to a chatbot is, unsurprisingly, a terrible idea | The Verge

https://www.theverge.com/report/866683/chatgpt-health-sharing-data

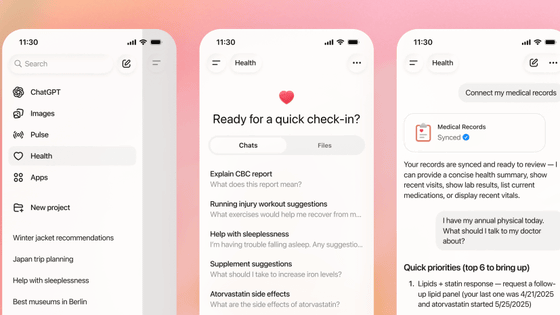

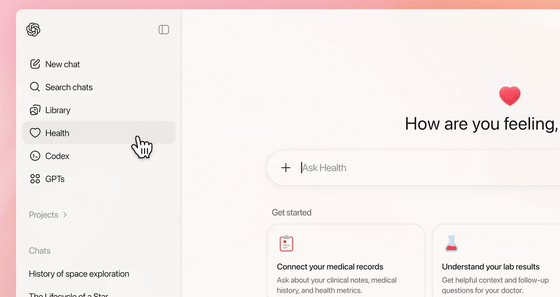

According to 'AI as a Healthcare Ally,' a report published by OpenAI, more than 5% of all ChatGPT messages worldwide are health-related, which shows how widely AI is already being used for health questions. ChatGPT Healthcare connects medical records and wellness apps to ChatGPT, so users can ask health-related questions and receive answers tailored to their personal data.

OpenAI announces 'ChatGPT Healthcare,' which connects to personal health records and app data to conduct medical interviews - GIGAZINE

By linking data from medical records and wellness apps, users hand ChatGPT highly private information such as their height, weight, age, and dietary habits. Confidentiality matters even more because some people turn to AI for mental health advice precisely because they are too embarrassed to talk to another person. ChatGPT Healthcare offers data encryption, a purpose-built design, and tools that let users manage their own data. OpenAI also emphasizes privacy protection and says that health-related conversations and data from connected apps will not be used to train its foundation models.

However, several experts warn that people should be cautious about trusting ChatGPT with sensitive personal health information, even if the company promises to protect their data.

Sara Gerke, a law professor at the University of Illinois Urbana-Champaign, pointed out that most US states lack comprehensive privacy laws, which leaves few safeguards against violations or misuse of personal data. Although ChatGPT Healthcare claims to be committed to data protection, it is not bound to comply with any specific law. Its protections rest solely on promises made in its privacy policy and terms of use, so they are not legally guaranteed, Gerke said.

Hannah van Kolfschooten, a digital health law researcher at the University of Basel in Switzerland, explained, 'ChatGPT's current terms of service say it will keep data confidential and will not use it to train models, but users are not protected by law, and the terms of service can change at any time. Ultimately, we have no choice but to trust the company's promises.' Carmel Shachar, an associate professor of clinical law at Harvard Law School, likewise noted that OpenAI can change its terms at any time and expressed concern that ChatGPT Healthcare is not subject to the US Health Insurance Portability and Accountability Act (HIPAA).

Beyond privacy, the accuracy of AI responses is also a concern. Chatbots frequently produce false or misleading information, and in health and medicine even a small error can be life-threatening. Google, for example, removed its AI Overviews from some health-related search queries after it emerged that they were giving misleading information for those searches.

Google hides 'AI-generated summaries' for certain health-related search queries because they provided misleading information - GIGAZINE

According to a report by The Washington Post, columnist Geoffrey A. Fowler had ChatGPT Health analyze about 10 years of his Apple Watch data and asked experts to review the results. They concluded that the assessment was 'unsubstantiated and not worthy of medical advice.' The report also noted that when the same question was asked repeatedly, the health grade swung between 'F' and 'B' even though the underlying data never changed.

You can now connect ChatGPT to an Apple Watch.

So I imported 29 mil steps and 6 mil heartbeats into the new ChatGPT Health.

It graded my heart an F.

Cardiologist @erictopol called it “baseless.”

Any bot claiming to give health insights shouldn't be this clueless. Even in beta. 🧵 pic.twitter.com/5ciq1fYGQ7

Geoffrey A. Fowler (@geoffreyfowler), January 26, 2026

Addressing the risk that AI could give patients incorrect health information, OpenAI has stated that 'ChatGPT Healthcare is intended solely to support close collaboration with doctors and is not intended for diagnosis or treatment.' According to Gerke, OpenAI's position that the product is 'not intended as a medical device' carries real weight with regulators: even if users rely on it for medical purposes, it is likely to escape strict regulatory oversight.

However, OpenAI has also promoted ChatGPT Healthcare as a 'helpful tool for health consultations,' which some critics say could mislead users. 'It's only natural to question whether a tool like ChatGPT Healthcare should be classified as a medical device and subject to strict regulation,' van Kolfschooten said.


in Free Member, AI, Posted by log1e_dh