OpenAI signs multi-year contract with AI chip maker Cerebras worth ¥1.5 trillion to expand real-time AI with Cerebras' low-latency inference system

On January 14, 2026, local time, OpenAI announced a partnership with Cerebras, a company that builds dedicated AI systems to accelerate long outputs from AI models.

OpenAI partners with Cerebras | OpenAI

OpenAI Partners with Cerebras to Bring High-Speed Inference to the Mainstream

https://www.cerebras.ai/blog/openai-partners-with-cerebras-to-bring-high-speed-inference-to-the-mainstream

OpenAI signs deal, reportedly worth $10B, for compute from Cerebras | TechCrunch

https://techcrunch.com/2026/01/14/openai-signs-deal-reportedly-worth-10-billion-for-compute-from-cerebras/

The partnership between OpenAI and Cerebras will see the integration of Cerebras' AI systems into OpenAI's computing solutions, which OpenAI explained will 'significantly improve the response speed of AI.'

According to sources familiar with the matter, the deal between OpenAI and Cerebras is expected to exceed $10 billion.

OpenAI explained that it plans to gradually integrate Cerebras' low-latency systems into its inference stack and scale them across its workloads. Specifically, it plans to add 750 MW of ultra-low-latency AI computing to its platform.

OpenAI and Cerebras have met frequently since 2017 to share research results and early-stage work, and share a common belief that 'hardware architectures must be integrated to scale AI models.' This partnership is designed to make this happen.

'OpenAI's computing strategy is to match the right systems with the right workloads to build a highly resilient portfolio,' OpenAI said.

'We are thrilled to partner with OpenAI to bring the world's leading AI models to the world's fastest AI processors,' said Andrew Feldman, co-founder and CEO of Cerebras. 'Just as broadband transformed the internet, real-time inference will transform AI, enabling entirely new ways of building and interacting with AI models.'

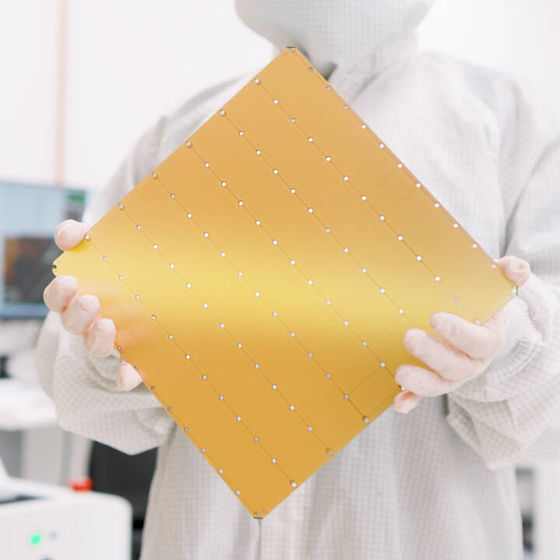

According to Cerebras, large language models (LLMs) running on its low-latency inference system respond up to 15 times faster than LLMs running on GPU-based systems, a significant advantage that Cerebras says will lead to increased consumer engagement and innovative applications.

Regarding Cerebras' low-latency inference solution, OpenAI explained that it plans to 'operate in multiple phases by 2028.' Cerebras explained, 'We have signed a multi-year contract to deploy Cerebras' 750MW wafer-scale system. This deployment will be rolled out in stages starting in 2026, making it the world's largest high-speed AI inference system.'

in AI, Posted by logu_ii