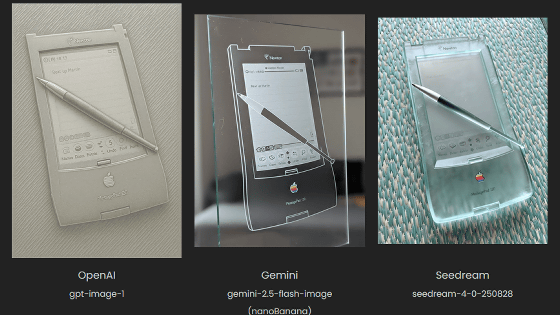

Why is Google's image-generating AI 'Nano Banana' so good?

Since

Nano Banana can be prompt engineered for extremely nuanced AI image generation | Max Woolf's Blog

https://minimaxir.com/2025/11/nano-banana-prompts/

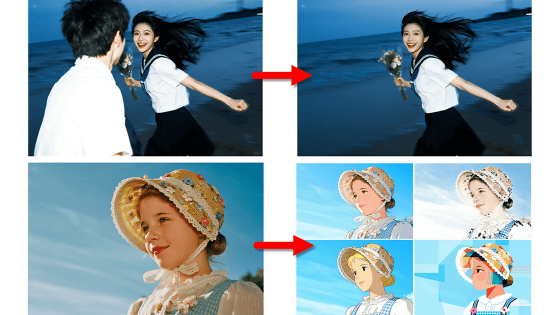

Wolf points out that ChatGPT has styles for common use cases. One is that images generated by ChatGPT 'often have a yellowish tint' (left). Another is that 'the line art and typography of manga and text are similar' (right).

Most image generation models use

Nano Banana, an image generation AI released by Google at the end of August 2025, is also an image generation AI that natively works with Gemini 2.5 Flash and uses an autoregressive model. With the release of Nano Banana, the Gemini app has risen to the top of smartphone app stores.

Google releases free, ultra-high-quality image editing AI 'Gemini 2.5 Flash Image,' which can be instructed in Japanese and can also convert live-action images into anime characters - GIGAZINE

Regarding Nano Banana, Wolf said, 'Personally, I'm not interested in comparing which image generation AI generates the best-looking images. What I care about is how faithfully the AI follows the prompts I enter. If the model cannot follow the requirements (prompts) I require for the image, that model is not suitable for me. If the model is faithful to the prompt, the 'bad-looking' point can be corrected with prompt engineering or traditional image editing tools. As a result of inputting the 'ridiculously complex prompt' I created into Nano Banana, I was able to confirm that Nano Banana's robust text encoder achieves very strong prompt fidelity. It can be said that Google is underestimating the superior capabilities of Nano Banana, 'he said, praising the ability to generate images very faithfully even for complex prompts.

Furthermore, when using Nano Banana via API , it costs approximately $0.04 (approximately 6 yen) to generate one 1024 x 1024 pixel image. This is roughly equivalent to a typical diffusion model, despite being an autoregressive model. Nano Banana is also significantly cheaper than OpenAI's 'gpt-image-1,' which costs $0.17 (approximately 26 yen) to generate one image under the same conditions.

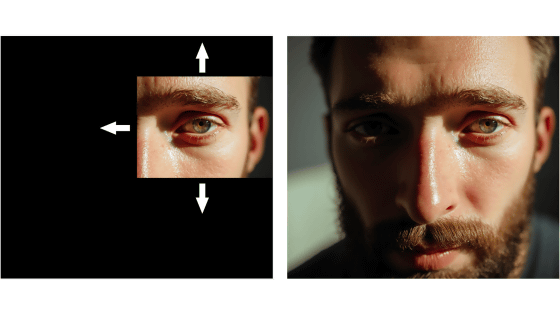

Wolf tested the Nano Banana's image generation accuracy with a variety of prompts, including the one below.

Create an image of a three-dimensional pancake in the shape of a skull, garnished on top with blueberries and maple syrup.

'The reason I like this prompt is not only because it's an absurd prompt that gives the image generation model room to be creative, but also because it forces the AI model to logically express how it processes maple syrup and how it drips from the skull pancakes onto the bony breakfast,' Wolf wrote.

The image generated by Nano Banana shows a skull made of pancake-like dough, topped with blueberries and maple syrup. Wolf has used similar prompts to generate images with various image-generating AIs, and praised Nano Banana as 'one of the best results I've ever seen.'

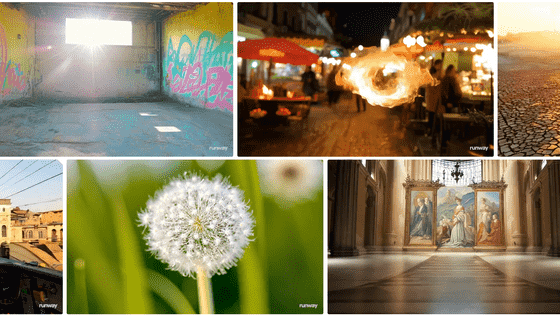

Nano Banana also has an image editing function. This editing function makes it possible to edit only specific areas of an image, but Wolf points out that this type of editing was difficult with diffusion model-based image generation AI until the advent of Flux Kontext. Regarding why autoregressive models are suitable for this type of editing, Wolf explains that 'autoregressive models have a better understanding of the adjustment of specific tokens corresponding to areas of the image, which theoretically makes editing easier.'

Wolf edited the pancake skull image above using the following prompts:

Make ALL of the following edits to the image:

- Put a strawberry in the left eye socket.

- Put a blackberry in the right eye socket.

- Put a mint garnish on top of the pancake.

- Change the plate to a plate-shaped chocolate-chip cookie.

- Add happy people to the background.

(Please make all of the following edits to your image:

- Place a strawberry in the left eye socket

- Place a blackberry in the right eye socket

- Place mint garnish on top of pancakes

- Change the plate to a plate-shaped chocolate chip cookie

- Added happy people in the background)

All five editing prompts were accurately reflected, with only essential changes made, such as removing some blueberries to make way for mint garnishes and adjusting the maple syrup pooling on the new cookie plate. Wolf wrote of the results, 'I was really impressed. I feel like I can tackle more challenging prompts.'

One of the most fascinating yet least discussed use cases for modern generative image models is the ability to place a subject from an input image into another scene. With open-weight generative image models, it is possible to train a model even if a particular subject or person is not included in the original training dataset, using techniques such as fine-tuning the model with

For example, one approach is to prepare a few sample images of the target object and use LoRA to fine-tune the model. Training LoRA is not only computationally intensive and expensive, but also requires careful and precise work, so it doesn't always work.

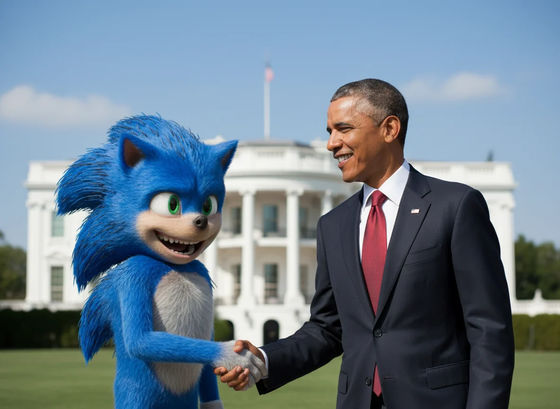

However, Nano Banana can 'place a subject in another scene' without the need for LoRA. Below is an image of the live-action movie version of Sonic before the character design change and an image output after entering the prompt.

Create an image of the character in all the user-provided images smiling with their mouth open while shaking hands with President Barack Obama.

Wolf further edited the output image using the following prompts, as the image had problems such as the background blur being too 'aesthetic' and not realistic.

Pulitzer-prize-winning cover photo for the New York Times

Regarding this result, Wolf said, 'This is the most beautifully rendered New York Times logo I have ever seen. It is no exaggeration to say that Nano Banana was somehow trained on the New York Times.' 'Nano Banana, like many other image generation models, is still not good at rendering text perfectly or without typos. However, the unfolded text is strange. Despite 'Blue Blur' (Sonic) being the usual nickname for Sonic the Hedgehog, it is correctly interpreted from the prompt. How does an image generation model generate logical text without a prompt in the first place?' 'The image itself certainly looks more professional, especially the unique composition of photographs taken by professional photojournalists. Specifically, adherence to the '

Next, the image below shows the prompt entered to remove the New York Times-style elements.

Do not include any text or watermarks.

Stable Diffusion, known as an early image-generating AI, used CLIP to encode text, which was open-sourced by OpenAI in 2021. CLIP is very primitive compared to modern Transformer- based text encoders, with a context window of only 77 tokens.

Nano Banana, on the other hand, works with Gemini 2.5 Flash, which supports an agent coding pipeline and trains models with a large amount of Markdown and JSON. Gemini 2.5 Flash is also explicitly trained to understand objects in images and has the ability to create subtle segmentation masks. Wolf pointed out that this allows Nano Banana's multimodal encoder to handle prompts other than typical image caption-style prompts as an extension of Gemini 2.5 Flash. This allows it to generate images that understand the intent of the prompt even in the absence of specific instructions.

Based on this, Wolf entered the Nano Banana prompt and output image below. The prompt contains a hexadecimal color code instead of the color name, and a typo of 'San Francisco,' which is intended to verify the true value of Nano Banana.

Create an image featuring three specific kittens in three specific positions.

All of the kittens MUST follow these descriptions EXACTLY:

- Left: a kitten with prominent black-and-silver fur, wearing both blue denim overalls and a blue plain denim baseball hat.

- Middle: a kitten with prominent white-and-gold fur and prominent gold-colored long goatee facial hair, wearing a 24k-carat golden monocle.

- Right: a kitten with prominent #9F2B68-and-#00FF00 fur, wearing a San Franciso Giants sports jersey.

Aspects of the image composition that MUST be followed EXACTLY:

- All kittens MUST be positioned according to the 'rule of thirds' both horizontally and vertically.

- All kittens MUST lay prone, facing the camera.

- All kittens MUST have heterochromatic eye colors matching their two specified fur colors.

- The image is shot on top of a bed in a multimillion-dollar Victorian mansion.

- The image is a Pulitzer Prize winning cover photo for The New York Times with neutral diffuse 3PM lighting for both the subjects and background that complement each other.

- NEVER include any text, watermarks, or line overlays.

(Create an image of three kittens in a specific position.

All kittens must adhere strictly to the following description:

- Left: A kitten with striking black and silver fur, wearing blue denim overalls and a plain blue denim baseball cap.

- Center: A kitten with striking white and gold fur and long golden whiskers wearing a 24-karat gold monocle.

- Right: A kitten with striking fur colors #9F2B68 and #00FF00 wearing a San Francisco Giants uniform.

Strict adherence to image composition:

- All kittens must be arranged according to the rule of thirds both horizontally and vertically.

- All kittens must be lying face down facing the camera.

- All kittens must be

- The scene was shot on a bed in a Victorian mansion worth millions of dollars.

- The photo is a Pulitzer Prize-winning New York Times cover photo, and both the subject and background are in neutral, diffused light (equivalent to 3 p.m.) and harmonize with each other.

- May not contain any text, watermarks or line overlays.)

Wolf praised the resulting image, saying it accurately followed all of the prompt instructions.

The output image of ChatGPT when the same prompt is entered is shown below. Wolf notes that it is 'poorly structured and aesthetically pleasing, and exhibits many of the characteristics of generative AI.'

However, Nano Banana isn't perfect. Here's the result of trying to get Nano Banana to transform an image into a Studio Ghibli-style image. The original image is on the left, and the output image is on the right. The prompt is 'Make me into Studio Ghibli.'

Wolf wrote, 'Surprisingly, Nano Banana is extremely bad at style transfer, even with all the ingenuity of prompt engineering. This is a feature not seen in other modern image editing models. We suspect that the autoregressive model-derived properties that enable Nano Banana's excellent text editing make it too resistant to style changes.'

Nano Banana also seems to have almost no restrictions on intellectual property rights, making it easy to output popular IPs and even include multiple different IPs in a single image.

Wolf said, 'Some people may wonder why I'm writing about how to use generative AI to create highly specific, high-quality images in an era when it's threatening creative work. The reason is that the information asymmetry between what image-generating AI can and can't do has only increased in recent months. Many people still believe that ChatGPT is the only way to generate images and that all AI-generated images are a collection of images with a yellow filter. The only way to counter this perception is evidence and reproducibility. That's why this blog post not only details the image generation pipeline for each image, but also includes prompts. It's important to show that these image generation is as advertised and not the result of AI boosterism. You can copy and paste these prompts into AI Studio to get similar results, or you can hack and iterate to discover new things. Most of the prompt techniques I've introduced in this blog post are already well known among AI engineers far more experienced than I am, and even if I turn a blind eye, I can't stop people from using image-generating AI in this way. '

Related Posts:

in AI, Posted by logu_ii