Anthropic updates Claude's terms of service and privacy policy, sparking outrage

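Anthropic, the developer of the AI assistant Claude, has updated its consumer terms of service and privacy policy, and the changes have drawn criticism from users.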

Updates to Consumer Terms and Privacy Policy

https://www.anthropic.com/news/updates-to-our-consumer-terms

On August 29, 2025, Anthropic announced updates to its Consumer Terms of Service and Privacy Policy. Anthropic said the updates will help it deliver more capable and useful AI models. The company also said it will let users choose whether their data is used to improve Claude and to strengthen safeguards against harmful uses such as fraud and abuse.

The updated Consumer Terms of Service and Privacy Policy apply to users of Claude Free, Claude Pro, and Claude Max, including when they use Claude Code through accounts associated with these plans. They do not apply to services covered by the commercial terms of service, such as Claude for Work, Claude Gov, or Claude for Education, or to API use, including through third parties such as Amazon Bedrock or Google Cloud's Vertex AI.

Anthropic explained that data from users who opt in will 'improve the accuracy of our harmful content detection systems, reducing the likelihood of harmless conversations being flagged. It will also contribute to improving the coding, analysis, and reasoning skills of future Claude models, ultimately leading to better models for all users.'

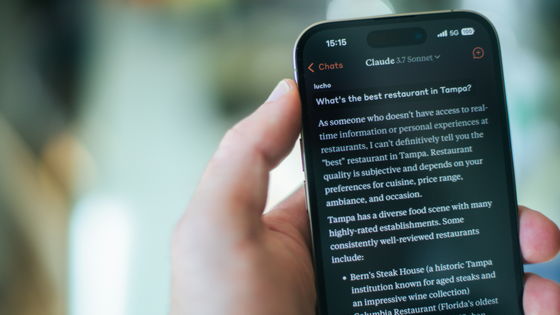

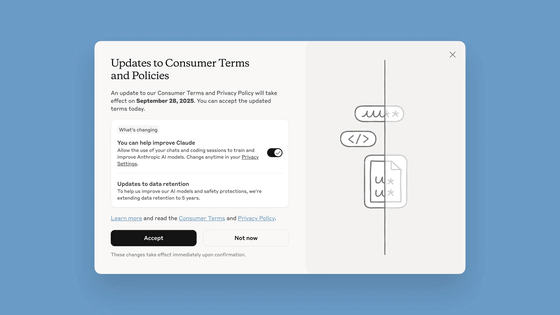

Existing users will be asked whether they agree to the new Consumer Terms of Service and Privacy Policy, as shown below. One change is that the option to 'Help improve Claude' (using your interactions with Claude to train AI models) is now enabled by default.

The new Consumer Terms of Service and Privacy Policy are scheduled to go into effect on September 28, 2025, but users can already accept them at the time of writing. They apply only to new or resumed chat and coding sessions, not to past interactions.

If you allow your chat or coding sessions to be used for AI model training, the data retention period is extended to five years. This longer period appears to apply only to sessions that are new or resumed after you agree to the new Consumer Terms of Service and Privacy Policy. If you delete a conversation with Claude, that conversation will not be used for future model training. If you do not allow your data to be used for model training, the existing 30-day data retention period continues to apply.

Anthropic's new Consumer Terms of Service and Privacy Policy have drawn mixed reactions on the social news site Hacker News.

Updates to Consumer Terms and Privacy Policy | Hacker News

https://news.ycombinator.com/item?id=45062683

One user pointed out that the pop-up informing users of Anthropic's new Consumer Terms of Service and Privacy Policy resembles a dark pattern.

Another user pointed out that although users can opt out of the new setting, it is enabled by default and is presented in what looks like a mere notice of updated terms, which the user found suspicious. The same user also argued that a five-year data retention period is too long.

One user said, 'I'm using Claude to help me with my math research, and I'm concerned about a scenario where it suggests unpublished research ideas from our chats or coding sessions to another user, and that person takes them to be their own.'

Another said, 'Everyone seems unsurprised by this move, but I'm really shocked. What a self-destructive business decision. Google is evil, but they're not showing Gmail text in search results, because if they did, no one would want to use Gmail. The same applies to large language models (LLMs).'

A third said, 'I tried to avoid having too many personal conversations with Claude because I don't trust it in the first place. However, we have had many superficial discussions about topics I'm interested in, and we've talked about my experience and background on those topics. So I'm deleting all our chats and deleting my Claude account.'

Furthermore, when asked why Anthropic updated its consumer terms of service and privacy policy, one commenter said, 'It's not surprising. The big companies are reaching the limits of training their AI with existing data. They're already training their AI using essentially the entire internet, plus a ton of allegedly stolen content (various lawsuits have been filed). We haven't seen any significant advances in model architecture from the big companies recently, and they're now in a race to acquire training data. They need data, they want your data now, and they'll go to increasingly shady lengths to get it.'

Regarding the use of user chat logs to train AI models, one commenter wrote, 'We believe chat logs hold surprising signals. Given the hundreds of millions of users and the diverse tasks that will accumulate over time, this is probably the most efficient way to improve AI. The ability to directly reuse human and AI work experience in AI models could accelerate both problem exploration and the application of good ideas, potentially accelerating progress.'

Some users support Anthropic's new policy. One said, 'Wouldn't it be great for us if LLMs could be trained on past conversations? Without this data, LLMs wouldn't improve much. That said, I recognize the risk of having a handful of companies responsible for something as important as the collective knowledge of civilization. Is self-management the long-term solution? Organizations and individuals might use and train models locally to protect and distribute the learning results internally. Of course, costs would need to drop significantly for this to be feasible.' Another said, 'Great. If you want to prioritize data privacy, just don't use your own data. The idea that "no one wants to share their data" is now commonplace, but I've never had a terrible experience or seen any major disasters as a result of sharing data. Honestly, it seems like a good deal to me.'
