Science announces that all of its journals will use AI to check for fraudulent images

Science, one of the world's most prestigious academic journals, announced on January 4, 2024 that it will introduce artificial intelligence (AI) across all of its journals to automate the detection of 'fraudulent images' presented as research results.

Genuine images in 2024 | Science

All Science journals will now do an AI-powered check for image fraud | Ars Technica

https://arstechnica.com/science/2024/01/all-science-journals-will-now-do-an-ai-powered-check-for-image-fraud/

Improvements in image processing technology, together with the shift to workflows in which everything from paper submission to publication can be done digitally, have made it easier to commit 'research fraud' by altering images presented as evidence of research results. This has been a problem for many years. Traditionally, a submitted paper is peer-reviewed by experts who examine the authenticity of its content and results, but it can be difficult to catch every image that has been intentionally manipulated to mislead. If falsified results are discovered after the fact, the careers of the experts who failed to detect them during peer review can be seriously damaged.

Detecting fraud in research papers has sometimes relied on specialists who spot reused or altered images with the naked eye and memory, but in many cases papers have been screened with Adobe Photoshop, which can enlarge, invert, and overlay images. Meanwhile, the development of AI tools that detect fraudulently manipulated images has been progressing, and it was reported in October 2023 that tools much faster and more accurate than human reviewers have appeared.

Research results show that AI tools that detect 'unauthorized images' in scientific papers are already more accurate than humans - GIGAZINE

In response, Science announced that it will introduce Proofig, an AI-powered image analysis tool, to detect falsified images in all six journals published by Science.

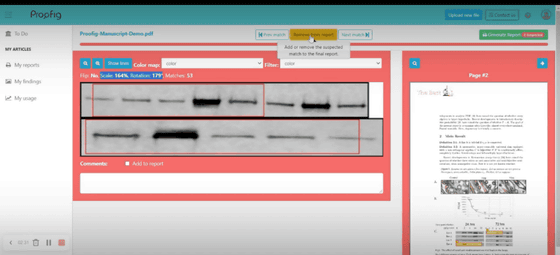

Proofig analyzes images and generates a report that flags signs of manipulation such as overlap with past data, rotation, scale distortion, and splicing. Because a rotated image can be perfectly legitimate depending on the research, the paper's editor manually reviews the AI-generated report and decides whether each flagged item is actually a problem. According to Science, several months of piloting Proofig produced clear evidence that it can detect problematic images before they are published.
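To make the idea of a 'rotated copy' concrete, here is a minimal Python sketch. It is not Proofig's method, which the article does not describe; it only illustrates one simple way a rotated or mirrored duplicate could be recognized. The function names (`rotate90`, `flip_horizontal`, `canonical_key`, `is_rotated_or_flipped_copy`) are hypothetical, images are assumed to be small grids of grayscale integers, and only exact pixel-for-pixel copies are matched. Rescaled, cropped, or recompressed images would need a tolerant comparison that is out of scope here.

```python
from typing import List, Tuple

Image = List[List[int]]  # assumption: grayscale pixels as rows of integers


def rotate90(img: Image) -> Image:
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def canonical_key(img: Image) -> Tuple[Tuple[int, ...], ...]:
    """Return one key shared by all 8 rotations and mirrorings of an image."""
    variants = []
    current = img
    for _ in range(4):
        variants.append(current)
        variants.append(flip_horizontal(current))
        current = rotate90(current)
    # The smallest variant (in tuple ordering) serves as the shared key.
    return min(tuple(tuple(row) for row in v) for v in variants)


def is_rotated_or_flipped_copy(a: Image, b: Image) -> bool:
    """True if b is an exact rotated and/or mirrored copy of a."""
    return canonical_key(a) == canonical_key(b)


if __name__ == "__main__":
    original = [[1, 2, 3], [4, 5, 6]]
    suspect = rotate90(original)
    print(is_rotated_or_flipped_copy(original, suspect))
```

A match from a check like this is only a flag. As the article notes, a rotated image can be legitimate, so a human editor still has to judge it.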

In response to Science's announcement, technology media outlet Ars Technica said, 'Proofig can detect problems with a certain degree of accuracy, and it is desirable for problems to be found before a paper is published. However, it is important to emphasize that AI-based detection systems cannot catch everything.' For example, Proofig detects duplication by checking images against a database, which works when data has been taken from past research papers. However, if the data appeared in a non-commercial paper in a fairly minor field, there is a high possibility that the database will not cover it. In addition, if the falsified image is derived from unpublished research results rather than from images in published papers, duplication checks cannot detect it.
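The coverage limitation described above can be illustrated with a small Python sketch. It is an assumption-laden toy, not a description of how Proofig or its database works: the class `PublishedImageIndex`, its methods, and the source label are all hypothetical, and an exact SHA-256 hash stands in for whatever image comparison a real tool uses.

```python
import hashlib
from typing import Dict, Optional


def fingerprint(image_bytes: bytes) -> str:
    """Exact-match fingerprint of an image file (illustrative stand-in)."""
    return hashlib.sha256(image_bytes).hexdigest()


class PublishedImageIndex:
    """Hypothetical index of images taken from already published papers."""

    def __init__(self) -> None:
        self._sources: Dict[str, str] = {}

    def add(self, image_bytes: bytes, source: str) -> None:
        """Record an image together with the paper it came from."""
        self._sources[fingerprint(image_bytes)] = source

    def find_source(self, image_bytes: bytes) -> Optional[str]:
        """Return the earlier source of the image, or None if it is not indexed."""
        return self._sources.get(fingerprint(image_bytes))


if __name__ == "__main__":
    index = PublishedImageIndex()
    index.add(b"pixels of an indexed figure", "hypothetical earlier paper")

    # A figure reused from an indexed paper is found.
    print(index.find_source(b"pixels of an indexed figure"))
    # A figure from unpublished or unindexed work returns None,
    # even if it was in fact reused.
    print(index.find_source(b"pixels of an unpublished figure"))
```

A result of `None` means only that the index holds no record of the image, not that the image is original, which is the gap Ars Technica points out.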

In an editorial published at the end of 2023, Science described 2024 as 'a year that will bring many challenges.' Science says that by adding image detection AI to the peer review of papers, increasing monitoring, and handling research errors carefully, 'we hope to build stronger trust and integrity in science over the next year.'


in Science, Posted by log1e_dh