Meta Announces Purple Llama, an AI Safety Project Providing Tools and Evaluations to Improve the Safety of Open Generative AI Models

Meta has announced the launch of Purple Llama, a project that provides developers with tools and evaluations to improve safety when building products with open generative AI models.

Introducing Purple Llama for Safe and Responsible AI Development | Meta

Announcing Purple Llama: Towards open trust and safety in the new world of generative AI

https://ai.meta.com/blog/purple-llama-open-trust-safety-generative-ai/

In cybersecurity, a team that emulates attackers' tools and techniques to test the effectiveness of defenses is called a red team, while the security team that defends systems against both real attackers and red teams is called a blue team. Meta applied this concept to generative AI risk assessment and named the project "Purple" to make clear that attack and defense work together to reduce risk.

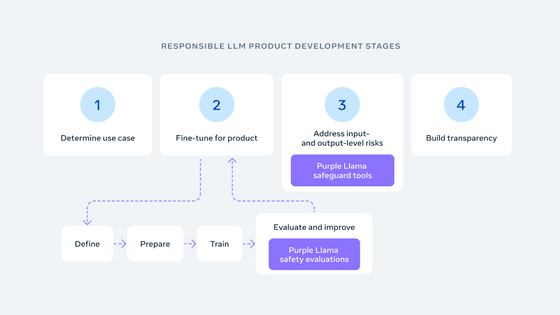

Purple Llama's goal is to help developers deploy generative AI models responsibly, following best practices shared in Meta's Responsible Use Guide. The project launched with the release of CyberSec Eval and Llama Guard.

CyberSec Eval is a set of cybersecurity safety evaluation benchmarks for large language models (LLMs). It includes "metrics for quantifying the cybersecurity risk of LLMs," "tools for evaluating how often a model suggests insecure code," and "tools for evaluating LLMs to make it harder to generate malicious code or help carry out cyberattacks." It is said to help reduce the risks outlined in

Llama Guard is a tool that detects potentially risky or policy-violating content. It supports filtering by checking all inputs to, and outputs from, a large language model.
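The input and output checking described above can be sketched roughly as follows. This is only an illustrative sketch, not Llama Guard's actual interface: `toy_classifier`, `guarded_generate`, `echo_model`, the blocklist, and the refusal message are all hypothetical names invented for this example, and the keyword match stands in for a real safety model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    safe: bool
    reason: str = ""


# Hypothetical stand-in for a safety classifier such as Llama Guard.
# A real deployment would call the model here; this toy version only
# matches a small blocklist so the example stays self-contained.
BLOCKLIST = ("build a bomb", "steal credentials")

REFUSAL = "Sorry, I can't help with that."


def toy_classifier(text: str) -> Verdict:
    lowered = text.lower()
    for phrase in BLOCKLIST:
        if phrase in lowered:
            return Verdict(safe=False, reason=f"matched: {phrase}")
    return Verdict(safe=True)


def guarded_generate(
    prompt: str,
    generate: Callable[[str], str],
    classify: Callable[[str], Verdict] = toy_classifier,
) -> str:
    # Check the user input before it reaches the model.
    if not classify(prompt).safe:
        return REFUSAL
    response = generate(prompt)
    # Check the model output before it reaches the user.
    if not classify(response).safe:
        return REFUSAL
    return response


def echo_model(prompt: str) -> str:
    # Placeholder for an LLM call (assumption for this sketch).
    return f"You said: {prompt}"
```

Calling `guarded_generate("hello", echo_model)` passes both checks and returns the model's reply, while a prompt or a response that the classifier flags is replaced by the refusal message. Running the same classifier on both sides is one simple design; a real system might apply different policies to inputs and outputs.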

Purple Llama takes an open approach. Meta will collaborate with the AI Alliance, AMD, AWS, Google Cloud, Hugging Face, IBM, Intel, Lightning AI, Microsoft, MLCommons, NVIDIA, Scale AI, and many other companies to improve and develop the platform, and the tools it provides will be available to the open source community.

Partners Together.AI and Anyscale plan to demonstrate the tools and provide technical details at a NeurIPS workshop to be held from December 10 to 16, 2023.
