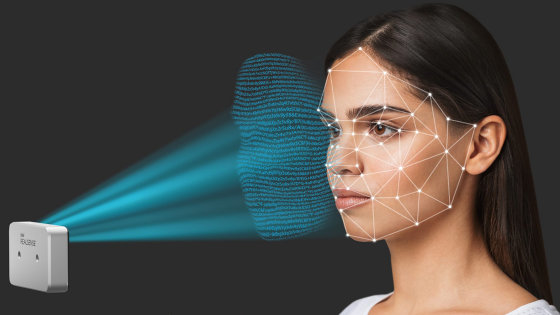

Why is 'emotion detection technology', in which AI reads emotions from facial expressions, considered dangerous?

by Dazzle Jam

Algorithms that let a computer read human facial expressions and detect emotions, built on AI technology developed in recent years, have attracted attention and are expected to be used in security and education. However, some researchers who study human emotion warn that it is dangerous to put technology that reads emotions from facial expressions into practical use.

Don't look now: why you should be worried about machines reading your emotions | Technology | The Guardian

https://www.theguardian.com/technology/2019/mar/06/facial-recognition-software-emotional-science

Emotion detection requires two components: a computer program that accurately identifies the facial expressions in an image, and a machine learning algorithm that reads emotions from patterns in those expressions. The algorithm is generally trained with supervised learning. For an algorithm that reads emotions from facial expressions, a common approach is to label images of human faces with emotions such as “happy”, “sad”, and “angry”, and to teach the algorithm many such pairings of expression and emotion.
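The supervised learning step described above can be sketched in a few lines of Python. This is only an illustration: the two numeric features (`mouth_curve`, `brow_raise`), the sample values, and the nearest-centroid model are all assumptions chosen for brevity, not the method used by any company mentioned in this article.

```python
from collections import defaultdict
from math import dist

# Hypothetical training data: (mouth_curve, brow_raise) feature pairs, each
# labeled with an emotion by a human annotator. Values are invented.
TRAINING_DATA = [
    ((0.9, 0.2), "happy"),
    ((0.8, 0.3), "happy"),
    ((-0.7, -0.2), "sad"),
    ((-0.8, -0.1), "sad"),
    ((-0.3, -0.9), "anger"),
    ((-0.2, -0.8), "anger"),
]


def train(samples):
    """Average the feature vectors of each label (a nearest-centroid model)."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: tuple(sum(axis) / len(axis) for axis in zip(*vectors))
        for label, vectors in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid lies closest to the given features."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


centroids = train(TRAINING_DATA)
print(predict(centroids, (0.7, 0.1)))  # prints "happy"
```

The model can only ever answer with one of the labels it was trained on, which is why the choice of labels matters so much to the criticism discussed later in the article.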

Rana el Kaliouby, an Egyptian-American computer scientist, is known as a leader in facial expression recognition research. In the early 2000s, Kaliouby researched ways for computers to identify emotions, and during her doctoral studies she created a device that helped children with Asperger's syndrome read and respond to facial expressions. Kaliouby describes this device as an "emotional hearing aid".

Kaliouby founded a startup named Affectiva in 2009 and has marketed her emotion detection technology as a market research product, claiming that it lets companies capture real-time reactions to advertising and products. Large IT companies such as Amazon, Microsoft, and IBM now also promote "emotion analysis" as part of their face recognition technology. Beyond market research, technology that detects emotions from facial expressions is used to monitor drivers, to evaluate the user experience of video games, and to assess patients in medical institutions.

Kaliouby has been involved in computer emotion detection since its early research stage and has watched it grow into a $20 billion industry in about 20 years. As the field continues to grow, Kaliouby predicts that the technology will eventually be built into every computer, so that human emotions are detected and acted on in an instant.

by Kaique Rocha

Affectiva holds an emotion data repository of about 7.5 million samples collected from 87 countries, much of it video of people's faces as they watch TV or drive a car. Human annotators analyze the expressions captured in the video and label them with the corresponding emotions, and the resulting data set is used to train Affectiva's algorithm.

The way emotions are labeled against facial expressions across the emotion detection field derives from a system called the Emotion Facial Action Coding System (EMFACS), proposed by American psychologist Paul Ekman and colleagues. Ekman held that emotions such as anger, disgust, fear, happiness, sadness, and surprise exist universally regardless of culture or race. He showed images of people's faces to diverse groups around the world and asked what emotion the person in each image was feeling. He found that, despite great cultural differences between the groups, people could read the emotions of those from other cultures.

Ekman then mapped facial expressions to emotions based on these findings, and systems that read emotions from facial expressions came to be widely used in psychiatry and criminal investigation. The idea that true emotions show through facial expressions has taken hold around the world, and Ekman was the model for Cal Lightman, the behavioral analyst in the American TV drama "Lie to Me".

However, many researchers doubt Ekman's method of detecting emotions. Lisa Feldman Barrett, a psychologist at Northeastern University, points out a problem with the research underlying Ekman's claim that universal emotions are shared across cultures. She argues that the experimental method, in which subjects were handed a set of emotion labels in advance and asked to pick the one that matched each expression, influenced the results. In fact, when Barrett ran the experiment without giving labels in advance and let subjects freely describe the emotion they read from each image, the degree of agreement between expressions and emotions across cultures dropped sharply.
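To see why the answer format alone can change the measured agreement, consider the following minimal sketch. The responses are invented for illustration and are not Barrett's data; `agreement` is a hypothetical helper that reports the share of viewers who gave the most common answer.

```python
from collections import Counter


def agreement(responses):
    """Fraction of responses that match the single most common answer."""
    most_common_count = Counter(responses).most_common(1)[0][1]
    return most_common_count / len(responses)


# Invented answers from six viewers looking at the same scowling face.
# With a fixed menu of labels, viewers must pick the nearest option.
forced_choice = ["anger", "anger", "anger", "anger", "anger", "disgust"]
# With free description, the same viewers may use many different words.
free_label = ["anger", "frustrated", "concentrating", "anger", "tired", "annoyed"]

print(f"forced choice: {agreement(forced_choice):.2f}")
print(f"free label:    {agreement(free_label):.2f}")
```

A fixed menu funnels varied impressions into a handful of categories, so apparent agreement can be high even when viewers' unprompted readings differ.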

Barrett holds that there are no universal emotions that produce a common reaction across cultures. She argues that expressions arise from the combination of brains that differ from person to person and factors such as the environment and culture in which a person was born and raised. On this view, it is meaningless to analyze the emotions of people from different cultures and environments based on their expressions.

Meredith Whittaker, an AI researcher at New York University, is another researcher who sees problems in putting AI emotion detection into practical use. Whittaker believes that building machine learning algorithms on classic emotion mappings can be socially harmful. "At companies that use face recognition and emotion detection in recruiting, and in classrooms that use emotion detection to measure how well students are concentrating, incorrect emotion analysis could adversely affect job searches and academic evaluations," Whittaker said.

by Rebecca Zaal

Kaliouby says she is well aware of the view that AI emotion detection is dangerous. Affectiva says that it labels emotions using video rather than still images so that judgments can take context into account, and that it collects data on people of different races from many countries because doing so is important for preventing bias in the data. "We need diverse data, such as whites, Asians, people with darker skin, and people wearing the hijab," Kaliouby said.

In fact, in analyzing data sets from different countries and ethnic groups, Affectiva has found that emotions are expressed differently from country to country. For example, Kaliouby points out that Brazilians show happiness through smiles, while Japanese people smile not only to show happiness but also to be polite. To build such cultural differences into the algorithm, Affectiva trains a separate layer on ethnic differences in expression, but identifying ethnic differences can lead to racial discrimination.

In response to these concerns, Kaliouby says that the emotion detection algorithm does not currently classify people by race. At present, data sets are classified by geography, such as "data taken in Brazil" and "data taken in Japan". However, this means that a Japanese person who appears in data taken in Brazil is classified as Brazilian data, and the "polite smile" may go unrecognized. Kaliouby acknowledged, "At the moment, our technology is not 100% perfect."
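The limitation of classifying by capture location can be illustrated with a small sketch. The lookup table and the function `interpret_smile` are hypothetical and greatly simplified; they are not Affectiva's implementation, only a way to show how keying on geography hides the person's own background.

```python
# Hypothetical per-region readings of a detected smile, following the
# Brazil/Japan example in the text.
SMILE_MEANINGS_BY_REGION = {
    "Brazil": ["happiness"],
    "Japan": ["happiness", "politeness"],
}


def interpret_smile(capture_region):
    """Look up candidate meanings using only where the footage was taken."""
    return SMILE_MEANINGS_BY_REGION.get(capture_region, ["happiness"])


# A Japanese visitor filmed in Brazil is handled as Brazilian data,
# so the "polite smile" reading is never considered.
print(interpret_smile("Brazil"))
print(interpret_smile("Japan"))
```

Because the only input is the capture region, nothing about the individual can change the result, which is the gap Kaliouby acknowledges.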

by rawpixel.com


in Note, Posted by log1h_ik