Amazon scrapped its top-secret AI recruiting tool because it turned out to be biased against women

by geralt

Amazon had been secretly developing a system that uses AI to evaluate job applicants' résumés, but it has emerged that the company scrapped the tool because the AI favored men and rated women unfavorably.

Amazon scraps secret AI recruiting tool that showed bias against women

https://www.reuters.com/article/us-amazon-com-jobs-automation-insight/amazon-scraps-secret-ai-recruiting-tool-that-showed-bias-against-women-idUSKCN1MK08G

Amazon reportedly scraps internal AI recruiting tool that was biased against women - The Verge

https://www.theverge.com/2018/10/10/17958784/ai-recruiting-tool-bias-amazon-report

In 2014, Amazon's team of machine learning experts began building an algorithm that evaluates job seekers' résumés in order to find top talent. Because automation is extremely important to Amazon, automating hiring with AI is said to have been a natural step.

However, in 2015 the company discovered that this dream algorithm, a recruiting engine meant to automatically pick out the top 5% of candidates from their résumés, was biased against women. For technical posts, including software developer jobs, Amazon's system was not rating candidates in a gender-neutral way.

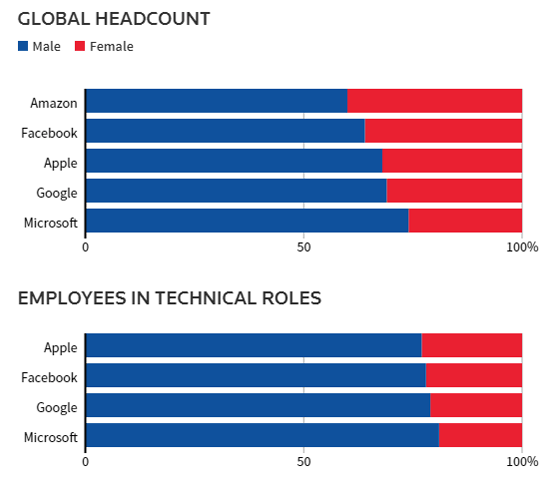

The AI system came to disfavor women because Amazon's computer models were trained on patterns in résumés from the previous 10 years, a decade in which men dominated the technology industry.

Amazon's system favored male candidates and penalized résumés that contained the word "women's," as in "women's chess club captain." According to people familiar with the matter, it also gave lower ratings to candidates who had graduated from women's colleges.
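To illustrate how a model trained on skewed hiring history can end up penalizing a single word, here is a minimal Python sketch. It is not Amazon's system, whose details have not been disclosed: the `HISTORY` data, the `token_weights` and `score` functions, and the smoothed log-odds scoring are all hypothetical and chosen only for illustration.

```python
import math
from collections import Counter

# Hypothetical toy history: (tokens on a resume, was the candidate hired?).
# The data is invented for illustration and deliberately skewed.
HISTORY = [
    ({"python", "chess", "captain"}, True),
    ({"java", "chess", "captain"}, True),
    ({"python", "robotics"}, True),
    ({"java", "robotics"}, True),
    ({"python", "women's", "chess"}, True),
    ({"python", "women's", "chess", "captain"}, False),
    ({"java", "women's", "robotics"}, False),
    ({"java", "debate"}, False),
]


def token_weights(history, smoothing=1.0):
    """Return a smoothed log-odds weight for each token seen in the history.

    A positive weight means the token appeared more often on resumes of
    hired candidates; a negative weight means the opposite.
    """
    hired, rejected = Counter(), Counter()
    n_hired = n_rejected = 0
    for tokens, was_hired in history:
        if was_hired:
            n_hired += 1
            hired.update(tokens)
        else:
            n_rejected += 1
            rejected.update(tokens)

    weights = {}
    for tok in set(hired) | set(rejected):
        p_hired = (hired[tok] + smoothing) / (n_hired + 2 * smoothing)
        p_rejected = (rejected[tok] + smoothing) / (n_rejected + 2 * smoothing)
        weights[tok] = math.log(p_hired / p_rejected)
    return weights


def score(tokens, weights):
    """Score a resume as the sum of its token weights (unknown tokens count 0)."""
    return sum(weights.get(tok, 0.0) for tok in tokens)


WEIGHTS = token_weights(HISTORY)

# Two resumes that differ only in the word "women's".
plain = score({"python", "chess", "captain"}, WEIGHTS)
flagged = score({"python", "women's", "chess", "captain"}, WEIGHTS)
print(f"weight of \"women's\": {WEIGHTS[chr(119) + 'omen' + chr(39) + 's']:.3f}")
print(f"plain resume: {plain:.3f}, same resume with \"women's\": {flagged:.3f}")
```

In this toy data the word "women's" appears mostly on rejected résumés, so it receives a negative weight, and an otherwise identical résumé scores lower when it contains that word. Nothing in the code mentions gender; the penalty comes entirely from the historical pattern.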

Amazon edited the programs to make them neutral to these particular words, but there was no guarantee that the system would not find other ways to treat candidates in a discriminatory manner. The team was therefore disbanded at the beginning of 2017. As of 2018, Amazon's recruiters are said to look at the recommendations generated by the AI tool when hiring, but not to rely on its rankings alone.

Amazon declined to comment on the tool's technical challenges, saying only that "Amazon never used this tool to evaluate candidates."

According to a 2017 survey by the job search site CareerBuilder, 55% of HR managers at American companies believe that artificial intelligence will become a regular part of their work within five years. However, computer scientist Nihar Shah says there is still a long way to go before algorithms can be made fair and genuinely interpretable. Other scientists have also raised concerns that machine learning reproduces human prejudice.

AI learns sexism and racism from human language - GIGAZINE


in Software, Web Service, Posted by darkhorse_log