Why does reinforcement learning, which was effective in chess and Go, fail in simple games?

While AI has demonstrated superior strength to humans in board games such as chess and Go, research has also shown that it has weaknesses.

Impartial Games: A Challenge for Reinforcement Learning | Machine Learning | Springer Nature Link

https://link.springer.com/article/10.1007/s10994-026-06996-1

Figuring out why AIs get flummoxed by some games - Ars Technica

https://arstechnica.com/ai/2026/03/figuring-out-why-ais-get-flummoxed-by-some-games/

Investigating whether you can beat an AI in a board game might seem like mere intellectual curiosity. However, understanding AI's weaknesses can help identify common failure patterns and devise ways to improve AI training methods. As more and more people rely on AI in their daily lives and work, the importance of exploring ways to improve AI is increasing.

The research team focused on a very simple game called 'Nim.' In Nim, two players take turns removing one or more coins or stones from a single pile, chosen from one or more finite piles. This process is repeated until one of the players removes the last coin or stone, and that player wins.

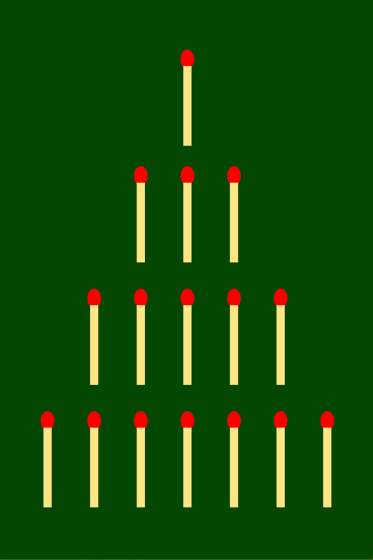

A visually simpler version of Nim arranges matchsticks in a pyramid, with one matchstick in the top layer, three in the second, five in the third, seven in the fourth, and so on. On each turn, a player takes any number of matchsticks from a single layer. In this version each layer acts as a Nim pile, and just as in the multi-pile version, the player who takes the last matchstick wins.

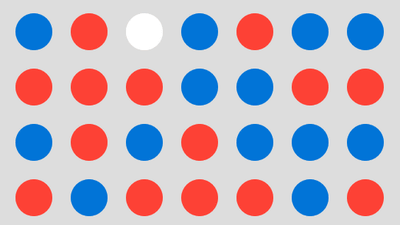
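The pyramid layout can be written down as a plain list of layer sizes. The following is a minimal Python sketch, assuming layers of 1, 3, 5, ... matchsticks as described above. The names `pyramid`, `legal_moves`, and `apply_move` are illustrative and do not come from the paper.

```python
from typing import List, Tuple

Move = Tuple[int, int]  # (layer index, number of matchsticks taken)


def pyramid(layers: int) -> List[int]:
    """Return the layer sizes 1, 3, 5, ... for a pyramid with `layers` layers."""
    return [2 * i + 1 for i in range(layers)]


def legal_moves(piles: List[int]) -> List[Move]:
    """List every move: take 1..n matchsticks from one layer holding n."""
    return [(i, k) for i, n in enumerate(piles) for k in range(1, n + 1)]


def apply_move(piles: List[int], move: Move) -> List[int]:
    """Return the position after a move, leaving the input list untouched."""
    i, k = move
    if not 0 <= i < len(piles) or not 1 <= k <= piles[i]:
        raise ValueError(f"illegal move {move} for position {piles}")
    new_piles = list(piles)
    new_piles[i] -= k
    return new_piles


if __name__ == "__main__":
    board = pyramid(4)
    print(board)                      # layer sizes of a 4-layer pyramid
    print(len(legal_moves(board)))    # number of opening moves
    print(apply_move(board, (3, 7)))  # clear the bottom layer
```

Because both players choose from the same list of legal moves, the position alone (not whose pieces are whose) describes the game.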

Games like Nim, in which both players share the same pieces and the available moves are the same regardless of whose turn it is, are called impartial games.

One of the characteristics of impartial games like Nim is that the board state at any point in the game can be evaluated exactly to determine which player is in a winning position. In other words, by inputting the board state into a specific function (a parity function), you can calculate the optimal move and whether the player to move can force a win.

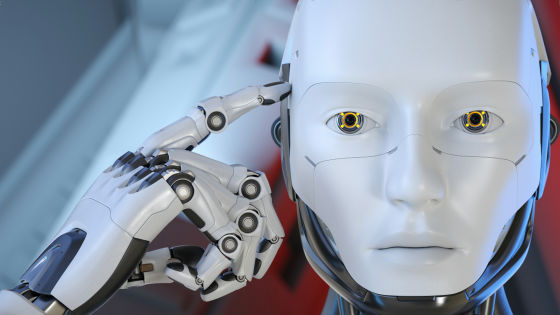
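For Nim, the standard evaluation function is the nim-sum: the bitwise XOR of all pile sizes. When the last stone taken wins, the player to move can force a win exactly when the nim-sum is nonzero. A minimal Python sketch follows; the function names are illustrative and are not taken from the paper.

```python
from functools import reduce
from operator import xor
from typing import List


def nim_sum(piles: List[int]) -> int:
    """Bitwise XOR of all pile sizes (0 for an empty board)."""
    return reduce(xor, piles, 0)


def player_to_move_wins(piles: List[int]) -> bool:
    """True when the player to move can force a win with perfect play."""
    return nim_sum(piles) != 0


if __name__ == "__main__":
    print(nim_sum([1, 3, 5, 7]))           # 4-layer pyramid
    print(player_to_move_wins([1, 3, 5]))  # 3-layer pyramid
```

XOR computes, for each binary digit, whether an odd or even number of piles have that bit set, which is why the evaluation can be described as a parity function.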

In this study, the research team investigated whether reinforcement learning, as used in AlphaGo, could learn the parity function needed to win impartial games like Nim. Reinforcement learning is a machine learning technique in which an AI is given rules and constraints and repeatedly searches, through trial and error, for the best way to reach its goal. When training a chess AI with reinforcement learning, the AI is given the rules of chess and plays against itself over and over, which lets it associate board configurations with win rates.

In the case of the pyramid version of Nim, one might expect it to be easier for AI than chess or Go, because the optimal moves in any given board configuration are limited. However, this study found that while reinforcement learning worked well with a 5-tier pyramid, the rate of improvement slowed dramatically at 6 tiers, and at 7 tiers, improvement had almost completely stopped after 500 self-plays.

To make the problem easier to understand, the research team replaced the subsystem that suggests candidate moves with a 'randomly operating subsystem.' They found that, even after 500 self-plays, the reinforcement-trained version and the randomly operating version performed no differently on 7-tier Nim. Although 7-tier Nim is known to have three moves from the initial state that lead to an eventual win, the AI gave almost equal evaluations to all possible moves in the initial state.
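The count of three winning opening moves can be checked with the standard Nim rule that a position is lost for the player to move when the XOR of all pile sizes is zero. The sketch below assumes the 7-tier board has tiers of 1, 3, 5, 7, 9, 11, and 13 matchsticks, and lists every move that hands the opponent such a zero-XOR position. `winning_moves` is an illustrative name, not something from the paper.

```python
from functools import reduce
from operator import xor
from typing import List, Tuple


def winning_moves(piles: List[int]) -> List[Tuple[int, int]]:
    """Return (tier index, matchsticks taken) pairs that leave XOR == 0."""
    total = reduce(xor, piles, 0)
    moves = []
    for i, n in enumerate(piles):
        target = n ^ total  # size this tier must shrink to
        if target < n:      # reachable only by removing matchsticks
            moves.append((i, n - target))
    return moves


if __name__ == "__main__":
    # Assumed layout: tiers of 1, 3, 5, ..., 13 matchsticks.
    seven_tiers = [2 * i + 1 for i in range(7)]
    for tier, taken in winning_moves(seven_tiers):
        print(f"take {taken} from tier {tier + 1}")
```

Under that assumed layout the function returns exactly three moves (taking 3 from the fifth tier, 7 from the sixth, or 11 from the seventh), consistent with the count reported above. Every other opening move leaves a nonzero XOR and therefore hands the opponent a winning position, so near-equal evaluations across all moves mean the network has not captured this structure.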

When interpreting these conclusions, one might think that impartial games like Nim are an exception. However, the research team points out that similar tendencies can occur in chess AI, whose board evaluations sometimes give high ratings to moves that 'miss checkmate.' In chess, these mistakes are less likely to matter because there are many possible future branches, but in games like Nim, where there is always a best move, they become more apparent.

The research team stated, 'AlphaZero excels at associative learning. However, it fails at problems requiring symbolic reasoning, which cannot be implicitly learned from the correlation between game states and outcomes.' In other words, even if simple rules govern the game, reinforcement learning alone may not be enough for the AI to arrive at those rules.

Technology media outlet Ars Technica points out that while many are exploring the usefulness of AI in solving mathematical problems, AI may fail at symbolic reasoning. 'It may be difficult to clearly demonstrate how to get AI to do such reasoning, but it is useful to know which methods obviously don't work,' they said.
