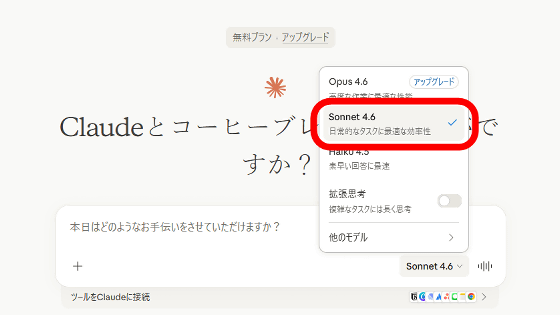

Claude Opus 4.6 and Claude Sonnet 4.6 now accept up to 1 million tokens of input.

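On March 13, 2026, Anthropic made the 1 million token context window generally available for Claude Opus 4.6 and Claude Sonnet 4.6. Users can now give either model a single input of up to 1 million tokens.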

1 million context window: Now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.

pic.twitter.com/jreruGukcm — Claude (@claudeai) March 13, 2026

1M context is now generally available for Opus 4.6 and Sonnet 4.6 | Claude

https://claude.com/blog/1m-context-ga

The 1 million token context is available now in both Claude Opus 4.6 and Claude Sonnet 4.6, and long-context input carries no additional charge. Previously, using the 1 million token context incurred an additional usage fee.

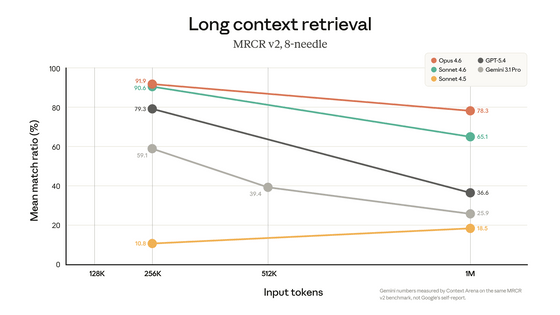

Claude Opus 4.6 scored 78.3% on MRCR v2, a benchmark that measures long-context comprehension over massive amounts of text. That score makes it the top-performing model at a context length of 1 million tokens. Its performance also degrades less than that of Gemini 3.1 Pro and GPT-5.4 as the number of input tokens grows.

Both Claude Opus 4.6 and Claude Sonnet 4.6 were advertised as supporting a 1 million token context from their initial release.

Anthropic announces Claude Opus 4.6, improving performance not only for coding but also for financial processing and document creation, and supporting context windows with up to 1 million tokens - GIGAZINE

Anthropic states that the models 'can directly read and use entire codebases, thousands of pages of contracts, or the observations and intermediate reasoning of long-running agents. This eliminates the engineering work, lossy summarization, and context clearing previously required for long-context work, so the entire conversation can be preserved as is.'

The 1 million token context is available on the Claude Platform, Amazon Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry. In Claude Code, Max, Team, and Enterprise users running Claude Opus 4.6 have the 1 million token context enabled by default.
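For developers using the Claude Platform directly, a long-context request is an ordinary Messages API call with a very large prompt. The following is a minimal sketch that builds such a request without sending it. It makes several assumptions. The model ID `claude-opus-4-6` is hypothetical, so check Anthropic's model list for the real identifier. The sketch also assumes that, with the feature now generally available, no beta header is required. The helper names `estimate_tokens` and `build_long_context_request` are illustrative. The 4-characters-per-token figure is only a rough heuristic, not Anthropic's tokenizer.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
# Hypothetical model ID for illustration; confirm against Anthropic's model list.
MODEL_ID = "claude-opus-4-6"
CONTEXT_LIMIT_TOKENS = 1_000_000
# Rough heuristic (~4 characters per token), NOT the real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN + 1


def build_long_context_request(api_key: str, document: str, question: str,
                               max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a Messages API request carrying a long document."""
    prompt = f"<document>\n{document}\n</document>\n\n{question}"
    # The context window covers input plus output, so reserve room for max_tokens.
    if estimate_tokens(prompt) + max_tokens > CONTEXT_LIMIT_TOKENS:
        raise ValueError("input likely exceeds the 1M-token context window")
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )
```

To actually send it, you would pass the returned object to `urllib.request.urlopen(req)`. In practice, Anthropic's official SDKs or its token-counting endpoint give a more reliable picture of how many tokens an input uses than the character heuristic above.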


in AI, Posted by log1p_kr