Over 100 scientists propose framework to restrict access to dangerous biological data to prevent AI from developing bioweapons

While AI is expected to accelerate research across many scientific fields, there are also concerns that it could be misused for cybercrime and weapons development. To address this issue, more than 100 scientists from universities including Oxford, Stanford, Columbia, Johns Hopkins, and New York University have proposed a framework that would impose tiered restrictions on the biological data that can be used to train AI models.

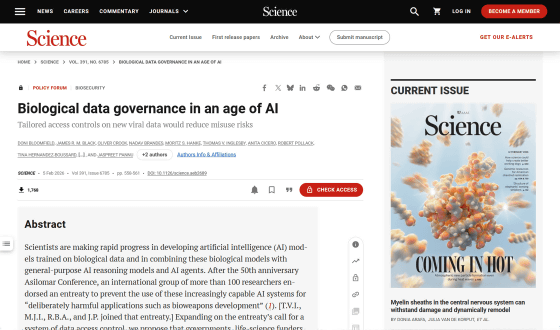

Biological data governance in an age of AI | Science

The biosecurity gap in AI governance

https://www.axios.com/2026/02/17/ai-data-viruses-biosecurity

Researchers Propose Biosecurity Data Levels to Control AI Access to Pathogen Datasets

https://www.implicator.ai/researchers-propose-biosecurity-data-levels-to-control-ai-access-to-pathogen-datasets/

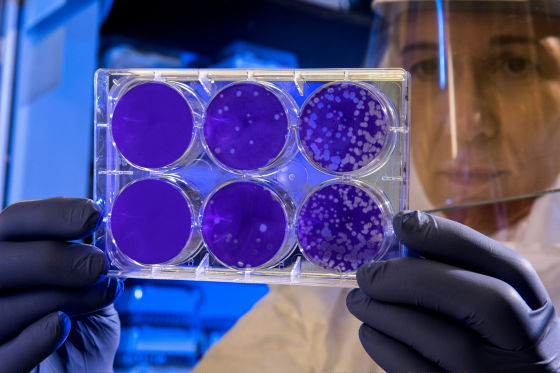

More than 100 researchers have proposed a framework in the journal Science to prevent AI from developing biological weapons and deadly viruses. The framework, called 'Biosecurity Data Levels,' is modeled after biosafety levels, which define containment levels for laboratories and facilities that handle bacteria and viruses.

Under the Biosecurity Data Levels, biological data is classified into five tiers (BDL), with different restrictions on access to each data tier. The BDL classifications are as follows:

- BDL-0: The lowest level, which covers the vast majority of biological data and imposes no restrictions.

- BDL-1: Data that helps AI models learn common patterns of viral infections in eukaryotic organisms. Access requires a registered account and government-issued ID.

- BDL-2: Data related to pandemic potential, such as virus hosts and environmental stability. Access adds institutional affiliation checks and fraud screening to the BDL-1 requirements.

- BDL-3: Data that maps functions such as the transmissibility, virulence, and immune evasion of human-infectious viruses to specific gene sequences. In addition to the requirements up to BDL-2, access requires confirmation of legitimate use, work in a research environment where scientists cannot access the raw data, and a risk assessment of AI models created using BDL-3 data before they are released.

- BDL-4: Data that enables AI to design virus variants with pandemic potential. In addition to the requirements up to BDL-3, the AI models created are subject to pre-access review by the government. Authorities will decide who has access to AI models trained on BDL-4 data.
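Because each tier adds requirements on top of the tier below it, the scheme can be read as a cumulative checklist. The following is a minimal Python sketch of that reading, not part of the proposal itself: the names `BDL`, `ADDED_REQUIREMENTS`, `requirements_for`, and `missing_requirements`, along with the requirement labels, are hypothetical and simply paraphrase the list above.

```python
from enum import IntEnum


class BDL(IntEnum):
    """Hypothetical encoding of the five Biosecurity Data Levels."""
    BDL_0 = 0
    BDL_1 = 1
    BDL_2 = 2
    BDL_3 = 3
    BDL_4 = 4


# Requirements each tier adds on top of the tier below it.
# The labels are illustrative paraphrases of the article's list.
ADDED_REQUIREMENTS = {
    BDL.BDL_0: (),
    BDL.BDL_1: ("registered_account", "government_id"),
    BDL.BDL_2: ("institutional_affiliation_check", "fraud_screening"),
    BDL.BDL_3: (
        "legitimate_use_confirmation",
        "secure_research_environment",
        "pre_release_model_risk_assessment",
    ),
    BDL.BDL_4: ("government_review_of_trained_models",),
}


def requirements_for(level):
    """Return every requirement that applies at `level`, lowest tier first."""
    reqs = []
    for tier in BDL:  # IntEnum members iterate in definition order
        if tier <= level:
            reqs.extend(ADDED_REQUIREMENTS[tier])
    return reqs


def missing_requirements(level, satisfied):
    """Return the requirements for `level` that are not in `satisfied`."""
    return [r for r in requirements_for(level) if r not in satisfied]
```

For example, under these assumptions a researcher who holds only a registered account and a government-issued ID would still be missing the two checks that BDL-2 adds, so `missing_requirements(BDL.BDL_2, {"registered_account", "government_id"})` returns those two labels.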

These security measures are inspired by the multiple layers of safety required when handling dangerous pathogens such as the Ebola virus, which causes Ebola hemorrhagic fever. These layers include airlocks, chemical showers, and pressure suits. While most biological data is not restricted, dangerous data is subject to strict containment, just like real viruses and bacteria. The Biosecurity Data Levels apply only to data collected after government approval; existing datasets are unaffected.

AI media outlet Implicator.ai points out that an important aspect of the Biosecurity Data Levels is that data is classified not by the type of virus it concerns, but by whether it 'gives AI models the ability to design dangerous biological weapons or viruses.' In other words, even data on an inherently harmless virus could be classified as BDL-3 or BDL-4 if an AI model trained on it is likely to generalize to more dangerous pathogens, as with infectivity data on specific genetic mutations.

Some opponents of data regulation argue that restricting access to data is pointless because it doesn't impair AI capabilities. However, the scientists who proposed the Biosecurity Data Levels point out that experiments have shown that AI models trained without data such as virus-specific proteins and the genetic sequences of viruses that infect eukaryotes perform worse on virus-related tasks.

'Collecting data to map pathogen genotypes to real-world traits is time-consuming and requires expertise across multiple fields of biology, so few laboratories are able to do this on a large scale. At the time of writing, there are no large-scale datasets that would allow AI models to engineer dangerous pathogens, so the rapid deployment of a framework could prevent AI models from developing biological weapons.'

Some well-known developers of biological AI models have chosen to exclude virus-related data without waiting for regulation, due to concerns about the development of biological weapons. While this is a wise decision, not all developers share the same conscience. Others may try to fine-tune public AI models with virus-related datasets they have obtained themselves, giving the models capabilities not available in the originals.

'Legitimate researchers should be given access to the data, but it shouldn't be posted anonymously on the internet where it can't be traced back to who downloaded it,' said

While it may seem difficult to implement the Biosecurity Data Levels, the US National Institutes of Health's (NIH) 'All of Us' already stores data in three tiers, and Oxford University's 'OpenSAFELY' strictly controls access to datasets. Researchers who want to use OpenSAFELY data can submit code to the platform and view the output after it has been reviewed by staff, but they do not have access to the raw data.

At the Munich Security Conference in 2025, a group of 14 experts considered a scenario in which extremist groups use AI to create a new enterovirus, sparking a pandemic that could infect 850 million people and kill 60 million. The group found this scenario to be highly concerning and worthy of immediate action.

Implicator.ai supported the introduction of biosecurity data levels, stating, 'Once you publish biological data, it cannot be taken back. It can be instantly replicated, stored by a third party, and incorporated into training datasets without anyone noticing. Pathogens can be contained behind airlocks, and a lab's BSL-4 certification can be revoked, but published datasets cannot be undone.'
